Every second of load time costs you. During a traffic spike, an uncached origin server can buckle under the pressure, and CDN caching can offload 70% to 90% of that traffic before it ever reaches your origin infrastructure. For a user in New York pulling content from a server in Singapore, the round-trip penalty is brutal. Edge caching cuts that round-trip time by up to 80%, turning a sluggish experience into one that feels instant.

The stakes are real whether you're running an e-commerce store, a media platform, or a SaaS product. Without effective caching, every visitor hits your origin server directly, bandwidth costs climb, and slow load times quietly drive users away. With it, static assets like images and CSS can hit 95%+ cache effectiveness, and well-optimized setups routinely achieve cache hit ratios above 90%.

This guide covers everything you need to make CDN caching work for you: how it actually functions, what content caches well (and what doesn't), how to configure rules and TTL values effectively, and the best practices that separate a high-performing cache from one that causes stale content headaches.

What is CDN caching?

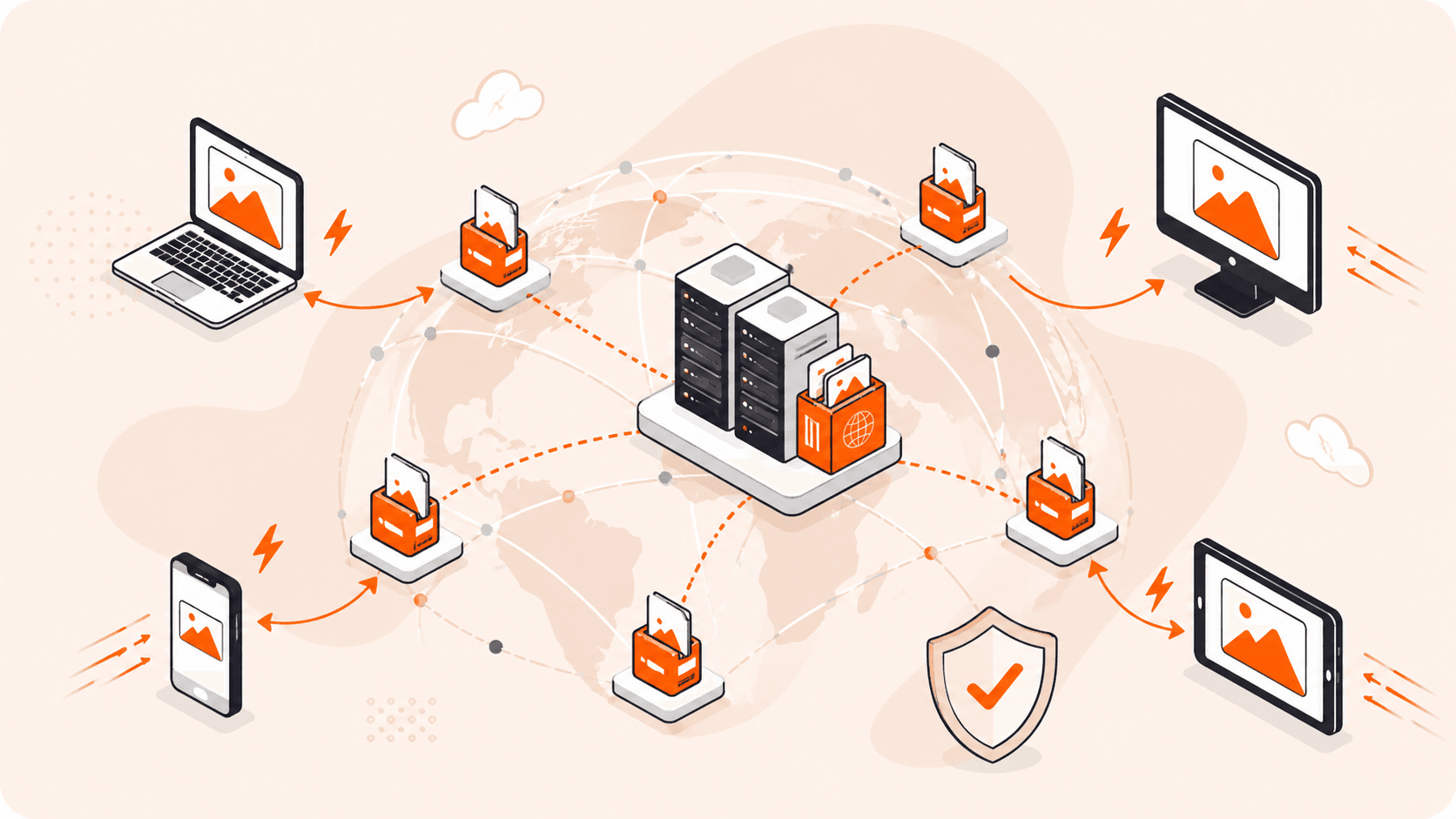

CDN caching stores copies of your website's content (images, videos, JavaScript, CSS, HTML) on edge servers distributed across the globe, so users get files from a nearby server instead of your origin. Think of it like a local warehouse network: instead of shipping every order from one central facility, you stock goods closer to your customers.

When a user requests a file, the edge server checks if it has a cached copy. A cache hit serves it instantly; a cache miss fetches it from your origin, caches it, then delivers it. With well-configured caching rules, cache hit ratios above 90% are common for static content, and CDN caching can offload 70% to 90% of traffic from your origin during peak loads.

The freshness of cached content is controlled by HTTP Cache-Control headers, which set a Time to Live (TTL): how long a file stays valid before the edge server checks for an update.
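To make the TTL idea concrete, here's a minimal Python sketch of how a shared cache might read a TTL out of a `Cache-Control` value. The function names are made up for illustration, and the logic is simplified: it only looks at `s-maxage`, `max-age`, `private`, and `no-store`, and it returns 0 when no lifetime is given, whereas a real CDN may apply its own defaults.

```python
from typing import Dict, Optional


def parse_cache_control(header: str) -> Dict[str, Optional[str]]:
    """Split a Cache-Control header value into a directive -> value mapping."""
    directives: Dict[str, Optional[str]] = {}
    for part in header.split(","):
        part = part.strip()
        if not part:
            continue
        name, sep, value = part.partition("=")
        directives[name.strip().lower()] = value.strip().strip('"') if sep else None
    return directives


def shared_cache_ttl(header: str) -> int:
    """Return the TTL in seconds a shared cache (such as a CDN edge) would use.

    s-maxage takes precedence over max-age for shared caches; private and
    no-store mean the response should not be stored at the edge at all.
    """
    directives = parse_cache_control(header)
    if "no-store" in directives or "private" in directives:
        return 0
    for name in ("s-maxage", "max-age"):
        value = directives.get(name)
        if value is not None and value.isdigit():
            return int(value)
    return 0  # simplification: no explicit lifetime means "do not cache"
```

With this sketch, `public, max-age=3600` yields a one-hour TTL, while `public, max-age=60, s-maxage=86400` lets browsers cache for a minute and the edge for a day.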

In simple terms: CDN caching saves copies of your website's files on servers around the world, so visitors load your content from nearby instead of waiting for it to travel from a single distant server.

How does CDN caching work?

CDN caching intercepts each user request at the nearest edge server, called a Point of Presence (PoP), before it ever reaches your origin.

Here's how the sequence plays out. A user in London requests your homepage. The CDN routes that request to the closest PoP, maybe 10 miles away instead of 5,000. If the edge server has a cached copy, it responds immediately. That's your cache hit. If not, it fetches the file from your origin, stores a local copy, and delivers it. Every subsequent request from that region gets served from the edge.

So what controls all of this? Your Cache-Control headers. You set a TTL per file type: longer for static assets like images that rarely change, shorter for pages that update frequently. The edge server honors that TTL, serving cached content until it expires. When it does, the server revalidates with your origin and refreshes its copy.

Push an update before the TTL expires? You can manually purge the cache to force edge servers to fetch fresh content right away.
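The hit, miss, expiry, and purge sequence above can be sketched as a toy model of a single edge server. This isn't how any particular CDN is implemented: the `EdgeCache` class, its single global TTL, and the injected `clock` are assumptions made for illustration, and an expired entry is simply refetched rather than revalidated with a conditional request.

```python
import time
from typing import Callable, Dict, Tuple


class EdgeCache:
    """Toy model of one edge server's cache: hit, miss, expiry, purge."""

    def __init__(
        self,
        fetch_from_origin: Callable[[str], bytes],
        ttl_seconds: float,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._fetch = fetch_from_origin
        self._ttl = ttl_seconds
        self._clock = clock
        # path -> (body, expires_at)
        self._store: Dict[str, Tuple[bytes, float]] = {}

    def get(self, path: str) -> Tuple[bytes, str]:
        """Return the body plus "HIT" or "MISS" for this request."""
        entry = self._store.get(path)
        if entry is not None and self._clock() < entry[1]:
            return entry[0], "HIT"
        # Missing or expired: go to the origin and store a fresh copy.
        body = self._fetch(path)
        self._store[path] = (body, self._clock() + self._ttl)
        return body, "MISS"

    def purge(self, path: str) -> None:
        """Drop the cached copy so the next request goes to the origin."""
        self._store.pop(path, None)
```

The first `get("/")` is a miss that calls the origin, repeat requests are hits until the TTL runs out, and `purge("/")` forces the next request back to the origin regardless of the remaining TTL.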

In simple terms: a CDN intercepts requests at the nearest server, serves cached files instantly, and only contacts your origin when it doesn't have a valid copy, or when you tell it to refresh.

What are the key benefits of CDN caching?

CDN caching delivers real, measurable advantages for both your users and your infrastructure costs. Here's what you actually get.

- Faster load times: Edge servers serve cached files from locations close to your users, cutting round-trip travel time dramatically. Sites with globally distributed caches can reduce latency by 50% to 70% compared to serving everything from a single origin.

- Reduced origin load: When edge servers handle requests directly, your origin only receives traffic for cache misses. During peak traffic, CDN caching can offload 70% to 90% of requests, so your origin handles a fraction of what it would otherwise.

- Better scalability: Cached content gets served from dozens or hundreds of edge locations simultaneously, so your infrastructure handles traffic spikes without straining a single server. Think of a media platform serving millions of concurrent users: edge caching spreads that load across locations instead of hammering one origin.

- Lower bandwidth costs: Serving files from edge servers instead of your origin reduces the volume of data traveling across long-haul network paths. Distributed caching typically delivers bandwidth savings of 60% to 80%.

- Higher cache hit ratios: Static assets like images, CSS, and JavaScript files achieve 95%+ cache effectiveness when you configure TTLs correctly. A high hit ratio (above 80%) means most users never trigger an origin fetch at all.

- Improved availability: If your origin slows down or goes briefly offline, edge servers can keep serving cached content to your users. It's not a full failover solution, but it buys you time and keeps pages loading.

- Reduced security exposure: Because all traffic flows through the CDN's edge servers, your origin's IP address isn't directly exposed in DNS, shrinking its direct attack surface. Cached content also means fewer connections reaching your origin during normal traffic.

| Benefit | What it does | Best for |
|---|---|---|
| Faster load times | Cuts latency by serving from nearby edge servers | Global audiences, media-heavy sites |
| Reduced origin load | Offloads 70-90% of requests to edge | High-traffic apps, peak events |
| Better scalability | Distributes load across edge locations | Traffic spikes, viral content |
| Lower bandwidth costs | Saves 60-80% by reducing origin transfers | Video, image-heavy platforms |
| Higher cache hit ratios | 95%+ effectiveness for static assets | E-commerce, static site delivery |
| Improved availability | Serves cached content during origin slowdowns | Business-critical sites |
| Reduced security exposure | Hides origin IP behind CDN layer | Any public-facing web property |

What types of content can be cached by a CDN?

CDNs can cache a wide range of content types. What caches well, though, comes down to two things: how often the content changes and whether every user gets the same response.

Static assets are the sweet spot. Images, CSS files, JavaScript bundles, fonts, and PDFs don't change between requests, so they cache cleanly and stay valid for a long time. With proper TTL configuration, you're looking at 95%+ cache effectiveness for these assets.

Video and audio files also cache well. Streaming platforms cache media files regionally so users get fast, buffer-free playback without every request hitting a central origin. For large files, that makes a real difference in both performance and origin load.

Some content sits in the middle. Product listings, category pages, and news articles change occasionally, not constantly. You can cache these for minutes or hours, then purge them when the underlying data updates. Shorter TTLs, but still worth caching.

What doesn't work? Personalized content, shopping cart data, authenticated user sessions, and anything else generated uniquely per request. Serving someone's account dashboard from a shared edge cache would expose private data to other users. For these, requests need to go straight to your origin.

Here's the practical rule: if the response is identical for every user who requests it, it's a good cache candidate. If it depends on who's asking, keep it off the cache.
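One way to turn that rule into a first-pass check is sketched below in Python. It's a heuristic, not a complete policy: the function name `is_cache_candidate` is hypothetical, and the signals it uses (request method, an `Authorization` header, a `Set-Cookie` response header, and `private` or `no-store` directives) are assumptions about what usually marks a per-user response.

```python
from typing import Mapping


def is_cache_candidate(
    method: str,
    request_headers: Mapping[str, str],
    response_headers: Mapping[str, str],
) -> bool:
    """Heuristic: True when the response looks identical for every user."""
    req = {k.lower(): v for k, v in request_headers.items()}
    resp = {k.lower(): v for k, v in response_headers.items()}

    # Only safe, read-only methods are considered.
    if method.upper() not in ("GET", "HEAD"):
        return False
    # Credentials on the request suggest the answer depends on who is asking.
    if "authorization" in req:
        return False
    # A response that sets a cookie is usually tied to one visitor.
    if "set-cookie" in resp:
        return False
    # Respect the origin's explicit opt-out from shared caching.
    cache_control = resp.get("cache-control", "").lower()
    directives = {d.strip().split("=")[0] for d in cache_control.split(",")}
    if directives & {"private", "no-store"}:
        return False
    return True
```

A product image served with `Cache-Control: public, max-age=86400` passes the check; an account page fetched with an `Authorization` header doesn't.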

What are the main CDN caching limitations and challenges?

CDN caching isn't a silver bullet. There are real constraints and failure points that can reduce its effectiveness or create operational headaches. Here's what you're actually dealing with.

- Cache misses: If a user requests content that isn't on the nearest edge server, that request travels all the way back to your origin, adding latency instead of cutting it. This hits hardest with low-traffic content that gets requested so infrequently it expires before anyone asks for it again.

- TTL misconfiguration: Set your TTL too long and users get stale content after you've pushed an update. Too short and your cache hit ratio tanks, dumping more load back on your origin. Getting it right for each content type takes deliberate tuning, not guesswork.

- Dynamic content limitations: Personalized pages, authenticated sessions, and real-time data can't safely live in a shared cache. Those requests always bypass the CDN cache and are proxied straight to your origin. If your workload is heavily dynamic, you're going to see limited caching benefit.

- Cache invalidation complexity: Purging stale content sounds straightforward. It's not. Coordinating invalidation across hundreds of edge servers takes time, and if a purge doesn't propagate instantly after a deployment, some users will still see the old version, sometimes for minutes.

- Geographic cache distribution gaps: Not every CDN has edge servers everywhere. If your users are in a region with no nearby Point of Presence (PoP), they're still experiencing high latency even with caching enabled. PoP density matters just as much as your caching logic.

- Security risks from misconfigured rules: Cache the wrong content (a page with user-specific data, for example) and you risk exposing private information to the wrong people. Without careful cache rules, sensitive responses can end up stored and served to unintended audiences.

- Origin dependency during cold starts: A freshly deployed edge server, or a cache that's been fully purged, has nothing stored yet. Every request is a miss until the cache warms up, which temporarily sends full traffic straight back to your origin.

- Bandwidth costs for cache misses: CDNs cut bandwidth costs when hit ratios are high. But if your content is highly dynamic or your TTLs are too short, miss rates climb, and you're paying for CDN infrastructure without getting the full performance benefit you expected.

- Versioning and cache busting overhead: To force browsers and edge servers to fetch updated files, you need to change file names or append version strings to URLs. Without a solid versioning plan, deployments can leave users stuck with cached old assets even after you've pushed fixes.

| Challenge | What it does | Best for |
|---|---|---|
| Cache misses | Sends requests to origin instead of edge | High-traffic, frequently requested content |
| TTL misconfiguration | Delivers stale or under-cached content | Content with predictable update schedules |
| Dynamic content limits | Bypasses cache for personalized responses | Static or semi-static page types |
| Cache invalidation complexity | Delays stale content removal across PoPs | Sites with infrequent, controlled updates |
| Geographic coverage gaps | Increases latency in underserved regions | Globally distributed audiences |
| Misconfigured security rules | Risks exposing private cached responses | Public, non-authenticated content only |
| Cold start misses | Spikes origin load after purge or deploy | Pre-warmed or gradually rolled-out caches |
| High miss bandwidth costs | Reduces cost savings from CDN investment | High cache hit ratio workloads |
| Versioning overhead | Requires URL changes to bust stale caches | Assets with clear release versioning |
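The versioning overhead is easier to manage when file names are derived from file contents, so a name changes only when the file does. Here's a small Python sketch of that idea. The helper name `hashed_asset_name` and the 8-character SHA-256 prefix are illustrative choices, not a standard; most build tools offer an equivalent feature.

```python
import hashlib
from pathlib import PurePosixPath


def hashed_asset_name(filename: str, content: bytes, digest_chars: int = 8) -> str:
    """Insert a short content hash before the extension, e.g. app.<hash>.js."""
    digest = hashlib.sha256(content).hexdigest()[:digest_chars]
    path = PurePosixPath(filename)
    # Keep the directory and extension, change only the file name itself.
    return str(path.with_name(f"{path.stem}.{digest}{path.suffix}"))
```

Because the URL changes whenever the content changes, edge servers and browsers treat the new build as a brand-new file, and the old one can keep its long TTL until it ages out.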

How to configure CDN caching rules effectively?

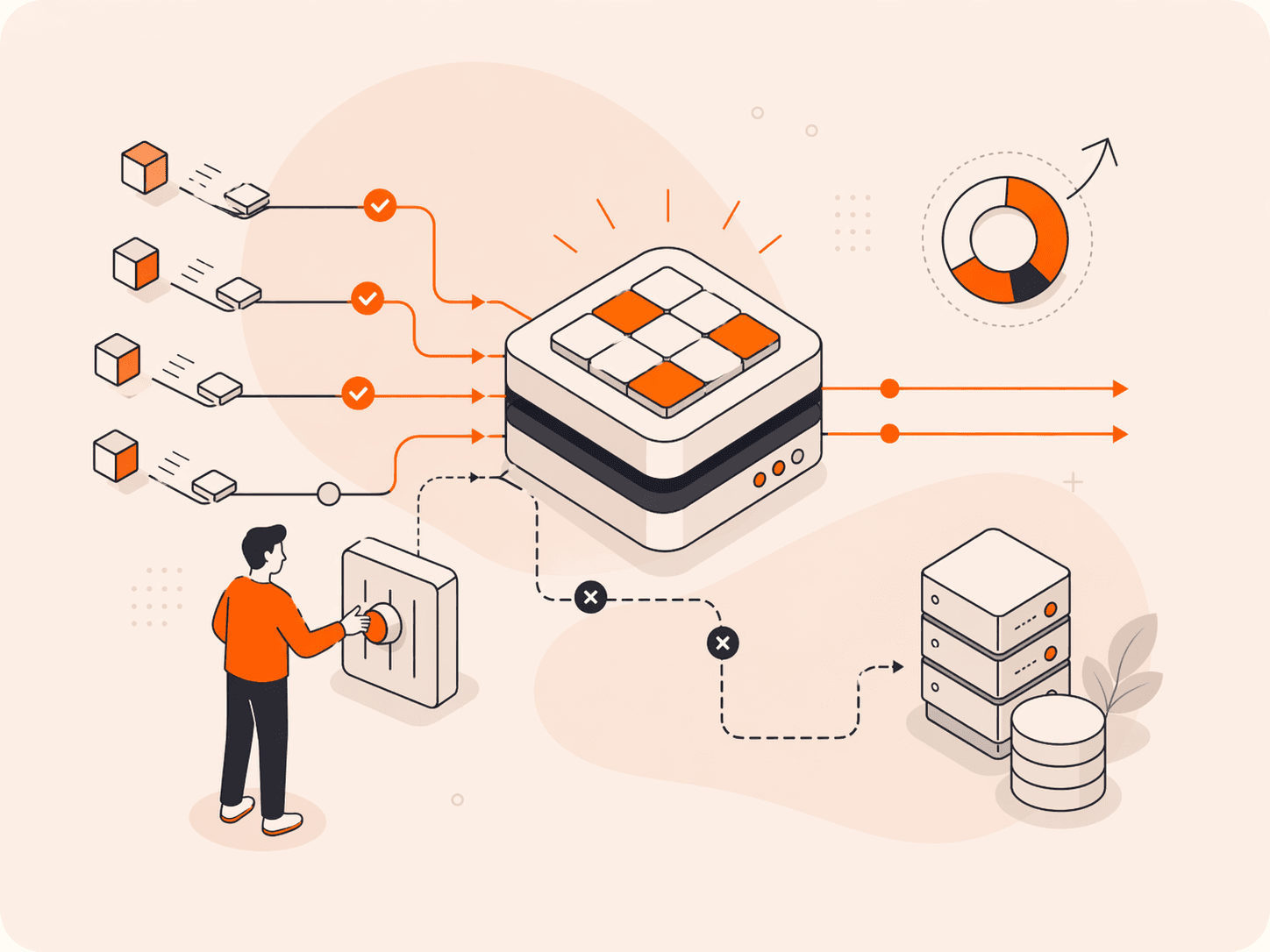

Effective CDN caching comes down to matching cache behavior to your content type, then tuning TTL values and path-based rules until your hit ratio climbs where it needs to be.

- Start by auditing your content types. Separate your assets into three buckets: static files (images, CSS, JavaScript), semi-static pages (product listings, blog posts), and dynamic content (user dashboards, checkout flows). Every caching decision flows from this audit, because each category needs completely different logic.

- Set long TTLs for immutable static assets. Versioned JavaScript bundles and logos don't change between deployments, so there's no reason to fetch them repeatedly. Assign TTLs of 30 days or longer. Done right, static assets routinely reach cache hit ratios above 90%, which means your origin barely sees those requests.

- Use shorter TTLs for semi-static content. Pages that update daily or weekly need TTLs measured in hours, not weeks. Somewhere between one and 24 hours balances freshness against hit ratio for this content type.

- Define path-based caching rules. Apply rules using URL patterns like `/images/` or `/static/`, or file extensions like `.css` and `.js`. Path-based rules give you precise control without blanket policies that accidentally cache the wrong responses.

- Exclude dynamic and authenticated content explicitly. Add rules that bypass the cache entirely for paths like `/account/*`, `/cart`, or `/checkout`. If you don't do this explicitly, a misconfigured rule can cache user-specific responses and serve them to the wrong person. That's a serious problem.

- Add cache-busting through file versioning. Append version strings or content hashes to static asset filenames, so `app.js` becomes `app.v2.js`. This forces edge servers and browsers to fetch the updated file without waiting for TTL expiration.

- Configure purge triggers for content updates. Set up automated purge calls whenever you deploy site changes. Don't rely on TTL expiration alone. Deployment-triggered purging ensures users get fresh content immediately after an update.

- Monitor your cache hit ratio regularly. Above 80% is a solid baseline; above 90% is the target for static-heavy sites. If your ratio drops, check for overly short TTLs, missing path rules, or a spike in dynamic requests bypassing the cache.
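The path-based and bypass rules from the steps above can be sketched as an ordered rule list. This Python example is only a model of the idea: real CDNs configure rules through their own control panel or API, and the `CacheRule` type, the `/blog/*` path, and the specific TTL values here are assumptions chosen to mirror the examples in the list.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase
from typing import List, Optional

HOUR = 3600
DAY = 24 * HOUR


@dataclass(frozen=True)
class CacheRule:
    pattern: str         # shell-style glob matched against the URL path
    ttl: Optional[int]   # seconds to cache, or None to bypass the cache


# First match wins, so bypass rules come before broader caching rules.
RULES: List[CacheRule] = [
    CacheRule("/account/*", None),
    CacheRule("/cart", None),
    CacheRule("/checkout", None),
    CacheRule("/images/*", 30 * DAY),
    CacheRule("/static/*", 30 * DAY),
    CacheRule("*.css", 30 * DAY),
    CacheRule("*.js", 30 * DAY),
    CacheRule("/blog/*", 1 * HOUR),  # hypothetical semi-static section
]


def ttl_for(path: str, rules: List[CacheRule] = RULES) -> Optional[int]:
    """Return the TTL for a path, or None when it should bypass the cache."""
    for rule in rules:
        if fnmatchcase(path, rule.pattern):
            return rule.ttl
    return None  # conservative default: do not cache unmatched paths
```

Ordering matters here: because the `/account/*` bypass sits above the `*.js` rule, a script served under `/account/` is never cached by accident.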

The key thing to remember: there's no single configuration that works for every site. Start with conservative rules, measure your hit ratio, then iterate. Small TTL adjustments and precise path patterns make a bigger difference than most people expect.
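Measuring the hit ratio is simple arithmetic: hits divided by hits plus misses. The Python sketch below assumes you can extract a cache status such as `HIT` or `MISS` for each request from your CDN logs. The status names are an assumption, so check what your provider actually reports; anything else (a bypassed request, for instance) is left out of the ratio.

```python
from collections import Counter
from typing import Iterable


def cache_hit_ratio(statuses: Iterable[str]) -> float:
    """Share of cacheable requests served from the edge, between 0.0 and 1.0."""
    counts = Counter(status.upper() for status in statuses)
    hits = counts["HIT"]
    total = hits + counts["MISS"]
    # Avoid dividing by zero when no cacheable requests were logged.
    return hits / total if total else 0.0
```

Run it per path prefix or per file type rather than site-wide, and you'll see which rules are pulling the overall number down.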

What are the best practices for CDN caching?

Getting CDN caching right comes down to a handful of core techniques. Here's what actually makes the difference between a cache that performs well and one that quietly causes problems.

- Match TTL to content lifecycle: A logo or versioned CSS file isn't changing anytime soon, so long TTLs make sense. But for sale prices or inventory counts, your TTL should reflect how often that data actually changes. Don't cache something for 24 hours if it updates every 30 minutes.

- Use stale-while-revalidate: This directive lets edge servers serve cached content immediately while fetching a fresh copy in the background. Users don't wait during revalidation, and your hit ratio stays high even as content updates. It's one of the easiest wins you can get.

- Normalize cache keys: Query strings, cookies, and headers can fragment your cache into thousands of near-identical entries. Strip or ignore parameters that don't affect the response. A tracking parameter like `?utm_source=email` shouldn't create a separate cache entry from the same URL without it.

- Set Vary headers carefully: The `Vary` header tells edge servers to cache separate versions based on request headers like `Accept-Encoding` or `Accept-Language`. That's useful when content genuinely differs, but overuse it and you'll fragment your cache fast.

- Cache at the right layer: Edge servers handle geographic distribution well, but some content benefits from regional caching closer to your origin. Know where each cache layer sits and what it's responsible for.

- Test cache behavior in staging first: Deploy caching rule changes to a staging environment before production. A rule that accidentally caches a checkout page is hard to recover from quickly, even with purging. Don't skip this step.

- Log and analyze cache miss patterns: Cache misses aren't just a performance signal. They tell you which rules are missing or misconfigured. Regular miss analysis reveals gaps faster than hit ratio alone.

- Respect security boundaries: Never cache responses that include authentication tokens, session data, or personalized content. A `Cache-Control: private` header on sensitive responses keeps them off shared edge servers entirely.
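Cache key normalization is the easiest of these practices to show in code. The Python sketch below strips tracking parameters and sorts what's left, so equivalent URLs map to one cache entry. The `IGNORED_PARAMS` list is an assumption for illustration, and sorting is only safe if your origin doesn't care about parameter order.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed list of parameters that never change the response body.
IGNORED_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "utm_term", "utm_content"}


def normalize_cache_key(url: str) -> str:
    """Drop tracking parameters and sort the rest so equivalent URLs share a key."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in IGNORED_PARAMS
    ]
    query = urlencode(sorted(kept))
    # Host names are case-insensitive; the fragment never reaches the server.
    return urlunsplit((parts.scheme, parts.netloc.lower(), parts.path, query, ""))
```

With this in place, `https://example.com/shoes?utm_source=email` and `https://example.com/shoes` produce the same key, so the second visitor gets a hit instead of a miss.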

| Practice | What it does | Best for |
|---|---|---|
| Match TTL to content lifecycle | Aligns cache duration with update frequency | Mixed static/dynamic sites |
| Use stale-while-revalidate | Serves cached content during background refresh | Frequently updated pages |
| Normalize cache keys | Prevents cache fragmentation from query strings | Sites with heavy URL parameterization |
| Set Vary headers carefully | Controls version splitting by request header | Multilingual or compressed content |
| Cache at the right layer | Assigns content to the correct cache tier | Multi-region architectures |
| Test in staging first | Catches rule errors before production impact | Any rule change or deployment |
| Log cache miss patterns | Identifies missing or broken cache rules | Ongoing performance tuning |
| Respect security boundaries | Keeps private data off shared edge servers | Authenticated or personalized content |
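To see what stale-while-revalidate changes, it helps to look at the decision an edge server makes from a cached file's age. The Python sketch below models a header like `Cache-Control: max-age=600, stale-while-revalidate=30`. The function name and the three labels are made up for illustration, and support for the directive varies by CDN.

```python
def serve_decision(age: int, max_age: int, stale_while_revalidate: int = 0) -> str:
    """Classify a cached response by its age (all values in seconds).

    "fresh"      -> serve from cache, no origin contact
    "stale-ok"   -> serve from cache now, refresh in the background
    "revalidate" -> contact the origin before serving
    """
    if age < max_age:
        return "fresh"
    if age < max_age + stale_while_revalidate:
        return "stale-ok"
    return "revalidate"
```

With those header values, a file that's 610 seconds old is still served instantly while the edge refreshes it in the background. Without the directive, that same request would wait on the origin.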

How can Gcore help with CDN caching?

Gcore's global edge network spans 180+ points of presence, putting cached content close to your users wherever they are. That geographic reach means cache hits happen at the edge, not after a round trip to your origin.

With Gcore's CDN, you get granular TTL controls, path-based caching rules, and instant purge capabilities so you can tune caching behavior per content type without touching your origin configuration. Cache hit ratios above 90% are achievable for static asset-heavy sites. Origin offload during traffic spikes can reach 70% to 90%, which matters when you're handling a product launch or a live event.

Explore Gcore CDN at gcore.com/cdn.

Frequently asked questions

What is the difference between CDN caching and browser caching?

Browser caching stores files privately on your device and persists across sessions, while CDN caching stores copies on globally distributed edge servers shared across all users. With CDN caching, you get centralized control over TTLs and purging, which makes it far more scalable.

How long does a CDN cache content for?

A CDN caches content for as long as the Time to Live (TTL) value specifies. That can range from a few seconds for dynamic pages to a year or more for static assets like images and CSS. You control this through Cache-Control headers, so immutable files can get very long TTLs (one year, for example) while frequently updated content expires quickly.

When a cached file's TTL expires, the edge server revalidates with the origin on the next request and, if the file has changed, fetches and caches the fresh copy before serving it. If content changes before the TTL runs out, you can also trigger this early by manually purging specific files or paths.

Does CDN caching work for dynamic or personalized content?

CDN caching works well for static assets, but it's not a great fit for personalized or frequently changing content. Things like user dashboards, shopping carts, or real-time data should bypass the cache entirely; otherwise you risk serving the wrong data to the wrong person.

How does CDN caching improve website performance?

CDN caching cuts latency by serving content from edge servers close to your users instead of routing every request back to the origin. That alone can reduce round-trip times by up to 80% for distant locations. During traffic spikes, it offloads up to 90% of requests from your origin server, keeping your site fast and stable under pressure.

What is cache poisoning and how can it be prevented?

Cache poisoning happens when an attacker tricks an edge server into storing malicious or incorrect content, which then gets served to every user who requests it. You can mitigate it by validating and sanitizing all inputs that influence cached responses, stripping unrecognized headers, and setting strict cache-key rules so only trusted request parameters determine what gets cached.

Do I need to configure caching rules manually with a CDN?

Most CDNs apply default caching rules automatically, but you'll get much better results by configuring them yourself. Custom rules, like longer TTLs for static images and shorter ones for frequently updated pages, can push your cache hit ratio above 90%, slashing origin load and improving load times for every user.
