Introduction to KUDO: Automate Day-2 Operations (I)

Operators are one of the most powerful tools for working with Kubernetes, especially for stateful workloads that need ordered, automated rolling updates or graceful deployment, deletion, scaling and termination. The problem, however, is that writing and building Operators requires deep knowledge of Kubernetes internals and many lines of code.

Using Kubebuilder requires writing thousands of lines of controller code in Go, and existing implementations often don’t cover the entire lifecycle. You can also use the Operator Framework to build Operators with Ansible or Helm charts, but both approaches have limitations.

KUDO (Kubernetes Universal Declarative Operator) is an open-source toolkit that makes it easy to build Operators using YAML. It also provides a set of pre-built Operators that you can use out of the box or easily customize to help you standardize operations.

Some of the reasons to try KUDO are:

- Provides abstractions for sequencing lifecycle operations using Kubernetes objects and plans (a kind of runbook).

- Lets you reuse and extend existing base Operators to build custom Operators.

- Provides a kubectl plugin, so you can use ‘kubectl kudo’ to manage, deploy and debug all your workloads.

- Manages workloads as CRDs, which helps you keep everything versioned in your repository.

- Existing Operators can be managed by KUDO.

How does KUDO work?

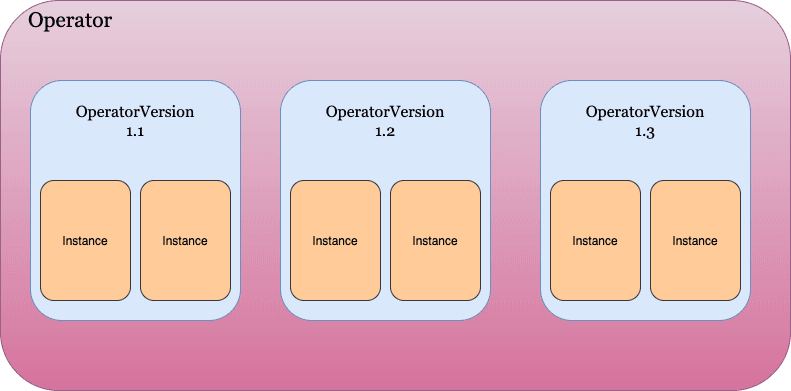

KUDO uses different objects to handle workloads: Operator, OperatorVersion and Instance.

- Operator is represented by a CRD object and is the high-level description of a deployable service.

- OperatorVersion represents the implementation of a specific version of an Operator for a deployable application. It contains objects, plans and parameters.

- Instance is an instantiation of an application, tied to an OperatorVersion. Once created, it renders all parameters in templates such as services, pods or StatefulSets. You can create multiple instances of an OperatorVersion on your Kubernetes cluster.
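To make the relationship between the three objects more concrete, here is a minimal Python sketch. It is not KUDO code: the class fields, the version number, the template text and the use of Python's `string.Template` as a stand-in for KUDO's own templating are all assumptions made for illustration.

```python
from dataclasses import dataclass, field
from string import Template


@dataclass
class Operator:
    # High-level description of a deployable service.
    name: str


@dataclass
class OperatorVersion:
    # One specific version of an Operator: templates plus default parameters.
    operator: Operator
    version: str
    templates: dict[str, str]
    defaults: dict[str, str] = field(default_factory=dict)


@dataclass
class Instance:
    # One instantiation of an OperatorVersion with its own parameter values.
    name: str
    operator_version: OperatorVersion
    parameters: dict[str, str] = field(default_factory=dict)

    def render(self) -> dict[str, str]:
        # Instance parameters override the defaults of the OperatorVersion.
        values = {
            **self.operator_version.defaults,
            **self.parameters,
            "instance": self.name,
        }
        return {
            filename: Template(text).substitute(values)
            for filename, text in self.operator_version.templates.items()
        }


# Hypothetical operator with a single, simplified template.
mysql = Operator(name="mysql")
mysql_v1 = OperatorVersion(
    operator=mysql,
    version="0.1.0",
    templates={"service.yaml": "name: ${instance}-svc\nport: ${port}"},
    defaults={"port": "3306"},
)

# Two instances of the same OperatorVersion, rendered independently.
dev = Instance(name="dev", operator_version=mysql_v1)
prod = Instance(name="prod", operator_version=mysql_v1, parameters={"port": "3307"})

print(dev.render()["service.yaml"])
print(prod.render()["service.yaml"])
```

The point of the sketch is the shape of the model: one Operator, one or more OperatorVersions, and any number of Instances that each render the same templates with their own parameters.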

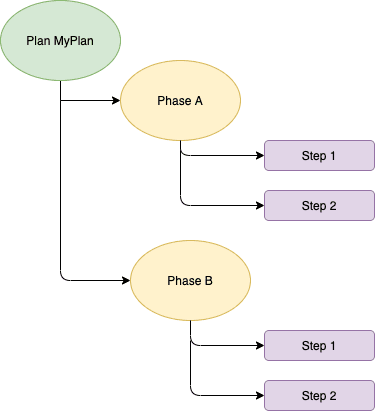

How does KUDO orchestrate ordered tasks?

KUDO uses plans to orchestrate tasks through phases and steps, following a structured runbook. Phases and steps can run serially or in parallel, depending on the needs of your application. Typical plans include deploy, backup, restore and upgrade.

Let us take an example of a couple of plans from a MySQL Operator. Here is an extract of the YAML definition file:

```yaml
plans:
  deploy:
    strategy: serial
    phases:
      - name: deploy
        strategy: serial
        steps:
          - name: deploy
            tasks:
              - deploy
          - name: init
            tasks:
              - init
          - name: cleanup
            tasks:
              - cleanup
  backup:
    strategy: serial
    phases:
      - name: backup
        strategy: serial
        steps:
          - name: pv
            tasks:
              - pv
          - name: backup
            tasks:
              - backup
          - name: cleanup
            tasks:
              - backup-cleanup
```

As you can see above, two different plans are defined: deploy and backup. Both use a serial strategy, and each step executes its own tasks. The plan named ‘backup’ first creates a PVC for backup purposes, then runs the backup job, and finally performs a cleanup task.
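The serial ordering described above can be sketched in a few lines of Python. This is only an illustration of how a fully serial plan is walked, not KUDO's controller logic: the function names, the `run_task` callback and the stop-on-first-failure behaviour are assumptions of the sketch.

```python
# The 'backup' plan from the extract above, written as plain Python data.
PLANS = {
    "backup": {
        "strategy": "serial",
        "phases": [
            {
                "name": "backup",
                "strategy": "serial",
                "steps": [
                    {"name": "pv", "tasks": ["pv"]},
                    {"name": "backup", "tasks": ["backup"]},
                    {"name": "cleanup", "tasks": ["backup-cleanup"]},
                ],
            }
        ],
    }
}


def serial_task_order(plan):
    """Return (phase, step, task) tuples in the order a fully serial plan visits them."""
    order = []
    for phase in plan["phases"]:
        for step in phase["steps"]:
            for task in step["tasks"]:
                order.append((phase["name"], step["name"], task))
    return order


def run_plan(plan, run_task):
    """Run tasks one at a time and stop at the first task that reports failure.

    run_task is a hypothetical callback that returns True on success.
    Returns the list of completed tasks and the failing (phase, step, task), if any.
    """
    completed = []
    for phase_name, step_name, task in serial_task_order(plan):
        if not run_task(task):
            return completed, (phase_name, step_name, task)
        completed.append(task)
    return completed, None


# Pretend every task succeeds and show the resulting order.
completed, failed = run_plan(PLANS["backup"], lambda task: True)
print(completed, failed)
```

With a parallel strategy the inner loops would no longer wait for each item to finish before starting the next one; the sketch deliberately covers only the serial case used in the extract.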

KUDO Repositories

One of the main advantages of using KUDO is that you can deploy prebuilt Operators provided by official maintainers. You can find them on GitHub, including:

- Apache Cassandra

- Kafka

- MySQL

- Elastic

- Redis

- Apache Zookeeper

- Apache Spark

Overview of the Architecture

The architecture diagram below helps explain the concepts described above. We have a CLI that fetches YAML-based Operators from a repository and also manages our workloads on the Kubernetes cluster. The KUDO Controller takes care of all KUDO CRDs, which are tied to objects. The diagram also shows the relationship and inheritance between Operators, OperatorVersions and Instances.

Source: D2iQ

There are some cool features on the roadmap, like Dynamic CRDs, Operator Dependencies and Pipe Tasks, which will make KUDO an even more powerful and useful tool for our Operator workloads.

Stay tuned: a future hands-on article will explain how to install KUDO and how to deploy and manage KUDO workloads.

Try Gcore Cloud

Try for freeRelated articles

Pre-configure your dev environment with Gcore VM init scripts

Provisioning new cloud instances can be repetitive and time-consuming if you’re doing everything manually: installing packages, configuring environments, copying SSH keys, and more. With cloud-init, you can automate these tasks and launch development-ready instances from the start.Gcore Edge Cloud VMs support cloud-init out of the box. With a simple YAML script, you can automatically set up a development-ready instance at boot, whether you’re launching a single machine or spinning up a fleet.In this guide, we’ll walk through how to use cloud-init on Gcore Edge Cloud to:Set a passwordInstall packages and system updatesAdd users and SSH keysMount disks and write filesRegister services or install tooling like Docker or Node.jsLet’s get started.What is cloud-init?cloud-init is a widely used tool for customizing cloud instances during the first boot. It reads user-provided configuration data—usually YAML—and uses it to run commands, install packages, and configure the system. In this article, we will focus on Linux-based virtual machines.How to use cloud-init on GcoreFor Gcore Cloud VMs, cloud-init scripts are added during instance creation using the User data field in the UI or API.Step 1: Create a basic scriptStart with a simple YAML script. Here’s one that updates packages and installs htop:#cloud-config package_update: true packages: - htop Step 2: Launch a new VM with your scriptGo to the Gcore Customer Portal, navigate to VMs, and start creating a new instance (or just click here). When you reach the Additional options section, enable the User data option. Then, paste in your YAML cloud-init script.Once the VM boots, it will automatically run the script. 
This works the same way for all supported Linux distributions available through Gcore.3 real-world examplesLet’s look at three examples of how you can use this.Example 1: Add a password for a specific userThe below script sets the for the default user of the selected operating system:#cloud-config password: <password> chpasswd: {expire: False} ssh_pwauth: True Example 2: Dev environment with Docker and GitThe following script does the following:Installs Docker and GitAdds a new user devuser with sudo privilegesAuthorizes an SSH keyStarts Docker at boot#cloud-config package_update: true packages: - docker.io - git users: - default - name: devuser sudo: ALL=(ALL) NOPASSWD:ALL groups: docker shell: /bin/bash ssh-authorized-keys: - ssh-rsa AAAAB3Nza...your-key-here runcmd: - systemctl enable docker - systemctl start docker Example 3: Install Node.js and clone a repoThis script installs Node.js and clones a GitHub repo to your Gcore VM at launch:#cloud-config packages: - curl runcmd: - curl -fsSL https://deb.nodesource.com/setup_18.x | bash - - apt-get install -y nodejs - git clone https://github.com/example-user/dev-project.git /home/devuser/project Reusing and versioning your scriptsTo avoid reinventing the wheel, keep your cloud-init scripts:In version control (e.g., Git)Templated for different environments (e.g., dev vs staging)Modular so you can reuse base blocks across projectsYou can also use tools like Ansible or Terraform with cloud-init blocks to standardize provisioning across your team or multiple Gcore VM environments.Debugging cloud-initIf your script doesn’t behave as expected, SSH into the instance and check the cloud-init logs:sudo cat /var/log/cloud-init-output.log This file shows each command as it ran and any errors that occurred.Other helpful logs:/var/log/cloud-init.log /var/lib/cloud/instance/user-data.txt Pro tip: Echo commands or write log files in your script to help debug tricky setups—especially useful if you’re automating multi-node 
workflows across Gcore Cloud.Tips and best practicesIndentation matters! YAML is picky. Use spaces, not tabs.Always start the file with #cloud-config.runcmd is for commands that run at the end of boot.Use write_files to write configs, env variables, or secrets.Cloud-init scripts only run on the first boot. To re-run, you’ll need to manually trigger cloud-init or re-create the VM.Automate it all with GcoreIf you're provisioning manually, you're doing it wrong. Cloud-init lets you treat your VM setup as code: portable, repeatable, and testable. Whether you’re spinning up ephemeral dev boxes or preparing staging environments, Gcore’s support for cloud-init means you can automate it all.For more on managing virtual machines with Gcore, check out our product documentation.Explore Gcore VM product docs

How to cut egress costs and speed up delivery using Gcore CDN and Object Storage

If you’re serving static assets (images, videos, scripts, downloads) from object storage, you’re probably paying more than you need to, and your users may be waiting longer than they should.In this guide, we explain how to front your bucket with Gcore CDN to cache static assets, cut egress bandwidth costs, and get faster TTFB globally. We’ll walk through setup (public or private buckets), signed URL support, cache control best practices, debugging tips, and automation with the Gcore API or Terraform.Why bother?Serving directly from object storage hits your origin for every request and racks up egress charges. With a CDN in front, cached files are served from edge—faster for users, and cheaper for you.Lower TTFB, better UXWhen content is cached at the edge, it doesn’t have to travel across the planet to get to your user. Gcore CDN caches your assets at PoPs close to end users, so requests don’t hit origin unless necessary. Once cached, assets are delivered in a few milliseconds.Lower billsMost object storage providers charge $80–$120 per TB in egress fees. By fronting your storage with a CDN, you only pay egress once per edge location—then it’s all cache hits after that. If you’re using Gcore Storage and Gcore CDN, there’s zero egress fee between the two.Caching isn’t the only way you save. Gcore CDN can also compress eligible file types (like HTML, CSS, JavaScript, and JSON) on the fly, further shrinking bandwidth usage and speeding up file delivery—all without any changes to your storage setup.Less origin traffic and less data to transfer means smaller bills. And your storage bucket doesn’t get slammed under load during traffic spikes.Simple scaling, globallyThe CDN takes the hit, not your bucket. That means fewer rate-limit issues, smoother traffic spikes, and more reliable performance globally. 
Gcore CDN spans the globe, so you’re good whether your users are in Tokyo, Toronto, or Tel Aviv.Setup guide: Gcore CDN + Gcore Object StorageLet’s walk through configuring Gcore CDN to cache content from a storage bucket. This works with Gcore Object Storage and other S3-compatible services.Step 1: Prep your bucketPublic? Check files are publicly readable (via ACL or bucket policy).Private? Use Gcore’s AWS Signature V4 support—have your access key, secret, region, and bucket name ready.Gcore Object Storage URL format: https://<bucket-name>.<region>.cloud.gcore.lu/<object> Step 2: Create CDN resource (UI or API)In the Gcore Customer Portal:Go to CDN > Create CDN ResourceChoose "Accelerate and protect static assets"Set a CNAME (e.g. cdn.yoursite.com) if you want to use your domainConfigure origin:Public bucket: Choose None for authPrivate bucket: Choose AWS Signature V4, and enter credentialsChoose HTTPS as the origin protocolGcore will assign a *.gcdn.co domain. If you’re using a custom domain, add a CNAME: cdn.yoursite.com CNAME .gcdn.co Here’s how it works via Terraform: resource "gcore_cdn_resource" "cdn" { cname = "cdn.yoursite.com" origin_group_id = gcore_cdn_origingroup.origin.id origin_protocol = "HTTPS" } resource "gcore_cdn_origingroup" "origin" { name = "my-origin-group" origin { source = "mybucket.eu-west.cloud.gcore.lu" enabled = true } } Step 3: Set caching behaviorSet Cache-Control headers in your object metadata: Cache-Control: public, max-age=2592000 Too messy to handle in storage? Override cache logic in Gcore:Force TTLs by path or extensionIgnore or forward query strings in cache keyStrip cookies (if unnecessary for cache decisions)Pro tip: Use versioned file paths (/img/logo.v3.png) to bust cache safely.Secure access with signed URLsWant your assets to be private, but still edge-cacheable? 
Use Gcore’s Secure Token feature:Enable Secure Token in CDN settingsSet a secret keyGenerate time-limited tokens in your appPython example: import base64, hashlib, time secret = 'your_secret' path = '/videos/demo.mp4' expires = int(time.time()) + 3600 string = f"{expires}{path} {secret}" token = base64.urlsafe_b64encode(hashlib.md5(string.encode()).digest()).decode().strip('=') url = f"https://cdn.yoursite.com{path}?md5={token}&expires={expires}" Signed URLs are verified at the CDN edge. Invalid or expired? Blocked before origin is touched.Optional: Bind the token to an IP to prevent link sharing.Debug and cache tuneUse curl or browser devtools: curl -I https://cdn.yoursite.com/img/logo.png Look for:Cache: HIT or MISSCache-ControlX-Cached-SinceCache not working? Check for the following errors:Origin doesn’t return Cache-ControlCDN override TTL not appliedCache key includes query strings unintentionallyYou can trigger purges from the Gcore Customer Portal or automate them via the API using POST /cdn/purge. Choose one of three ways:Purge all: Clear the entire domain’s cache at once.Purge by URL: Target a specific full path (e.g., /images/logo.png).Purge by pattern: Target a set of files using a wildcard at the end of the pattern (e.g., /videos/*).Monitor and optimize at scaleAfter rollout:Watch origin bandwidth dropCheck hit ratio (aim for >90%)Audit latency (TTFB on HIT vs MISS)Consider logging using Gcore’s CDN logs uploader to analyze cache behavior, top requested paths, or cache churn rates.For maximum savings, combine Gcore Object Storage with Gcore CDN: egress traffic between them is 100% free. That means you can serve cached assets globally without paying a cent in bandwidth fees.Using external storage? You’ll still slash egress costs by caching at the edge and cutting direct origin traffic—but you’ll unlock the biggest savings when you stay inside the Gcore ecosystem.Save money and boost performance with GcoreStill serving assets direct from storage? 
You’re probably wasting money and compromising performance on the table. Front your bucket with Gcore CDN. Set smart cache headers or use overrides. Enable signed URLs if you need control. Monitor cache HITs and purge when needed. Automate the setup with Terraform. Done.Next steps:Create your CDN resourceUse private object storage with Signature V4Secure your CDN with signed URLsCreate a free CDN resource now

Bare metal vs. virtual machines: performance, cost, and use case comparison

Choosing the right type of server infrastructure is critical to how your application performs, scales, and fits your budget. For most workloads, the decision comes down to two core options: bare metal servers and virtual machines (VMs). Both can be deployed in the cloud, but they differ significantly in terms of performance, control, scalability, and cost.In this article, we break down the core differences between bare metal and virtual servers, highlight when to choose each, and explain how Gcore can help you deploy the right infrastructure for your needs. If you want to learn about either BM or VMs in detail, we’ve got articles for those: here’s the one for bare metal, and here’s a deep dive into virtual machines.Bare metal vs. virtual machines at a glanceWhen evaluating whether bare metal or virtual machines are right for your company, consider your specific workload requirements, performance priorities, and business objectives. Here’s a quick breakdown to help you decide what works best for you.FactorBare metal serversVirtual machinesPerformanceDedicated resources; ideal for high-performance workloadsShared resources; suitable for moderate or variable workloadsScalabilityOften requires manual scaling; less flexibleHighly elastic; easy to scale up or downCustomizationFull control over hardware, OS, and configurationLimited by hypervisor and provider’s environmentSecurityIsolated by default; no hypervisor layerShared environment with strong isolation protocolsCostHigher upfront cost; dedicated hardwarePay-as-you-go pricing; cost-effective for flexible workloadsBest forHPC, AI/ML, compliance-heavy workloadsStartups, dev/test, fast-scaling applicationsAll about bare metal serversA bare metal server is a single-tenant physical server rented from a cloud provider. Unlike virtual servers, the hardware is not shared with other users, giving you full access to all resources and deeper control over configurations. 
You get exclusive access and control over the hardware via the cloud provider, which offers the stability and security needed for high-demand applications.The benefits of bare metal serversHere are some of the business advantages of opting for a bare metal server:Maximized performance: Because they are dedicated resources, bare metal servers provide top-tier performance without sharing processing power, memory, or storage with other users. This makes them ideal for resource-intensive applications like high-performance computing (HPC), big data processing, and game hosting.Greater control: Since you have direct access to the hardware, you can customize the server to meet your specific requirements. This is especially important for businesses with complex, specialized needs that require fine-tuned configurations.High security: Bare metal servers offer a higher level of security than their alternatives due to the absence of virtualization. With no shared resources or hypervisor layer, there’s less risk of vulnerabilities that come with multi-tenant environments.Dedicated resources: Because you aren’t sharing the server with other users, all server resources are dedicated to your application so that you consistently get the performance you need.Who should use bare metal servers?Here are examples of instances where bare metal servers are the best option for a business:High-performance computing (HPC)Big data processing and analyticsResource-intensive applications, such as AI/ML workloadsGame and video streaming serversBusinesses requiring enhanced security and complianceAll about virtual machinesA virtual server (or virtual machine) runs on top of a physical server that’s been partitioned by a cloud provider using a hypervisor. This allows multiple VMs to share the same hardware while remaining isolated from each other.Unlike bare metal servers, virtual machines share the underlying hardware with other cloud provider customers. 
That means you’re using (and paying for) part of one server, providing cost efficiency and flexibility.The benefits of virtual machinesHere are some advantages of using a shared virtual machine:Scalability: Virtual machines are ideal for businesses that need to scale quickly and are starting at a small scale. With cloud-based virtualization, you can adjust your server resources (CPU, memory, storage) on demand to match changing workloads.Cost efficiency: You pay only for the resources you use with VMs, making them cost-effective for companies with fluctuating resource needs, as there is no need to pay for unused capacity.Faster deployment: VMs can be provisioned quickly and easily, which makes them ideal for anyone who wants to deploy new services or applications fast.Who should use virtual machines?VMs are a great fit for the following:Web hosting and application hostingDevelopment and testing environmentsRunning multiple apps with varying demandsStartups and growing businesses requiring scalabilityBusinesses seeking cost-effective, flexible solutionsWhich should you choose?There’s no one-size-fits-all answer. Your choice should depend on the needs of your workload:Choose bare metal if you need dedicated performance, low-latency access to hardware, or tighter control over security and compliance.Choose virtual servers if your priority is flexible scaling, faster deployment, and optimized cost.If your application uses GPU-based inference or AI training, check out our dedicated guide to VM vs. BM for AI workloads.Get started with Gcore BM or VMs todayAt Gcore, we provide both bare metal and virtual machine solutions, offering flexibility, performance, and reliability to meet your business needs. Gcore Bare Metal has the power and reliability needed for demanding workloads, while Gcore Virtual Machines offers customizable configurations, free egress traffic, and flexibility.Compare Gcore BM and VM pricing now

Optimize your workload: a guide to selecting the best virtual machine configuration

Virtual machines (VMs) offer the flexibility, scalability, and cost-efficiency that businesses need to optimize workloads. However, choosing the wrong setup can lead to poor performance, wasted resources, and unnecessary costs.In this guide, we’ll walk you through the essential factors to consider when selecting the best virtual machine configuration for your specific workload needs.﹟1 Understand your workload requirementsThe first step in choosing the right virtual machine configuration is understanding the nature of your workload. Workloads can range from light, everyday tasks to resource-intensive applications. When making your decision, consider the following:Compute-intensive workloads: Applications like video rendering, scientific simulations, and data analysis require a higher number of CPU cores. Opt for VMs with multiple processors or CPUs for smoother performance.Memory-intensive workloads: Databases, big data analytics, and high-performance computing (HPC) jobs often need more RAM. Choose a VM configuration that provides sufficient memory to avoid memory bottlenecks.Storage-intensive workloads: If your workload relies heavily on storage, such as file servers or applications requiring frequent read/write operations, prioritize VM configurations that offer high-speed storage options, such as SSDs or NVMe.I/O-intensive workloads: Applications that require frequent network or disk I/O, such as cloud services and distributed applications, benefit from VMs with high-bandwidth and low-latency network interfaces.﹟2 Consider VM size and scalabilityOnce you understand your workload’s requirements, the next step is to choose the right VM size. VM sizes are typically categorized by the amount of CPU, memory, and storage they offer.Start with a baseline: Select a VM configuration that offers a balanced ratio of CPU, RAM, and storage based on your workload type.Scalability: Choose a VM size that allows you to easily scale up or down as your needs change. 
Many cloud providers offer auto-scaling capabilities that adjust your VM’s resources based on real-time demand, providing flexibility and cost savings.Overprovisioning vs. underprovisioning: Avoid overprovisioning (allocating excessive resources) unless your workload demands peak capacity at all times, as this can lead to unnecessary costs. Similarly, underprovisioning can affect performance, so finding the right balance is essential.﹟3 Evaluate CPU and memory considerationsThe central processing unit (CPU) and memory (RAM) are the heart of a virtual machine. The configuration of both plays a significant role in performance. Workloads that need high processing power, such as video encoding, machine learning, or simulations, will benefit from VMs with multiple CPU cores. However, be mindful of CPU architecture—look for VMs that offer the latest processors (e.g., Intel Xeon, AMD EPYC) for better performance per core.It’s also important that the VM has enough memory to avoid paging, which occurs when the system uses disk space as virtual memory, significantly slowing down performance. Consider a configuration with more RAM and support for faster memory types like DDR4 for memory-heavy applications.﹟4 Assess storage performance and capacityStorage performance and capacity can significantly impact the performance of your virtual machine, especially for applications requiring large data volumes. Key considerations include:Disk type: For faster read/write operations, opt for solid-state drives (SSDs) over traditional hard disk drives (HDDs). Some cloud providers also offer NVMe storage, which can provide even greater speed for highly demanding workloads.Disk size: Choose the right size based on the amount of data you need to store and process. Over-allocating storage space might seem like a safe bet, but it can also increase costs unnecessarily. 
You can always resize disks later, so avoid over-allocating them upfront.IOPS and throughput: Some workloads require high input/output operations per second (IOPS). If this is a priority for your workload (e.g., databases), make sure that your VM configuration includes high IOPS storage options.﹟5 Weigh up your network requirementsWhen working with cloud-based VMs, network performance is a critical consideration. High-speed and low-latency networking can make a difference for applications such as online gaming, video conferencing, and real-time analytics.Bandwidth: Check whether the VM configuration offers the necessary bandwidth for your workload. For applications that handle large data transfers, such as cloud backup or file servers, make sure that the network interface provides high throughput.Network latency: Low latency is crucial for applications where real-time performance is key (e.g., trading systems, gaming). Choose VMs with low-latency networking options to minimize delays and improve the user experience.Network isolation and security: Check if your VM configuration provides the necessary network isolation and security features, especially when handling sensitive data or operating in multi-tenant environments.﹟6 Factor in cost considerationsWhile it’s essential that your VM has the right configuration, cost is always an important factor to consider. Cloud providers typically charge based on the resources allocated, so optimizing for cost efficiency can significantly impact your budget.Consider whether a pay-as-you-go or reserved model (which offers discounted rates in exchange for a long-term commitment) fits your usage pattern. The reserved option can provide significant savings if your workload runs continuously. You can also use monitoring tools to track your VM’s performance and resource usage over time. 
This data will help you make informed decisions about scaling up or down so you’re not paying for unused resources.﹟7 Evaluate security featuresSecurity is a primary concern when selecting a VM configuration, especially for workloads handling sensitive data. Consider the following:Built-in security: Look for VMs that offer integrated security features such as DDoS protection, web application firewall (WAF), and encryption.Compliance: Check that the VM configuration meets industry standards and regulations, such as GDPR, ISO 27001, and PCI DSS.Network security: Evaluate the VM's network isolation capabilities and the availability of cloud firewalls to manage incoming and outgoing traffic.﹟8 Consider geographic locationThe geographic location of your VM can impact latency and compliance. Therefore, it’s a good idea to choose VM locations that are geographically close to your end users to minimize latency and improve performance. In addition, it’s essential to select VM locations that comply with local data sovereignty laws and regulations.﹟9 Assess backup and recovery optionsBackup and recovery are critical for maintaining data integrity and availability. Look for VMs that offer automated backup solutions so that data is regularly saved. You should also evaluate disaster recovery capabilities, including the ability to quickly restore data and applications in case of failure.﹟10 Test and iterateFinally, once you've chosen a VM configuration, testing its performance under real-world conditions is essential. Most cloud providers offer performance monitoring tools that allow you to assess how well your VM is meeting your workload requirements.If you notice any performance bottlenecks, be prepared to adjust the configuration. This could involve increasing CPU cores, adding more memory, or upgrading storage. 
Regular testing and fine-tuning means that your VM is always optimized.Choosing a virtual machine that suits your requirementsSelecting the best virtual machine configuration is a key step toward optimizing your workloads efficiently, cost-effectively, and without unnecessary performance bottlenecks. By understanding your workload’s needs, considering factors like CPU, memory, storage, and network performance, and continuously monitoring resource usage, you can make informed decisions that lead to better outcomes and savings.Whether you're running a small application or large-scale enterprise software, the right VM configuration can significantly improve performance and cost. Gcore offers a wide range of virtual machine options that can meet your unique requirements. Our virtual machines are designed to meet diverse workload requirements, providing dedicated vCPUs, high-speed storage, and low-latency networking across 30+ global regions. You can scale compute resources on demand, benefit from free egress traffic, and enjoy flexible pricing models by paying only for the resources in use, maximizing the value of your cloud investments.Contact us to discuss your VM needs

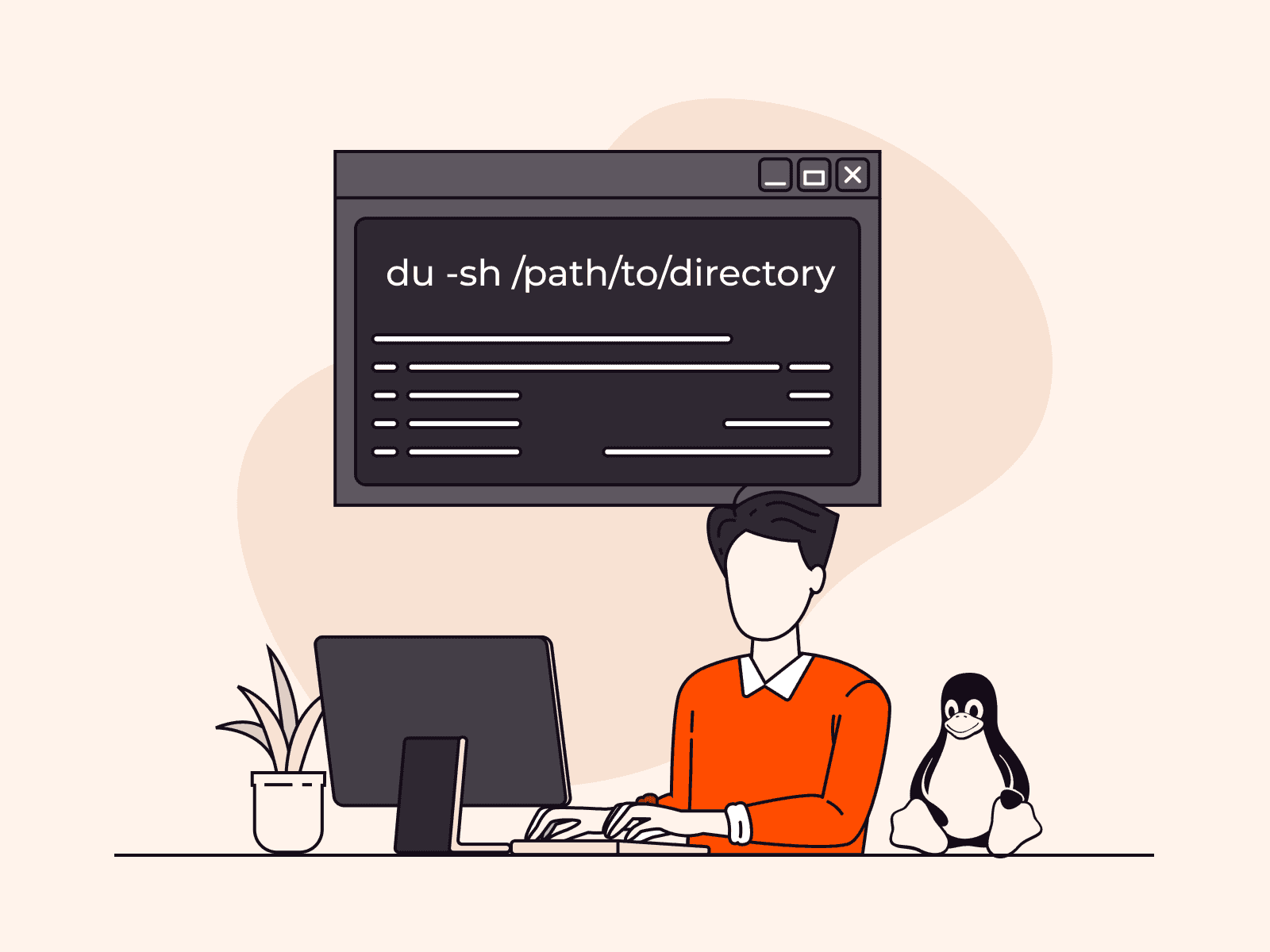

How to get the size of a directory in Linux

Knowing how to check directory size in Linux is critical for managing storage space efficiently, whether you’re assessing the space used by a specific folder or preventing storage issues.

This guide covers commands and tools so you can easily calculate and analyze directory sizes in a Linux environment. We will guide you step-by-step through three methods: du, ncdu, and ls -la. They’re all effective and each offers different benefits.

What is a Linux directory?

A Linux directory is a special type of file that functions as a container for storing files and subdirectories. It plays a key role in organizing the Linux file system by creating a hierarchical structure. This arrangement simplifies file management, making it easier to locate, access, and organize related files.

#1 Get Linux directory size using the du command

The du command reports the disk space used by files and directories, so you can easily determine a directory’s size. The output can be presented in human-readable units such as kilobytes (KB), megabytes (MB), or gigabytes (GB).

Check the size of a specific directory in Linux

To get the size of a specific directory, open your terminal and type the following command:

    du -sh /path/to/directory

In this command, replace /path/to/directory with the actual path of the directory you want to assess. The -s flag stands for “summarize” and displays only the total size of the specified directory. The -h flag makes the output human-readable, showing sizes with a unit suffix instead of a raw block count.

Example: Here, we used the path /home/ubuntu/, where ubuntu is the name of our user’s home directory. The du command returned 32K for this directory, indicating a size of 32 KB.

Check the size of all directories in Linux

To get the size of a directory and each of its subdirectories, use the following command:

    sudo du -h /path/to/directory

Running the command with sudo lets du read directories that your user cannot access. By default, du lists directories only; add the -a flag to list individual files as well.

Example: In this instance, we again used the path /home/ubuntu/. The command printed one line for each subdirectory within that path, followed by the total for /home/ubuntu/ itself.

#2 Get Linux directory size using ncdu

If you’re looking for a more interactive and feature-rich approach to exploring directory sizes, consider using the ncdu (NCurses Disk Usage) tool. ncdu provides a visual representation of disk usage and allows you to navigate through directories, view size details, and identify large files with ease.

To install ncdu on Debian or Ubuntu, use this command:

    sudo apt-get install ncdu

Once installed, run ncdu followed by the path to the directory you want to analyze:

    ncdu /path/to/directory

This will launch the ncdu interface, which shows a breakdown of file and subdirectory sizes. Use the arrow keys to navigate and explore various folders, and press q to exit the tool.

Example: To analyze your home directory, change to it, enter the ncdu command without a path, and press Enter. ncdu then scans the current directory and displays its contents sorted by size.

#3 Get Linux directory size using ls -la

You can alternatively use the ls command to list the files and directories within a directory. The options -l and -a modify the default behavior of ls as follows:

- -l (long listing format)
  - Displays detailed information for each file and directory
  - Shows file permissions, the number of links, owner, group, file size, the timestamp of the last modification, and the file/directory name
- -a (all files)
  - Instructs ls to include all files, including hidden files and directories
  - Hidden files on Linux are those whose names begin with a . (dot)

ls -la lists all files (including hidden ones) in long format, providing detailed information such as permissions, owner, group, size, and last modification time. This command is especially useful when you want to inspect file attributes or see hidden files and directories. Note that for a directory, the size column shows the size of the directory entry itself (often 4096 bytes), not the total size of its contents; use du or ncdu when you need that total.

Example: When you enter the ls -la command and press Enter, the output contains one line per file or directory. Each line includes:

- File type and permissions (e.g., drwxr-xr-x):
  - The first character indicates the file type: - for a regular file, d for a directory, l for a symbolic link
  - The next nine characters are permissions in groups of three (rwx): r = read, w = write, x = execute
  - Permissions are shown for three classes of users: owner, group, and others
- Number of links (e.g., 2):
  - For regular files, this usually indicates the number of hard links
  - For directories, it often reflects subdirectory links (e.g., the . and .. entries)
- Owner and group (e.g., user group)
- File size (e.g., 4096 or 1045 bytes)
- Modification date and time (e.g., Jan 7 09:34)
- File name (e.g., .bashrc, notes.txt, Documents):
  - Files or directories that begin with a dot (.) are hidden (e.g., .bashrc)

Conclusion

That’s it! You can now determine the size of a directory in Linux. Measuring directory sizes is a crucial skill for efficient storage management. Whether you choose the straightforward du command, the visual advantages of the ncdu tool, or the versatility of ls -la, this skill helps you keep your Linux environment organized and efficient.

Looking to deploy Linux in the cloud? With Gcore Edge Cloud, you can choose from a wide range of pre-configured virtual machines suitable for Linux:

- Affordable shared compute resources starting from €3.2 per month
- Deploy across 50+ cloud regions with dedicated servers for low-latency applications
- Secure apps and data with DDoS protection, WAF, and encryption at no additional cost
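Building on the du section above, a common follow-up is finding which subdirectories take up the most space. The sketch below is one way to do that, assuming GNU du and sort (for the -d and -h options); largest_dirs is a hypothetical helper name, not a standard command.

```shell
#!/usr/bin/env bash
# largest_dirs is a hypothetical helper, not a standard command.
# It prints the largest entries one level below a directory, biggest first.
largest_dirs() {
    local dir="${1:-.}"     # directory to inspect (defaults to the current one)
    local count="${2:-10}"  # maximum number of lines to print
    # du -h:    human-readable sizes
    # du -d 1:  report only "$dir" itself and its immediate subdirectories
    # sort -rh: sort human-readable sizes in descending order
    # Errors such as "Permission denied" are discarded.
    du -h -d 1 -- "$dir" 2>/dev/null | sort -rh | head -n "$count"
}

# Example, using the path from this guide:
#   largest_dirs /home/ubuntu 5
```

The output also contains a line for the directory itself, since du reports its total along with the subdirectories. If some directories are not readable by your user, wrapping the du call in sudo, as in the command shown earlier, gives a more complete picture.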

How to Run Hugging Face Spaces on Gcore Inference at the Edge

Running machine learning models, especially large-scale models like GPT-3 or BERT, requires significant computing power and can introduce high latency. This makes real-time applications resource-intensive and challenging to deliver. Running ML models at the edge is a lightweight approach offering significant advantages for latency, privacy, and resource optimization.

Gcore Inference at the Edge makes it simple to deploy and manage custom models efficiently, giving you the ability to deploy and scale your favorite Hugging Face models globally in just a few clicks. In this guide, we’ll walk you through how to use Gcore’s edge AI infrastructure to deploy a Hugging Face Space model, whether you’re developing NLP solutions or computer vision applications.

Step 1: Log In to the Gcore Customer Portal

Go to gcore.com and log in to the Gcore Customer Portal. If you don’t yet have an account, go ahead and create one. It’s free.

Step 2: Go to Inference at the Edge

In the Gcore Customer Portal, click Inference at the Edge from the left navigation menu. Then click Deploy custom model.

Step 3: Choose a Hugging Face Model

Open huggingface.co and browse the available models. Select the model you want to deploy and navigate to the corresponding Hugging Face Space for the model. Click on Files in the Space and locate the Docker option. Copy the Docker image link and startup command from the Hugging Face Space.

Step 4: Deploy the Model on Gcore

Return to the Gcore Customer Portal deployment page and enter the following details:

- Model image URL: registry.hf.space/ethux-mistral-pixtral-demo:latest
- Startup command: python app.py
- Container port: 7860

Configure the pod as follows:

- GPU-optimized: 1x L40S
- vCPUs: 16
- RAM: 232 GiB

For optimal performance, choose any available region for routing placement. Name your deployment and click Deploy.

Step 5: Interact with Your Model

Once the model is up and running, you’ll be provided with an endpoint. You can now interact with the model via this endpoint to test and use your deployed model at the edge.

Powerful, Simple AI Deployment with Gcore

Gcore Inference at the Edge combines the ease of Hugging Face integration with the infrastructure needed for real-time, scalable, and global solutions. By leveraging edge computing, you can optimize model performance and future-proof your business in a world that increasingly demands fast, secure, and localized AI applications. Deploying models to the edge allows you to capitalize on real-time insights and improve customer experiences. Whether you’re leading a team of developers or spearheading a new AI initiative, Gcore Inference at the Edge offers the tools you need.
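As a rough illustration of Step 5, the sketch below checks that a deployed endpoint responds over HTTP. It assumes curl is installed; check_endpoint and the ENDPOINT_URL variable are hypothetical names, and the real endpoint URL is the one shown in the Gcore Customer Portal after deployment. It only reports the HTTP status code, because how you query the model itself depends on the app running in the container.

```shell
#!/usr/bin/env bash
# check_endpoint is a hypothetical helper: it prints the HTTP status code
# returned by the given URL and fails if the request cannot be completed.
check_endpoint() {
    local url="$1"
    # -sS:              no progress meter, but still print errors
    # -o /dev/null:     discard the response body
    # -w '%{http_code}': print only the HTTP status code
    # --max-time 15:    give up after 15 seconds
    curl -sS -o /dev/null -w '%{http_code}\n' --max-time 15 "$url"
}

# Runs only when ENDPOINT_URL is set to the endpoint shown in the portal.
if [ -n "${ENDPOINT_URL:-}" ]; then
    check_endpoint "$ENDPOINT_URL"
fi
```

A 200 status suggests the container is up and serving on the configured port; any other result is a cue to check the deployment logs in the portal.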
