Orchestrating AI: Event-Driven Architectures for Complex AI Workflows

- May 23, 2024

This article was originally published on The New Stack. It’s written by Georgina Tryfou, a machine learning engineer at Gcore and an AI expert with more than 15 years of experience in machine learning and speech recognition.

In the current AI frenzy, more and more companies are implementing complex AI workflows to enhance their offerings with AI capabilities. In this article, I’ll share a behind-the-scenes look at how we use event-driven architecture (EDA) for complex AI workflows at Gcore. I’ll walk you through the initial challenges, the architectural decisions we made, and the outcomes of adopting EDA in a dynamic, real-world scenario: AI subtitle generation for video content. Along the way, I’ll show how EDA improves system responsiveness, scalability, and flexibility when managing AI-driven tasks.

Why Event-Driven Architecture Matters for AI

Event-driven architecture (EDA) is a design pattern centered on producing, detecting, consuming, and reacting to events rather than executing static, predefined operations. An event is any significant change of state or update that occurs within the system. EDA allows different parts of a system to communicate and operate independently, driven by these events, which can be anything from a user action to a completed process.
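To make the definition concrete, here is a minimal, in-process publish/subscribe sketch in Python, the language of the Django and Celery stack described later. It is illustrative only: the `EventBus` class and the `video.uploaded` event name are hypothetical, and a production system would place a broker such as Redis between producers and consumers rather than calling handlers in the same process.

```python
from collections import defaultdict
from typing import Any, Callable

Event = dict[str, Any]
Handler = Callable[[Event], None]


class EventBus:
    """Minimal in-process event bus: producers publish, subscribers react."""

    def __init__(self) -> None:
        self._handlers: defaultdict[str, list[Handler]] = defaultdict(list)

    def subscribe(self, event_type: str, handler: Handler) -> None:
        """Register a handler to run whenever `event_type` is published."""
        self._handlers[event_type].append(handler)

    def publish(self, event_type: str, payload: Event) -> int:
        """Deliver the event to every subscriber; return how many handled it."""
        # The producer does not know who, if anyone, consumes the event.
        handlers = self._handlers.get(event_type, [])
        for handler in handlers:
            handler(payload)
        return len(handlers)


# Usage: a hypothetical "video.uploaded" event triggers two independent reactions.
bus = EventBus()
bus.subscribe("video.uploaded", lambda e: print("start transcription for", e["video_id"]))
bus.subscribe("video.uploaded", lambda e: print("start moderation for", e["video_id"]))
bus.publish("video.uploaded", {"video_id": "v42"})
```

Adding a third reaction, such as thumbnail generation, means adding a subscriber; the code that publishes `video.uploaded` does not change.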

The adoption of EDA in AI workflow management marks a significant evolution from traditional architectures, such as monolithic, service-oriented, or polling-based designs. Its principles of asynchronous communication, decoupling, and dynamic scalability align closely with the demands of modern AI applications and bring three key benefits:

- The architecture’s modularity makes it easier to scale specific components independently, such as scaling up language processing during high-demand periods in customer service applications without affecting other parts of the system.

- EDA’s modular design simplifies the process of updating or replacing models with newer versions, as seen in health tech environments where predictive algorithms are frequently refined and deployed to keep pace with medical advancements or newer data.

- The flexible nature of EDA allows for the seamless integration of various models to realize a complex AI workflow, such as combining image recognition with predictive maintenance in manufacturing, enhancing system robustness and operational efficiency.

These benefits, observed across different sectors, improve not only the scalability and responsiveness of AI systems but also their robustness and adaptability, making EDA indispensable for managing complex, multi-model AI workflows across industries and use cases.

Implementing Event-Driven Architecture in AI: A Practical Case Study

At Gcore, we’ve implemented EDA within the AI features of Gcore Video Streaming. Here, I’ll show you how we apply EDA to AI subtitle generation for video.

This project began with the goal of improving the efficiency, scalability, and reliability of generating subtitles in multiple languages from raw video content, and of reducing its latency. The process involves several complex steps:

- Video decompression: The video file is either decompressed or transcoded into a format suitable for processing.

- Speech detection: Segments of the video where speech occurs are identified and distinguished from background noise or silence using specialized ML models.

- Speech-to-text conversion (transcription): The detected speech is converted into text. This step requires inference using complex speech recognition models capable of handling a range of languages, accents, and dialects.

- Text post-processing: Transcription errors, punctuation, and grammar are corrected. The text is formatted to match the video’s timing; for example, it could be broken into timed subtitles.

- Translation (optional): If subtitles are required in multiple languages, the transcribed text may be translated into one or more target languages, again via inference using specialized machine-translation models.

- Subtitle synchronization: Subtitle display is timed to match the speech in the video, ensuring that the subtitles appear on screen precisely when the corresponding speech is heard.
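The last two steps turn timed text into subtitles that a player can display. The article does not say which subtitle format Gcore emits, so the sketch below assumes the common SubRip (SRT) format, in which each cue is a sequence number, a `HH:MM:SS,mmm --> HH:MM:SS,mmm` time range, and the text. The `Segment` type is hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    """A piece of transcribed (or translated) text with its timing in seconds."""
    start: float
    end: float
    text: str


def srt_timestamp(seconds: float) -> str:
    """Format a time in seconds as an SRT timestamp: HH:MM:SS,mmm."""
    total_ms = round(seconds * 1000)
    hours, rem = divmod(total_ms, 3_600_000)
    minutes, rem = divmod(rem, 60_000)
    secs, ms = divmod(rem, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d},{ms:03d}"


def to_srt(segments: list[Segment]) -> str:
    """Render timed segments as numbered SRT cues separated by blank lines."""
    cues = [
        f"{i}\n{srt_timestamp(s.start)} --> {srt_timestamp(s.end)}\n{s.text}\n"
        for i, s in enumerate(segments, start=1)
    ]
    return "\n".join(cues)


print(to_srt([Segment(0.0, 1.25, "Hello"), Segment(1.5, 3.0, "world")]))
```

Because timing travels with the text in each `Segment`, translation can replace `text` without touching `start` and `end`, and synchronization stays a pure formatting step.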

Each of these steps requires specialized AI models or algorithms and may require data processing in real time or near-real time, especially in live-streaming scenarios. The result? Serious complexity.

The complexity arises not only from the technical challenges associated with each task, but also from the need to efficiently manage the flow of data between steps, handle errors or exceptions, and scale resources dynamically based on demand.

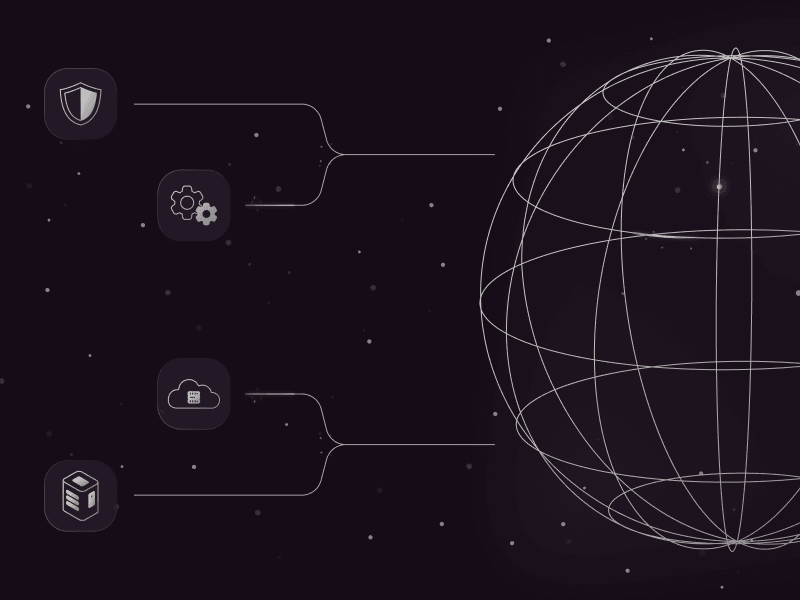

To orchestrate such sophisticated and demanding AI workflows, we designed an AI system around a well-defined EDA. The architecture of this platform, outlined in the accompanying diagram, covers all stages of AI-driven tasks, facilitates communication between components, and ensures that each task can be scaled dynamically and handled autonomously.

Four core components underlie the Gcore Streaming AI platform backend. All these components are versatile and essential to a wide range of AI applications.

Django: API Service

At the front of the architecture sits the API service, built on the robust Django framework. It is the primary interface for user interactions and handles incoming requests for a range of services, including transcription and content moderation tasks such as nudity detection. This layer validates and parses incoming requests and triggers a cascade of subsequent tasks in the workflow, as shown on the far left of the diagram above, where a user sends a transcription request to the API service.
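The validate-then-enqueue pattern can be sketched without Django. In the real service, this logic would sit in a Django view and the enqueue step would be a Celery call such as `some_task.delay(...)`; here a stdlib `queue.Queue` stands in for the broker, and the request fields (`task`, `video_url`) and task names are hypothetical.

```python
import json
import queue
import uuid

# Hypothetical task names accepted by the API.
SUPPORTED_TASKS = {"transcription", "nudity_detection"}

# Stand-in for the message broker; the real service would call a Celery task.
task_queue: "queue.Queue[dict]" = queue.Queue()


class ValidationError(ValueError):
    """Raised when a request body is malformed or asks for an unsupported task."""


def handle_request(raw_body: bytes) -> dict:
    """Validate a JSON request, enqueue a job, and acknowledge immediately."""
    try:
        body = json.loads(raw_body)
    except json.JSONDecodeError as exc:
        raise ValidationError(f"invalid JSON: {exc}") from exc
    if not isinstance(body, dict):
        raise ValidationError("request body must be a JSON object")

    task = body.get("task")
    if not isinstance(task, str) or task not in SUPPORTED_TASKS:
        raise ValidationError(f"unsupported task: {task!r}")
    video_url = body.get("video_url")
    if not isinstance(video_url, str) or not video_url.startswith(("http://", "https://")):
        raise ValidationError("video_url must be an http(s) URL")

    job = {"job_id": str(uuid.uuid4()), "task": task, "video_url": video_url}
    # Enqueuing the job is the event that starts the downstream workflow;
    # the API does not wait for inference to finish.
    task_queue.put(job)
    return {"job_id": job["job_id"], "status": "queued"}


ack = handle_request(b'{"task": "transcription", "video_url": "https://example.com/video.mp4"}')
print(ack["status"], task_queue.qsize())
```

Returning a job ID right away keeps the API responsive; clients can poll for, or be notified of, the result once the workflow completes.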

Celery: Processing Engine and Task Orchestration

Deeper in the backend, we use Celery, an asynchronous task queue that acts as a robust background processing engine. Celery manages AI processes, such as transcribing audio to text or analyzing content for nudity, as well as standalone processes, such as synchronizing transcribed content into subtitles. Together with Redis, which acts as the message broker, Celery orchestrates these tasks and ensures that the start and completion of each task are driven by predefined events.

Celery’s workflow primitives further strengthen its ability to handle complex AI workflows: chains run tasks in sequence, groups run tasks in parallel, and chords run a group and then pass all of its results to a single callback. These tools let us decompose high-level, complex AI tasks into granular subtasks, manage their dependencies, and aggregate their results.
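In Celery, these primitives look like `chain(a.s(), b.s())`, `group(t.s(x) for x in items)`, and `chord(header)(callback.s())`, with each task serialized to the broker and run on remote workers. To show their semantics without a running broker, the sketch below reimplements them as simplified, in-process stand-ins on top of `concurrent.futures`; the function names mirror Celery’s, but this is not Celery’s API.

```python
from concurrent.futures import ThreadPoolExecutor
from functools import reduce
from typing import Any, Callable

Task = Callable[[Any], Any]


def chain(*tasks: Task) -> Task:
    """Sequential composition: each task receives the previous task's result."""
    return lambda value: reduce(lambda acc, task: task(acc), tasks, value)


def group(tasks: list[Task], inputs: list[Any], max_workers: int = 4) -> list[Any]:
    """Run independent tasks in parallel; results come back in input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = [pool.submit(task, arg) for task, arg in zip(tasks, inputs)]
        return [f.result() for f in futures]


def chord(tasks: list[Task], inputs: list[Any], callback: Callable[[list[Any]], Any]) -> Any:
    """Run a group, then hand the full list of its results to one callback."""
    return callback(group(tasks, inputs))


# Usage: transcribe segments in parallel, then merge them in order (a chord).
def fake_transcribe(segment: str) -> str:  # hypothetical stand-in for a model call
    return f"text({segment})"


transcript = chord([fake_transcribe] * 3, ["0-30s", "30-60s", "60-90s"], " ".join)
cleaned = chain(str.strip, str.capitalize)("  hello world ")
print(transcript)
print(cleaned)
```

The chord is the piece that maps most directly onto subtitle generation: many transcription subtasks fan out, and one merge step waits for all of them.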

Redis: Broker and Mediator Pattern

Redis plays a crucial role in our system as the broker and mediator, managing the distribution and coordination of tasks across the backend. Its fast, in-memory data structure store lets it handle the task queue efficiently. Within our architecture, task signatures and chains act as the mediators that control the flow and logic of task execution, based on event signals indicating task completion.

Redis’ ability to process these signals quickly is vital for maintaining a dynamic and responsive workflow, as shown in the diagram above: tasks are received by the Redis broker, directed to the appropriate processing containers, and their results are collected post-inference for seamless task transitions and data integrity.

AI Celery Workers: Dedicated AI Task Handling

Each AI Celery worker is dedicated to a specific AI task, deploying and managing AI models such as Whisper for transcription and Pyannote for voice activity detection (VAD). These workers operate in isolated environments so that each task is processed in a controlled and secure manner, minimizing the risk of interference between tasks. This setup improves the scalability of our system by allowing each worker to scale independently based on task demand, while ensuring reliable and efficient AI model execution.
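The routing and independent scaling described in the last two sections can be sketched in-process with one worker pool per task type. In Celery, the usual equivalent is a `task_routes` setting that sends each task to its own queue, plus separate workers started with something like `celery -A proj worker -Q transcription --concurrency=8` (`proj` is a placeholder). The `Router` class and the pool sizes below are hypothetical.

```python
from concurrent.futures import Future, ThreadPoolExecutor
from typing import Any, Callable


class Router:
    """Send each task type to its own worker pool so pools scale independently."""

    def __init__(self, pool_sizes: dict[str, int]) -> None:
        self._pools = {
            name: ThreadPoolExecutor(max_workers=size, thread_name_prefix=name)
            for name, size in pool_sizes.items()
        }

    def submit(self, task_type: str, fn: Callable[..., Any], *args: Any) -> Future:
        """Queue `fn(*args)` on the pool dedicated to `task_type`."""
        pool = self._pools.get(task_type)
        if pool is None:
            raise ValueError(f"no worker pool for task type {task_type!r}")
        return pool.submit(fn, *args)

    def shutdown(self) -> None:
        """Wait for queued work to finish, then stop every pool."""
        for pool in self._pools.values():
            pool.shutdown(wait=True)


# Hypothetical sizing: one VAD request fans out into many transcription tasks,
# so the transcription pool gets far more workers than the VAD pool.
router = Router({"vad": 1, "transcription": 8})
vad_result = router.submit("vad", lambda clip: [f"{clip}#{i}" for i in range(3)], "clip")
segments = vad_result.result()
texts = [router.submit("transcription", str.upper, s) for s in segments]
print([f.result() for f in texts])
router.shutdown()
```

A slow or saturated transcription pool cannot starve the VAD pool, which is the isolation property the section describes.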

System Requirements Unveiled: Scaling, Reliability, and Latency

The Gcore backend I just described produces three major benefits that are particularly important for AI workflows: scaling, reliability, and latency reduction.

Scaling

The platform scales to handle varying demand by dynamically allocating cloud resources and leveraging GPU acceleration for intensive ML tasks. This results in seamless scaling, avoiding the performance bottlenecks and high costs typical of traditional systems. By adapting computing power in real time, our system efficiently manages workloads during both peak and off-peak times without compromising performance.
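The article does not spell out the scaling policy, so as one hypothetical illustration, a queue-depth rule computes the target worker count as ceil(queue depth / tasks per worker), clamped to a configured range. This is similar in spirit to the ratio-based rule of the Kubernetes Horizontal Pod Autoscaler, but every parameter here is an assumption.

```python
import math


def desired_workers(queue_depth: int, tasks_per_worker: int,
                    min_workers: int, max_workers: int) -> int:
    """Target worker count: ceil(queue_depth / tasks_per_worker), clamped to a range."""
    if tasks_per_worker <= 0:
        raise ValueError("tasks_per_worker must be positive")
    if min_workers > max_workers:
        raise ValueError("min_workers must not exceed max_workers")
    needed = math.ceil(queue_depth / tasks_per_worker)
    return max(min_workers, min(max_workers, needed))


# Hypothetical numbers: 100 queued Whisper tasks, each worker handles ~10 at once.
print(desired_workers(queue_depth=100, tasks_per_worker=10, min_workers=1, max_workers=8))
```

The lower bound keeps a warm worker available during off-peak periods, and the upper bound caps GPU spend during spikes.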

Reliability

AI features within Gcore Video Streaming are designed for high reliability, with robust fault tolerance and sophisticated error handling. Strategies such as data replication and automatic recovery mechanisms keep the system running even during failures. In video transcription, for example, if a segment of audio is corrupted, our system can either skip that segment or retry processing it, rather than wasting resources by discarding or retrying the whole audio track.
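The skip-or-retry behavior can be sketched as per-segment retries with exponential backoff, where a segment that still fails after the last attempt is recorded and skipped instead of failing the whole track. In Celery this is often configured on the task itself with options such as `autoretry_for`, `retry_backoff`, and `max_retries`; the `process_segments` helper below is a hypothetical stdlib version.

```python
import time
from typing import Any, Callable, Iterable


def process_segments(
    segments: Iterable[Any],
    process: Callable[[Any], Any],
    max_attempts: int = 3,
    base_delay: float = 0.5,
) -> tuple[list[Any], list[int]]:
    """Process each segment independently, retrying failures with backoff.

    A segment that still fails after `max_attempts` is skipped (its result is
    None) instead of failing or restarting the whole audio track.
    """
    results: list[Any] = []
    skipped: list[int] = []
    for index, segment in enumerate(segments):
        for attempt in range(1, max_attempts + 1):
            try:
                results.append(process(segment))
                break
            except Exception:
                if attempt == max_attempts:
                    skipped.append(index)
                    results.append(None)  # keep results aligned with the input
                else:
                    time.sleep(base_delay * 2 ** (attempt - 1))  # 0.5 s, 1 s, ...
    return results, skipped
```

Returning the skipped indices lets a later step mark gaps in the subtitles or schedule those segments for another pass.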

Latency Reduction

We reduce the latency of AI components by minimizing idle time and speeding up transitions between tasks. We employ three key strategies:

- Segmenting large tasks into smaller parts for parallel processing across multiple GPUs

- Optimizing workflows for immediate task transitions

- Smartly scheduling resources to keep computational assets fully engaged

In video transcription, rather than processing the entire video at once, we break it into segments that are processed concurrently. This approach shortens transcription times and uses resources efficiently, boosting overall system responsiveness.
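A minimal way to cut a long recording into independently processable pieces is a fixed-length window with a small overlap, so that words at a boundary are not lost. This is a simplified stand-in: the pipeline described above uses speech detection to find segments, and the window length and overlap here are hypothetical. Each resulting window could become one task in a parallel group.

```python
def make_segments(duration: float, segment_len: float,
                  overlap: float = 0.0) -> list[tuple[float, float]]:
    """Split [0, duration) into windows of `segment_len` seconds.

    Consecutive windows share `overlap` seconds; the last window is cut
    short at `duration`.
    """
    if segment_len <= 0 or not 0 <= overlap < segment_len:
        raise ValueError("need segment_len > 0 and 0 <= overlap < segment_len")
    step = segment_len - overlap
    windows: list[tuple[float, float]] = []
    start = 0.0
    while start < duration:
        windows.append((start, min(start + segment_len, duration)))
        if start + segment_len >= duration:
            break  # this window already reaches the end of the audio
        start += step
    return windows


# Hypothetical parameters: 30-second windows with 2 seconds of overlap.
print(make_segments(65, 30, overlap=2))
```

Shorter windows mean more parallelism but more boundaries to reconcile when the transcripts are merged.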

Concrete Benefits: Our EDA Success Story

Adopting this system revolutionized the management of complex AI workflows within the Gcore Video Streaming backend. Specifically, the EDA enabled us to reduce analysis time, parallelize AI tasks, scale AI workers independently, and ensure system flexibility.

- Reduce analysis time: By utilizing EDA, we dramatically decreased the time required to analyze a single video with a set of pre-trained models. This means faster processing of videos for tasks like subtitle generation and content moderation.

- Parallelize AI tasks: Parallel processing of AI tasks means breaking down complex processes into smaller, manageable tasks that can be executed concurrently. This approach sped up the overall process and optimized the use of computational resources.

- Scale AI workers independently: Understanding the diverse demands of different AI tasks, our architecture scales AI workers based on the specific requirements of each task. For instance, a single request for subtitle generation might trigger one task for Pyannote (for voice activity detection) and potentially 100 tasks for Whisper (for speech-to-text), with only the latter requiring dynamic scaling due to higher demand.

- Ensure system flexibility: We aimed to create a highly flexible system capable of quickly adapting to any new AI request. This required the ability to load models in an ad-hoc manner, ensuring our system could immediately respond to and serve new or evolving AI demands without significant reconfiguration.
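On-demand model loading can be sketched as a registry of loader functions plus a small per-process cache, so a worker loads a model the first time it is requested and reuses it afterward. The registry, the `echo-asr` toy model, and the cache size of four are hypothetical; a real loader would fetch Whisper or Pyannote weights from storage.

```python
from functools import lru_cache
from typing import Any, Callable

# Hypothetical registry: model name -> zero-argument loader function.
MODEL_LOADERS: dict[str, Callable[[], Any]] = {}


def register_model(name: str) -> Callable[[Callable[[], Any]], Callable[[], Any]]:
    """Decorator that registers a loader without loading the model yet."""
    def decorator(loader: Callable[[], Any]) -> Callable[[], Any]:
        MODEL_LOADERS[name] = loader
        return loader
    return decorator


@lru_cache(maxsize=4)  # assumed limit: at most four models resident per worker
def get_model(name: str) -> Any:
    """Load a model the first time it is requested, then reuse the cached instance."""
    loader = MODEL_LOADERS.get(name)
    if loader is None:
        raise ValueError(f"unknown model {name!r}")
    return loader()


@register_model("echo-asr")  # hypothetical toy model for illustration
def load_echo_asr() -> Callable[[str], str]:
    # A real loader would fetch weights (e.g. a Whisper checkpoint) from storage.
    return lambda audio_path: f"transcript of {audio_path}"


model = get_model("echo-asr")  # loaded now, on first use
print(model("segment_001.wav"))
```

Because `lru_cache` evicts the least recently used entry, requesting a fifth distinct model drops the oldest one from the cache; a real worker would also need to make sure the evicted model’s GPU memory is actually released.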

We Made These Mistakes So You Don’t Have To

Sharing is caring: Here are three things to keep in mind when setting up your own EDA for AI workflows to get the best results right away.

- Avoid common pitfalls: Design the system with fault tolerance in mind from the outset. Anticipate potential failures in individual components and ensure that the architecture can gracefully handle these incidents without disrupting the overall workflow. Effective error handling and retry mechanisms are essential.

- Choose the correct topology: A mediator topology can significantly simplify the implementation of business logic and improve the modularity and reusability of AI models. We initially used a broker topology but ran into limitations in managing complex AI tasks because of its linear communication model. To address these challenges and improve our system’s scalability and modularity, we transitioned to a mediator topology. This change introduced a central mediator that manages the AI business logic and orchestrates events, allowing components to operate independently and more efficiently. The shift streamlined the development process and significantly improved the system’s adaptability and robustness.

- Plan for rapid integration: Flexibility is key in any architecture designed for AI workflows. Make it quick to add new models and integrate them into end services. This is essential in such a fast-evolving field, where the ability to adopt and deploy new models swiftly can provide a significant competitive advantage.
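The difference between the two topologies is where the workflow order lives. In a broker topology, each component reacts to events and emits new ones, so the order is implicit in who listens to what; in a mediator topology, one component owns the sequence. The sketch below shows a minimal, synchronous mediator. `WorkflowMediator` and the step names are hypothetical, and in the stack described earlier, Celery signatures and chains fill this role.

```python
from typing import Any, Callable

Step = tuple[str, Callable[[Any], Any]]


class WorkflowMediator:
    """Central mediator: owns the step order and reacts to completion events."""

    def __init__(self, steps: list[Step]) -> None:
        if not steps:
            raise ValueError("a workflow needs at least one step")
        self.steps = steps
        self.log: list[str] = []

    def start(self, job_id: str, payload: Any) -> Any:
        return self._dispatch(job_id, 0, payload)

    def _dispatch(self, job_id: str, index: int, payload: Any) -> Any:
        _, worker = self.steps[index]
        # A real system would enqueue a message for a worker here; this sketch
        # calls the worker directly and treats its return as the completion event.
        return self._on_completed(job_id, index, worker(payload))

    def _on_completed(self, job_id: str, index: int, result: Any) -> Any:
        self.log.append(f"{job_id}:{self.steps[index][0]}:done")
        if index + 1 == len(self.steps):
            return result  # workflow finished
        return self._dispatch(job_id, index + 1, result)


# Workers know nothing about each other; only the mediator knows the order.
mediator = WorkflowMediator([
    ("detect_speech", lambda clip: [f"{clip}#1", f"{clip}#2"]),  # hypothetical VAD
    ("transcribe", lambda segs: [s.upper() for s in segs]),       # hypothetical ASR
    ("merge", " ".join),
])
print(mediator.start("job-1", "clip"))
```

Inserting an optional translation step means editing the mediator’s step list only; none of the worker functions change.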

Future Directions in Event-Driven AI Architectures

We’re always looking to the future and innovating our EDA AI systems at Gcore. Two future directions look particularly promising.

Continuous Learning and Adaptation

Incorporating mechanisms for continuous learning and model adaptation requires periodically updating models with new data and, less obviously, dynamically adjusting workflows and processes based on real-time performance metrics and feedback loops. As AI models continue to grow in complexity and capability, developing robust systems for continuous evaluation and deployment becomes critical. This includes automated performance monitoring, version control, and seamless deployment of updated models without disrupting service.

Embracing LLMs and GAI

Our architecture also needs to adapt as AI itself changes. The rise of large language models (LLMs) and generative AI (GAI) might suggest that traditional AI inference workflows could become obsolete, but our proposed architecture still supports critical areas of AI deployment, such as continuous model learning and evaluation. The flexibility of our event-driven system makes it well suited to integrating LLMs for enhanced decision-making and to adapting workflows to the capabilities of GAI, where multiple AI models will increasingly be replaced by a single, more powerful one.

Conclusion

We’ve found that adopting an EDA for workflow processing offers significant benefits for scalability, reliability, and efficiency in managing complex AI systems in cloud and streaming environments. This approach addresses critical challenges, including dynamic scaling of large ML models, system robustness, and latency reduction. EDA is already proving itself essential for the evolution of scalable and efficient AI systems.

To experience the end product for yourself, check out Gcore Video Streaming and its impressive AI features, including transcription, translation, content moderation, and object recognition.
