What Does Blacklisted IP Mean? | Procedure to Remove IP From Blacklist

- By Gcore

- June 6, 2023


Have you recently noticed a sudden drop in website traffic or email deliverability? One possible reason is that your mail server IP or domain has been added to a blacklist. But you aren’t a hacker or scammer! So what does being blacklisted mean in your case? Why has it happened to you, and what can you do to reverse it?

Blacklists are a cybersecurity tool that helps protect end users from threats such as ransomware. According to Statista, spam and phishing emails are the most common cause of ransomware infections. So if you are on a blacklist, your emails have been flagged as a possible source of spam or phishing. Unfortunately, blacklists don’t always get it right, and sometimes they flag senders incorrectly.

Being added to a blacklist doesn’t necessarily mean you have done anything unacceptable or unethical. Your mail server IP or domain can be blacklisted because someone used your credentials for online fraud or spam. Alternatively, you may have unknowingly behaved in a way that systems mark as suspicious. This can happen to anyone running email campaigns, for innocent reasons such as overusing spam-trigger words or forgetting to update their email authentication records (DMARC, SPF, and DKIM).

But don’t worry; you can recover your IP and domain. In this article, we will explain the causes of blacklisting in depth, share how to recover from blacklisting, and help you prevent being blacklisted in the future.

What Does Blacklisted Mean?

A blacklisted IP or domain is one that has been associated with suspicious activity on the web. When you get blacklisted, your website traffic may drop sharply, even to zero, and your prospects or customers may stop receiving your emails. This has major business repercussions and is a huge headache.

Your mail server and website are interconnected when it comes to blacklisting: if one gets blacklisted, the other might be too. Similarly, if a single mail sender using your IP is blacklisted, the other mail servers associated with your domain may also be affected.

How Does an IP or Domain Get Blacklisted?

Blacklists come in two types: public and private.

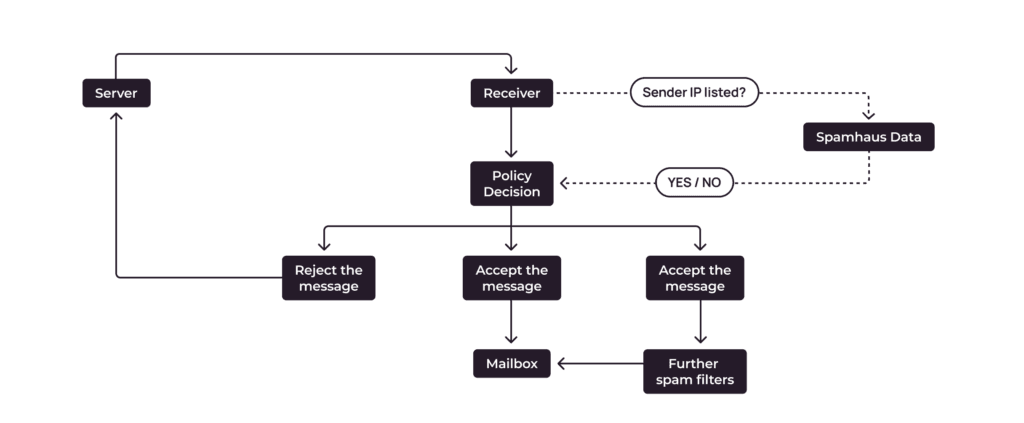

Public blacklists are usually non-commercial projects that provide databases of suspicious IPs or domains to corporations and security vendors. Well-known examples include Spamhaus, Barracuda, Project Honey Pot, and SpamCop. This is the most common type of blacklist.

Private blacklists are maintained by a single organization for its own services and users, and that organization controls who can access them. For example, Microsoft and your ISP both maintain blacklists.

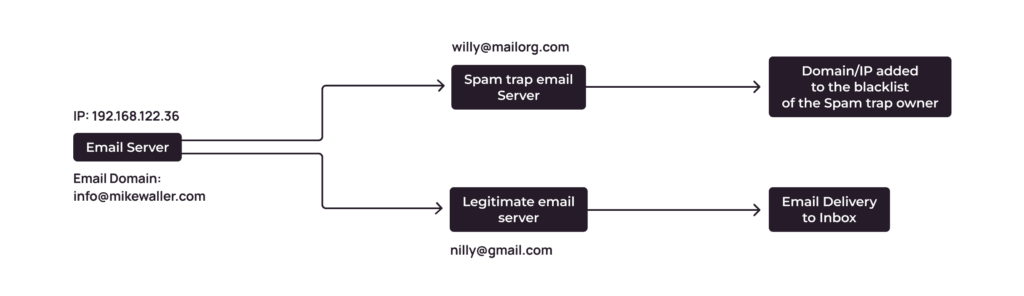

Because most blacklists are public projects run by nonprofit organizations, volunteer investigators, subscribers, or email providers can report you. Spam traps are another way that senders with poor email hygiene can be blacklisted without realizing it: these are addresses that never signed up to receive mail, so any message they receive suggests the sender’s list was bought, scraped, or never cleaned.

Once you are blacklisted, organizations that use that list may reject your emails or route them to spam.
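Many public blacklists, including Spamhaus, SpamCop, and Barracuda, are published as DNS-based blocklists (DNSBLs), the convention described in RFC 5782. A receiving mail server checks a connecting IPv4 address by reversing its octets, appending the list’s DNS zone, and looking up an A record: an answer in 127.0.0.0/8 means the address is listed, while "no such name" (NXDOMAIN) means it isn’t. The sketch below reproduces that lookup with Python’s standard library. The zone name is only an example, and the treatment of 127.255.255.x answers as errors follows Spamhaus’s convention as we understand it; other operators may differ, and some restrict automated queries, so check each list’s usage terms first.

```python
import ipaddress
import socket

LISTED_RANGE = ipaddress.IPv4Network("127.0.0.0/8")
# Spamhaus, for one, answers in 127.255.255.0/24 to signal query problems
# (e.g. queries sent through a large public resolver), not listings.
ERROR_RANGE = ipaddress.IPv4Network("127.255.255.0/24")


def dnsbl_query_name(ip: str, zone: str) -> str:
    """Reverse the IPv4 octets and append the blacklist's DNS zone."""
    octets = str(ipaddress.IPv4Address(ip)).split(".")  # raises ValueError if not IPv4
    return ".".join(reversed(octets)) + "." + zone


def dnsbl_lookup(ip: str, zone: str) -> list[str]:
    """Return the 127.x.x.x codes the list answers with; [] means no A record was found."""
    try:
        _, _, answers = socket.gethostbyname_ex(dnsbl_query_name(ip, zone))
    except (socket.gaierror, socket.herror):
        # NXDOMAIN normally means "not listed", but any failed lookup also lands here.
        return []
    codes = [ipaddress.IPv4Address(a) for a in answers]
    if any(code in ERROR_RANGE for code in codes):
        raise RuntimeError(f"{zone} returned an error code, not a listing: {answers}")
    return [str(code) for code in sorted(codes) if code in LISTED_RANGE]
```

For example, `dnsbl_query_name("192.0.2.1", "zen.spamhaus.org")` builds `1.2.0.192.zen.spamhaus.org`, and a non-empty result from `dnsbl_lookup` means that list flags the address.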

Check If You Are Blacklisted

Problems with website traffic and email deliverability can arise for many reasons, not only because you are on a blacklist. So first of all, you need to check if you have actually been blacklisted, or if something else is causing your problem.

We at Gcore offer step-by-step instructions on how to check your email server IP against 100 public blacklists. If you don’t know your mail server IP, find that out first.
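If you know your mail server’s hostname (for example, the host named in your domain’s MX record, which `dig MX example.com` or `nslookup -type=mx example.com` will show), resolving it gives you the IPv4 address(es) to check. A minimal sketch using only the standard library; `mail.example.com` is a placeholder. Keep in mind that a blacklist sees the address your server actually connects from, which can differ from what the hostname resolves to if mail leaves through a relay or NAT.

```python
import socket


def mail_server_ipv4s(hostname: str) -> list[str]:
    """Resolve a mail server hostname to its unique IPv4 addresses, sorted."""
    # Port 25 (SMTP) and SOCK_STREAM keep the results to one entry per address.
    infos = socket.getaddrinfo(hostname, 25, socket.AF_INET, socket.SOCK_STREAM)
    # Each entry is (family, type, proto, canonname, sockaddr); sockaddr is (ip, port).
    return sorted({info[4][0] for info in infos})


# Example (placeholder hostname; replace with your own mail server):
# print(mail_server_ipv4s("mail.example.com"))
```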

Common Blacklisting Reasons

Each blacklist operator has its own criteria for listing IPs and domains. Most don’t publish them in detail, but some reasons are common to many of them. Let’s take a look.

Misconfigured Email Server

SPF, DKIM, and DMARC are email authentication protocols, published as DNS records, that help you appear credible to recipient email providers and protect you from spoofing. Spoofing is when someone illegitimately sends emails on your behalf (e.g., by putting your domain in the sender address) to look credible to email providers, and then sends spam or other fraudulent content.

If you haven’t set up SPF, DKIM, and DMARC records, or forgot to update them when moving to a new email vendor, you are at risk of getting blacklisted.
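Before and after a migration, it helps to sanity-check the TXT records you actually published. The sketch below takes SPF and DMARC record strings (copied, for example, from the output of `dig TXT example.com` and `dig TXT _dmarc.example.com`) and flags a few common mistakes: a missing version tag, an SPF record without a closing `all` mechanism or `redirect=` modifier, more than 10 DNS-querying terms (the limit in RFC 7208), and a DMARC record without a valid `p=` policy. It is a rough check under those assumptions, not a full parser for either specification. DKIM is left out because its key is published under a selector name that depends on your email vendor.

```python
# Mechanisms and modifiers that trigger DNS lookups; RFC 7208 caps these at 10 per evaluation.
DNS_LOOKUP_TERMS = {"include", "a", "mx", "ptr", "exists", "redirect"}


def _term_name(term: str) -> str:
    """Drop an optional qualifier (+, -, ~, ?) and return just the mechanism or modifier name."""
    name = term.lstrip("+-~?").lower()
    for sep in (":", "=", "/"):
        name = name.split(sep, 1)[0]
    return name


def check_spf(record: str) -> list[str]:
    """Flag a few common SPF mistakes in a TXT record string."""
    terms = record.split()
    if not terms or terms[0].lower() != "v=spf1":
        return ["record must start with v=spf1"]
    names = [_term_name(t) for t in terms[1:]]
    problems = []
    # Counts only this record's own terms; lookups inside included records also count toward the limit.
    lookups = sum(1 for n in names if n in DNS_LOOKUP_TERMS)
    if lookups > 10:
        problems.append(f"{lookups} DNS-querying terms (RFC 7208 allows at most 10)")
    if "all" not in names and "redirect" not in names:
        problems.append("no 'all' mechanism or redirect= modifier")
    return problems


def check_dmarc(record: str) -> list[str]:
    """Flag a missing version tag or policy in a DMARC TXT record string."""
    pairs = [part.split("=", 1) for part in record.split(";") if "=" in part]
    tags = {key.strip().lower(): value.strip() for key, value in pairs}
    problems = []
    # The v=DMARC1 tag must come first in the record.
    if not pairs or pairs[0][0].strip().lower() != "v" or pairs[0][1].strip() != "DMARC1":
        problems.append("record must start with v=DMARC1")
    if tags.get("p", "").lower() not in {"none", "quarantine", "reject"}:
        problems.append("missing or invalid p= policy")
    return problems
```

An empty list means none of these particular checks failed, not that the record is guaranteed to be correct.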

Website Security Issues

Blacklist operators monitor the internet and list IPs that host malware-infected websites, distribute ransomware, or serve malicious content such as phishing scams. If your website is vulnerable to hackers and fraudsters, your domain may be flagged by blacklist operators. For example, if you don’t have security software to mitigate attacks, haven’t set up two-factor authentication, or haven’t installed SSL/TLS certificates, you’re putting yourself at risk.

Using a Shared IP for Your Domain and Email Server

Two kinds of IPs exist: shared and dedicated. Shared IP addresses are used by multiple users or websites simultaneously, while dedicated IPs are assigned exclusively to a single user or website. Shared IPs are typically free, or far cheaper than dedicated IPs.

While a dedicated IP doesn’t always make financial sense, a shared one puts you at risk of blacklisting: when another domain or sender on the same IP is identified as a spammer, you suffer too.

Spam-Like Emailing Behavior

When running a mass email campaign, be careful about how and what you send. Even legitimate, relevant emails can lead to blacklisting if your sending matches certain patterns. Here are some activities that are considered suspicious and may lead to blacklisting:

- A spike in the number of emails sent. If your email list suddenly grows, providers may question whether the new addresses were obtained legitimately. Payment systems work the same way: a sudden, unprecedented, large financial transfer is always going to arouse suspicion.

- Going over sending limits. Very large sending volumes suggest you may be sending unwanted or phishing emails. Many email vendors set a daily sending limit, and exceeding it can result in blacklisting.

- Non-existent addresses on your list. These suggest to email providers that you obtained addresses through “grey” means, such as purchasing email lists, and don’t care whether recipients are interested in your message or will actually receive it. Repeatedly sending to invalid addresses can get your mail server IP blacklisted.

- Blacklisted websites in your email body. To mailbox providers and blacklist operators, this indicates a connection to the suspicious website.
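You can catch the first two warning signs yourself by comparing each day’s send volume with your recent history and your vendor’s daily limit. In this sketch, the 7-day window, the 2× spike factor, and the daily limit you pass in are illustrative assumptions, not thresholds any blacklist publishes; substitute your own vendor’s documented limits.

```python
from statistics import mean


def volume_warnings(daily_counts: list[int], daily_limit: int,
                    window: int = 7, spike_factor: float = 2.0) -> list[str]:
    """Check the most recent day's send count against a trailing average and a daily limit.

    daily_counts: emails sent per day, oldest first; the last entry is "today".
    window, spike_factor, and daily_limit are assumptions to tune for your own setup.
    """
    if not daily_counts:
        return []
    today = daily_counts[-1]
    history = daily_counts[-(window + 1):-1]  # up to `window` days before today
    warnings = []
    if history:
        baseline = mean(history)
        if baseline > 0 and today > spike_factor * baseline:
            warnings.append(f"spike: {today} sent vs. {baseline:.0f}/day average")
    if today > daily_limit:
        warnings.append(f"over limit: {today} sent vs. limit of {daily_limit}")
    return warnings
```

A spike warning doesn’t mean you must not send; it is a prompt to ramp up gradually instead of all at once.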

How to Get Your IP Address Off a Blacklist

Step 1. Understand the Reason You Were Blacklisted

Some blacklist operators require evidence that you have fixed the issue that caused blacklisting before they will take you off their list. If you have been blacklisted on multiple lists, this can damage your mail server IP and domain reputation, and the consequences of each subsequent ban can be more severe. You might face a long delisting procedure (3-4 months) or be blocked permanently.

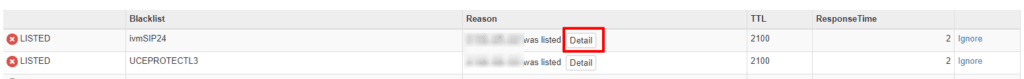

To understand why you were blacklisted, check your mail server IP and domain with several blacklist checkers. We suggest starting with MXToolbox, BlacklistAlert, Mail Genius, Mail Tester, and an IP tracker tool.

These services will identify which blacklists you are listed on and, in some cases, give the most likely reason. Next, visit the websites of those blacklists and look up your IP there too. Using several tools gives you the most accurate picture.
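DNS-based blacklists often encode the reason for a listing in the address they return. For Spamhaus’s combined ZEN list, the return code identifies which sub-list matched. The mapping below reflects Spamhaus’s published ZEN return codes as we understand them; verify it against their current documentation before relying on it, and note that other operators use their own codes.

```python
# Spamhaus ZEN return codes as we understand them; check Spamhaus's current documentation.
ZEN_CODES = {
    "127.0.0.2": "SBL: listed as a known source of spam",
    "127.0.0.3": "SBL CSS: low-reputation sending, such as snowshoe spam",
    "127.0.0.9": "DROP: netblock hijacked or controlled by spam operations",
    "127.0.0.10": "PBL (ISP-maintained): address range not meant to send mail directly",
    "127.0.0.11": "PBL (Spamhaus-maintained): address range not meant to send mail directly",
}
# 127.0.0.4 through 127.0.0.7 all indicate the Exploits Block List (XBL).
for last_octet in range(4, 8):
    ZEN_CODES[f"127.0.0.{last_octet}"] = "XBL: host appears compromised (e.g. infected with malware)"


def explain_zen(codes: list[str]) -> list[str]:
    """Translate the A records returned by a zen.spamhaus.org lookup into likely reasons."""
    return [ZEN_CODES.get(code, f"{code}: unknown code, check the operator's documentation")
            for code in codes]
```

For example, a PBL code usually points to a sending setup problem (mail leaving directly from an address range meant for end users) rather than to spam you sent, while an XBL code suggests checking your machines for malware.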

Step 2. Fix the Blacklisting Problem

Once you’ve identified the likely reason(s) for blacklisting, work with your IT team or hire external experts to fix them. The following steps can also help reverse the blacklisting:

- Check whether the blacklist operators offer recommendations for resolving the issue.

- Review whether all senders that email from your domain follow emailing best practices and avoid spamming behaviors.

- See if there were attempts or incidents of hijacking your domain or spoofing your IP. (If you use Gcore DNS to host your domain, you are protected against such incidents by default. Even with a free plan, you’ll receive minimum protection.)

- If you are using a shared IP, contact the support team of your email vendor or hosting provider. Ask them if there are spamming issues with one of the IPs on the network and find out how they can help you with recovery.
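One simple way to spot DNS hijacking is to keep a known-good baseline of the addresses your hostnames should resolve to and compare it with what they resolve to today. A minimal sketch, assuming you maintain that baseline yourself (the hostnames and documentation-range addresses below are placeholders). It only checks IPv4 A records through your system resolver, so changes to MX, NS, or TXT records still need a review in your DNS provider’s dashboard or with `dig`.

```python
import socket
from typing import Callable


def resolve_ipv4(hostname: str) -> set[str]:
    """Current IPv4 addresses for a hostname, via the system resolver."""
    infos = socket.getaddrinfo(hostname, None, socket.AF_INET)
    return {info[4][0] for info in infos}


def dns_drift(baseline: dict[str, set[str]],
              resolve: Callable[[str], set[str]] = resolve_ipv4) -> dict[str, dict[str, set[str]]]:
    """Report hostnames whose A records no longer match the known-good baseline."""
    drift = {}
    for host, expected in baseline.items():
        try:
            current = resolve(host)
        except socket.gaierror:
            current = set()  # the name no longer resolves at all
        if current != expected:
            drift[host] = {"unexpected": current - expected, "missing": expected - current}
    return drift


# Hypothetical baseline; replace with your own hostnames and addresses.
BASELINE = {
    "www.example.com": {"192.0.2.10"},
    "mail.example.com": {"192.0.2.25"},
}
# print(dns_drift(BASELINE))
```

Hostnames served through a CDN or load balancer can legitimately change addresses, so treat a difference as a prompt to investigate rather than proof of an attack.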

Step 3. Request Delisting

Now that you’ve resolved the blacklisting causes and taken internal steps to improve your reputation, you are ready to request delisting from the relevant blacklisting agency.

The procedure for requesting and waiting for delisting depends on whether the blacklist in question is a self-service or automated blacklist.

- Self-service blacklists, e.g. Spamhaus. Go to their website and request delisting manually by filling out the removal form for your IP or domain. Be polite, and explain in detail what happened, what you have already done to resolve the problem, and what you plan to do to prevent it from happening again.

- Automated blacklists, e.g. UCEPROTECT Level 1. Follow the same procedure as above, then wait 1-2 weeks for the system to update and remove you from the list.
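While you wait, rechecking on a schedule tells you when the delisting has taken effect without manual lookups. This sketch takes any check function you supply (for example, a DNSBL lookup that returns `True` while your IP is still listed). The 12-hour interval and 28-check cap (two weeks, matching the 1-2 week timeframe for automated lists) are assumptions, not operator guidance.

```python
import time
from typing import Callable, Optional


def wait_for_delisting(is_listed: Callable[[], bool],
                       interval_s: float = 12 * 3600,
                       max_checks: int = 28,
                       sleep: Callable[[float], None] = time.sleep) -> Optional[int]:
    """Poll until is_listed() returns False.

    Returns the number of checks it took, or None if still listed after max_checks.
    """
    for attempt in range(1, max_checks + 1):
        if not is_listed():
            return attempt
        if attempt < max_checks:
            sleep(interval_s)  # wait before the next check; skipped after the last one
    return None
```

Keep the interval generous: querying a list many times per hour won’t speed up removal and may run into the operator’s rate limits.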

It’s important to know that trusted blacklists won’t ask you to pay for delisting, so beware of scams.

How Long Does It Take to Be Removed from a Blacklist?

Once you’ve requested delisting, it generally takes 1-2 weeks for the request to be actioned if the blacklist has an automatic delisting procedure. Blacklists with self-service removal may be quicker—several hours to days. In some extreme cases—like if you were previously blacklisted, were blacklisted for a severe issue, or have a poor IP or domain reputation—delisting may take up to 3-4 months.

How Can I Prevent My Website from Being Blacklisted?

As you can see, dealing with blacklists is a real headache. It is easier to prevent blacklisting upfront than to deal with downtime or even lost clients afterward.

Some effective ways to avoid getting blacklisted include protecting your domain from DNS hijacking, regularly checking your IP and domain reputation, having a dedicated IP address for email and domain, and following mass email best practices. Let’s look at each of these in depth.

Protect Your Domain from DNS Hijacking

Your domain might be blacklisted because your website was compromised, for example through DNS hijacking, and is being used for malicious activities. This is a common problem: in 2022, 28% of 1,000 surveyed organizations of different sizes experienced one or more DNS hijacking attacks. Robust security is important not only for avoiding blacklisting, but also for your reputation and uptime.

Here’s how to get started:

- Use server protection software. In the event of an attack, a system blocks the IP address of the server so an attacker cannot continue with the invasion. Gcore offers free protection and enhanced paid options for its servers.

- Ensure you have strong passwords to access your DNS account.

- Implement two-factor authentication to access your website.

- Install SSL certificates so that data transmitted between the user’s browser and your website is encrypted, keeping sensitive information secure.

- Keep your CMS, plugins, and other web software updated.

- Regularly back up your website’s data in case of a security breach or data loss.
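For the SSL item in particular, an expired certificate is easy to miss. A minimal standard-library sketch that reports how many days remain on a site’s TLS certificate; the hostname is a placeholder, and the network call is kept separate from the date arithmetic so the latter can be checked offline.

```python
import socket
import ssl
import time
from typing import Optional


def days_left(not_after: str, now: Optional[float] = None) -> int:
    """Whole days until a certificate's notAfter date (in the format getpeercert() returns)."""
    expires = ssl.cert_time_to_seconds(not_after)
    current = time.time() if now is None else now
    return int((expires - current) // 86400)  # negative once the certificate has expired


def fetch_not_after(hostname: str, port: int = 443, timeout: float = 10.0) -> str:
    """Connect with certificate verification on and return the certificate's notAfter field."""
    context = ssl.create_default_context()
    with socket.create_connection((hostname, port), timeout=timeout) as sock:
        with context.wrap_socket(sock, server_hostname=hostname) as tls:
            return tls.getpeercert()["notAfter"]


# Example (placeholder host):
# print(days_left(fetch_not_after("www.example.com")))
```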

Regularly Check Your IP and Domain Reputation

Monitoring your blacklist status is key because it lets you act immediately to get delisted. Fortunately, there are tools that offer blacklist monitoring and instant alerts, including MXToolbox, Barracuda Networks, and Mailgun.
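If you run your own checks alongside a monitoring service, the useful signal is a change, such as a list you weren’t on yesterday. This sketch compares snapshots of which lists flag you, however you gather them (a DNSBL lookup per list, an export from a checking tool), stores the latest one in a JSON file, and reports additions and removals. The file path and list names are placeholders.

```python
import json
from pathlib import Path


def listing_changes(previous: set[str], current: set[str]) -> list[str]:
    """Compare two snapshots of the blacklists that flag your IP or domain."""
    alerts = [f"NEW listing: {name}" for name in sorted(current - previous)]
    alerts += [f"delisted: {name}" for name in sorted(previous - current)]
    return alerts


def update_snapshot(state_file: Path, current: set[str]) -> list[str]:
    """Load the last snapshot (if any), save the new one, and return change alerts."""
    previous = set(json.loads(state_file.read_text())) if state_file.exists() else set()
    state_file.write_text(json.dumps(sorted(current)))
    return listing_changes(previous, current)


# Example: run daily from a scheduler and forward any alerts to email or chat.
# print(update_snapshot(Path("blacklist_state.json"), {"zen.spamhaus.org"}))
```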

Have a Dedicated IP Address for Email and Domain

A thorough assessment of your specific needs and circumstances is essential before you make the final decision whether to use a dedicated or shared email or domain server. However, some general guidelines can help you make an initial assessment.

For an Email Server

With a shared mail server IP, other senders can cause you reputation and deliverability issues. However, a dedicated IP is more expensive than a shared one. We recommend a dedicated mail server IP in the following cases:

- You send a high volume of emails (say, over 10,000 per day).

- You have the human resources to manage your dedicated IP.

- You can afford a dedicated IP. Prices range from $2 to $200 per month.

For a Domain

If one of the domains on shared hosting is compromised, the performance and reputation of others will be damaged too. Some hosting providers (including Gcore) offer protection for shared IPs. If one of the domains experiences attacks, the IP is blocked and hackers can’t damage the reputation of any other domain that uses this IP.

Choose dedicated server hosting if:

- You have the resources to manage your dedicated IP.

- Your website receives high traffic volumes, on the order of 100,000 visitors.

- You manage resource-intensive applications such as databases, video streaming, and gaming platforms.

- Your website deals with sensitive information and you need the highest level of security.

Follow Mass Email Best Practices

Blacklisting can happen even to the most careful sender in unusual circumstances, but many of the actions that trigger it are completely avoidable. It most often happens to people who are new to mass emailing or who don’t follow best practices. Here’s a checklist to get up to speed before your next campaign:

- Set up or recheck your email authentication (DMARC, DKIM, and SPF) in your DNS account

- Use double opt-in when collecting emails to verify addresses and filter for interested users

- Regularly update your email list: watch for hard bounces, remove non-existent emails, and choose email providers that automatically stop sending to hard-bounced email addresses

- Increase your email list gradually

- Offer an unsubscribe option, so recipients who no longer want your emails unsubscribe instead of flagging them as spam

- Avoid spam-triggering words in your email body

- Don’t overuse links

- Avoid blacklisted websites and link shorteners in your email body

- Segment your email list to send more relevant emails and avoid spam complaints

- Choose trusted email providers
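Several items on this checklist come down to keeping the list clean. A minimal sketch of that step, assuming you can export your subscriber list and your provider’s hard-bounce and unsubscribe records as plain lists of addresses: it normalizes addresses, drops duplicates and obviously malformed entries, and suppresses anyone who hard-bounced or unsubscribed. The format check is deliberately loose; confirming that an address really works is what double opt-in is for.

```python
import re

# Loose shape check only (something@something.tld, no spaces); not full RFC 5322 validation.
EMAIL_SHAPE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def clean_list(subscribers: list[str], hard_bounces: set[str], unsubscribed: set[str]) -> list[str]:
    """Return a deduplicated send list with malformed, bounced, and unsubscribed addresses removed."""
    # Lowercasing is a common simplification; strictly, the local part may be case-sensitive.
    suppressed = {a.strip().lower() for a in hard_bounces | unsubscribed}
    seen = set()
    result = []
    for raw in subscribers:
        addr = raw.strip().lower()
        if not EMAIL_SHAPE.match(addr) or addr in suppressed or addr in seen:
            continue
        seen.add(addr)
        result.append(addr)
    return result
```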

Conclusion

IP or domain blacklisting is a common problem when actively running mass email campaigns. You don’t need to be a spammer to end up on a blacklist: you might have spam-like sending habits or weak website security without realizing it.

Usually, you will be able to recover your mail server IP or domain within a week or two by following the simple delisting steps in this article. It’s then important to follow web security and email best practices to avoid future blacklisting occurrences.

