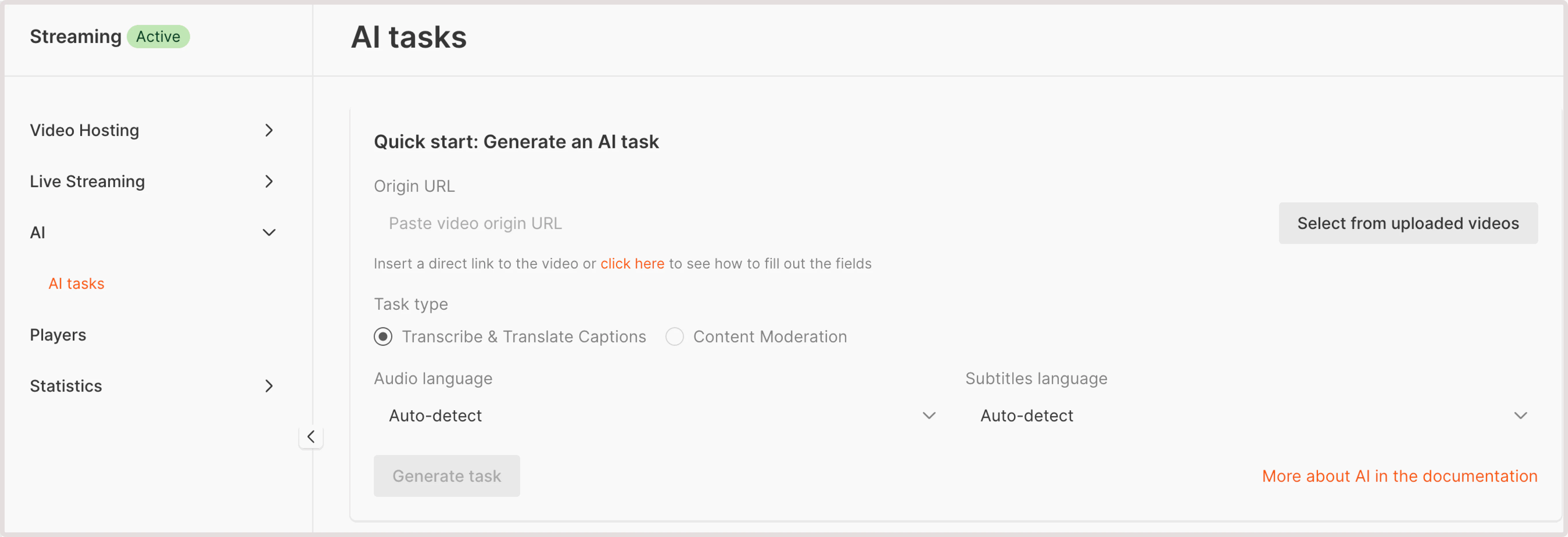

- Paste video origin URL: If your video is stored externally, provide the URL where it is located. Make sure the video can be accessed over HTTP or HTTPS.

- Select from uploaded videos: Choose a video that is already hosted on the Gcore platform.
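If you paste an origin URL, it can help to check its form before you submit it. The following is a minimal sketch in Python, not part of the Gcore platform. It assumes that checking the URL's format is enough for a first pass. The function name `is_valid_origin_url` is hypothetical. The check only confirms that the URL uses `http` or `https` and names a host. It does not confirm that the video is actually reachable.

```python
from urllib.parse import urlparse


def is_valid_origin_url(url: str) -> bool:
    """Hypothetical pre-check: True if the URL uses HTTP(S) and names a host.

    This inspects only the URL's structure; it does not fetch the video,
    so a URL that passes may still be unreachable.
    """
    parsed = urlparse(url.strip())
    # urlparse lowercases the scheme, so "HTTPS://..." is accepted too.
    return parsed.scheme in ("http", "https") and bool(parsed.netloc)


print(is_valid_origin_url("https://example.com/videos/demo.mp4"))  # True
print(is_valid_origin_url("ftp://example.com/videos/demo.mp4"))    # False
```

To confirm the file can actually be reached, you could follow this with a real HTTP request, for example a `HEAD` request. That step is left out here so the sketch runs without network access.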

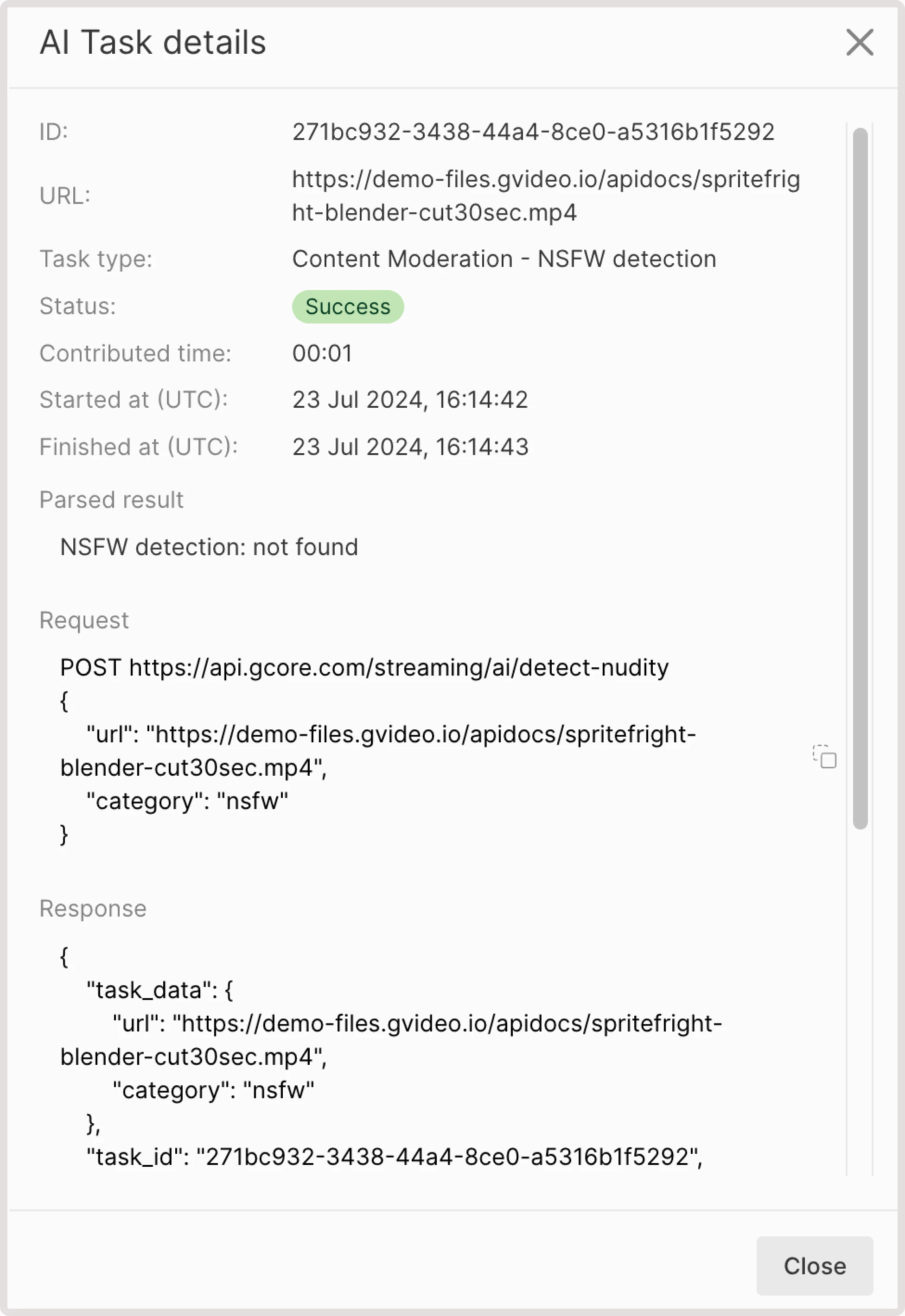

- If the result is "NSFW detection: not found", no NSFW content was detected in your video.

- If sensitive content is found, you get information about the detected element, the frame in which it was detected, and a confidence score (as a percentage) showing how certain the AI is that the content is NSFW. For example, the output might be: “nsfw: detected at frame №2 with score 41%”.
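You may want to process these results in a script, for example to flag videos for manual review. The following minimal Python sketch extracts the frame number and score from a result string. It assumes every positive result follows the exact format of the example above ("nsfw: detected at frame №N with score S%"). That format is inferred from a single sample and is not a documented contract. The function name `parse_nsfw_result` is hypothetical.

```python
import re
from typing import Optional, Tuple

# Assumed format, inferred from the example output:
#   "nsfw: detected at frame №2 with score 41%"
# The score group also accepts decimals in case the real output includes them.
RESULT_PATTERN = re.compile(r"nsfw: detected at frame №(\d+) with score (\d+(?:\.\d+)?)%")


def parse_nsfw_result(text: str) -> Optional[Tuple[int, float]]:
    """Hypothetical helper: return (frame_number, score_percent), or None.

    None means the text did not match the assumed "detected" format,
    for example when the result is "NSFW detection: not found".
    """
    match = RESULT_PATTERN.search(text)
    if match is None:
        return None
    return int(match.group(1)), float(match.group(2))


print(parse_nsfw_result("nsfw: detected at frame №2 with score 41%"))  # (2, 41.0)
print(parse_nsfw_result("NSFW detection: not found"))                  # None
```

What counts as a high enough score to act on is your own choice. A 41% score, as in the example, means the model is far from certain, so results like that are usually better reviewed by a person than handled automatically.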