Virtual GPU clusters provide GPU computing resources through virtualization, offering flexibility in configuration and resource allocation. Each cluster consists of one or more virtual machines with dedicated GPU access. Virtual GPU is one of the GPU cluster types available in GPU Cloud, alongside Bare Metal and Spot options.

Cluster architecture

Each Virtual GPU cluster consists of one or more virtual machine nodes. All nodes are created from an identical template (image, network settings, disk configuration). After creation, the disk and network configuration of each node can be modified independently. For flavors with InfiniBand support, high-speed inter-node networking is configured automatically, which enables efficient distributed training across multiple nodes without manual network configuration.

Each node has:

- A network boot disk (required). At least one network disk is needed to serve as the boot volume for the operating system.

- A local data disk added by default. This non-replicated disk is dedicated to temporary storage.

- Optional network data disks that can be attached during creation or added later. Network disks persist independently of node state.

- Optional file share integration for shared storage across instances.

Create a Virtual GPU cluster

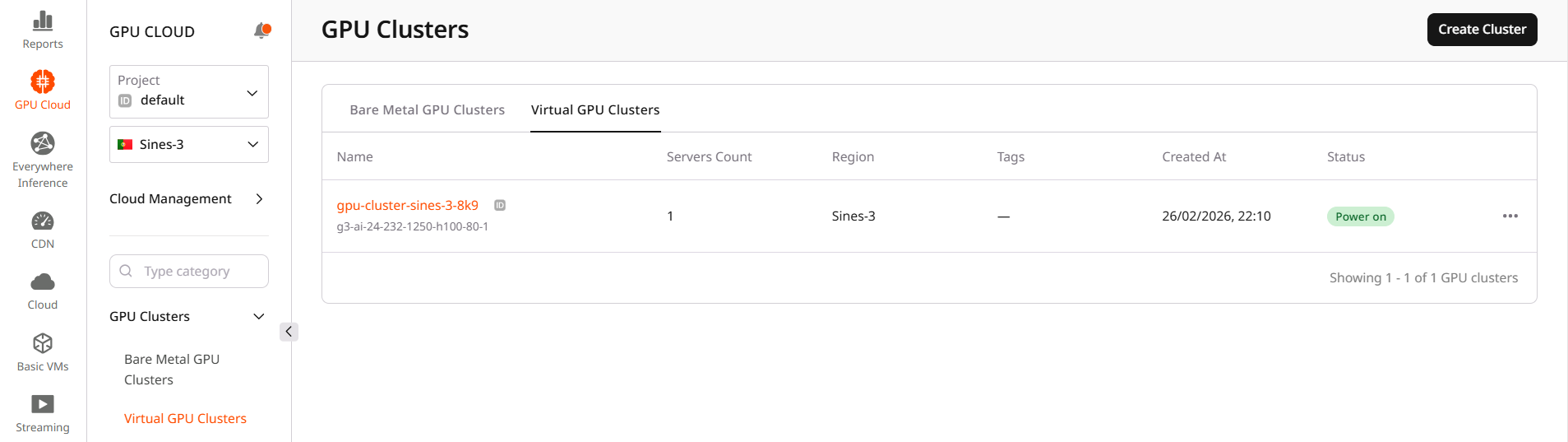

To create a cluster:

- Open the Gcore Customer Portal and go to GPU Cloud.

- Select the Sines-3 region.

- Navigate to GPU Clusters > Virtual GPU Clusters.

- Click Create Cluster.

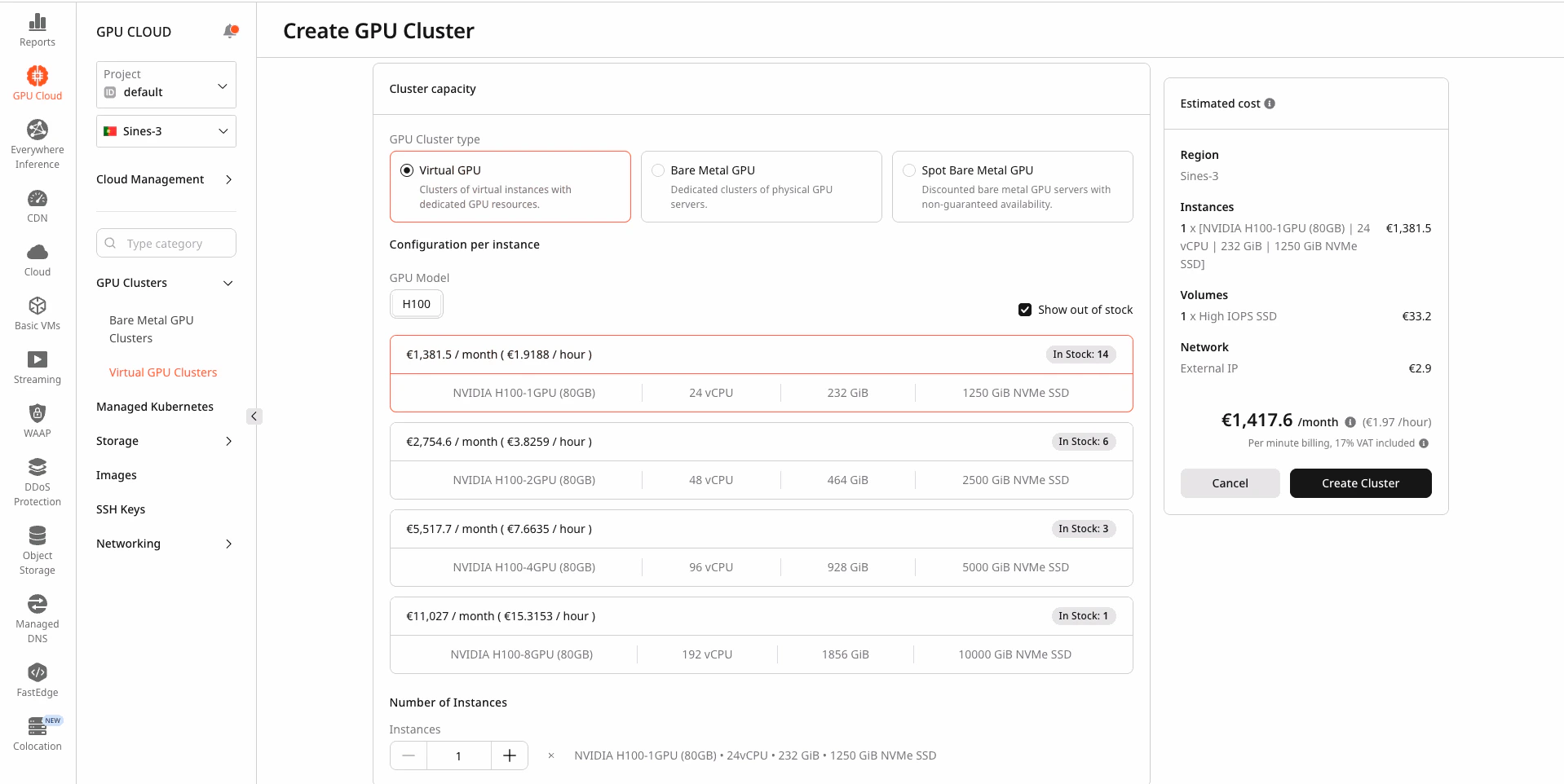

Step 1. Configure cluster capacity

Cluster capacity determines the hardware specifications for each node in the cluster.

- Select the GPU Model. Available models depend on the region.

- Enable or disable Show out of stock to filter available flavors.

- Select a flavor. Each flavor card displays GPU configuration, vCPU count, RAM capacity, and pricing.

Step 2. Set the number of instances

In the Number of Instances section, specify how many virtual machines to provision in the cluster. Each instance is a separate virtual machine with the selected flavor configuration. When created, all instances have identical configurations. The minimum is 1 instance; the maximum depends on regional availability.

Step 3. Select image

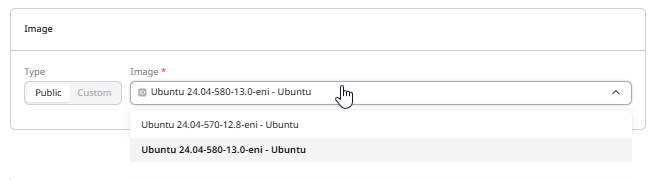

The image defines the operating system and pre-installed software for cluster nodes. In the Image section, choose the operating system:

- Public: Pre-configured images with NVIDIA drivers and CUDA toolkit

- Custom: Custom images uploaded to the account

Images with the eni suffix are configured for InfiniBand interconnect.

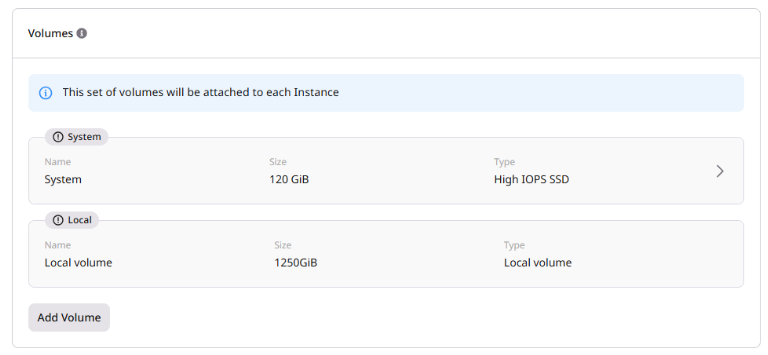

Step 4. Configure volumes

Each Virtual GPU cluster instance has the following storage:

- System volume (required): The boot volume for the operating system. This network disk persists across power cycles.

- Local volume: Added automatically to every instance. This non-replicated disk is dedicated to temporary storage only. It cannot be edited, removed, or used as a boot volume. Data on this disk is deleted when the instance is shelved, reconfigured, or deleted.

- Additional network volumes (optional): Additional persistent storage that survives power cycles.

- Name: Enter a name for the volume

- Size: Minimum size depends on the selected image (default: 120 GiB)

- Type: Select from available storage types in the region
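Because data on the local volume is deleted when an instance is shelved, reconfigured, or deleted, results worth keeping need to be copied to a network volume or file share first. The following is a minimal sketch of such a copy step, not a Gcore tool: the function name `sync_to_persistent` is hypothetical, and the two directories are whatever paths the local volume and the persistent volume happen to be mounted at on the node.

```python
import shutil
from pathlib import Path


def sync_to_persistent(scratch_dir, persistent_dir):
    """Copy files from scratch_dir to persistent_dir.

    A file is copied when it is missing in persistent_dir or when the copy
    there is older than the source. Returns the list of destination paths
    that were written.
    """
    scratch = Path(scratch_dir)
    persistent = Path(persistent_dir)
    copied = []
    for src in sorted(scratch.rglob("*")):
        if not src.is_file():
            continue  # directories are created on demand below
        dst = persistent / src.relative_to(scratch)
        if dst.exists() and dst.stat().st_mtime >= src.stat().st_mtime:
            continue  # destination is already up to date
        dst.parent.mkdir(parents=True, exist_ok=True)
        shutil.copy2(src, dst)  # copy2 also preserves the modification time
        copied.append(dst)
    return copied
```

For example, with hypothetical mount points, `sync_to_persistent("/mnt/local/checkpoints", "/mnt/data/checkpoints")` could be run before shelving a node. Running it a second time without changes copies nothing.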

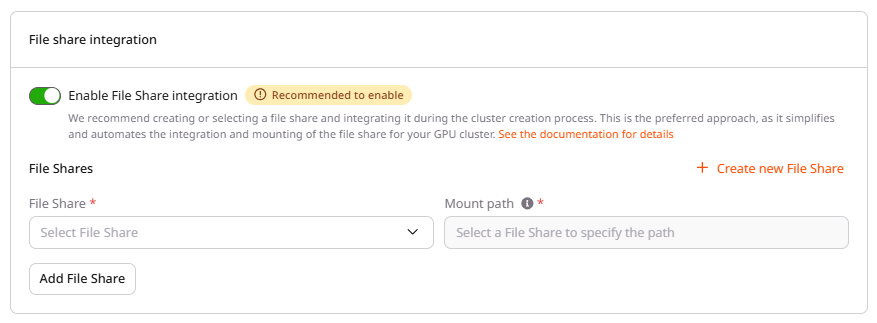

Step 5. Configure file share integration (optional)

The File share integration section enables connecting a shared file system accessible across all instances in the cluster. Enabling file share integration is recommended for workflows that require shared data access, such as distributed training or shared datasets.

To enable file share integration:

- Enable the File Share integration toggle.

- Select an existing file share or create a new one.

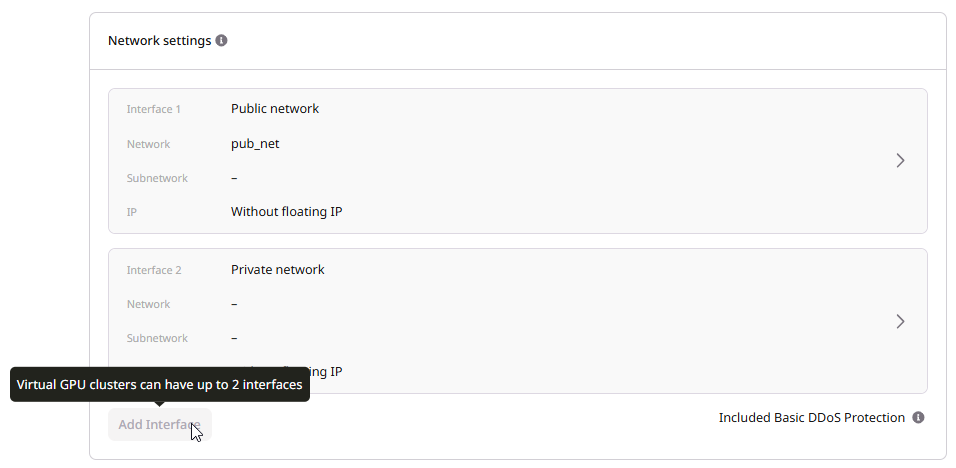

Step 6. Configure network settings

Network settings define how the cluster communicates with external services and other resources based on the network architecture. At least one interface is required.

Info: Virtual GPU clusters support a maximum of 3 network interfaces (Public and Private combined) per instance. If file share integration is enabled, the limit is reduced to 2 interfaces, because one interface is reserved for NFS connectivity. InfiniBand interfaces are not counted toward this limit; they are configured automatically based on the selected flavor.

| Type | Access | Use case |
|---|---|---|
| Public | Direct internet access with dynamic public IP | Development, testing, quick access to cluster |
| Private | Internal network only, no external access | Production workloads, security-sensitive environments |
| Dedicated public | Reserved static public IP | Production APIs, services requiring stable endpoints |
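The interface limits in this step can be expressed as a small pre-flight check, for instance when cluster definitions are prepared in a script before being entered in the portal. This is only a sketch under stated assumptions: the function name and the type labels ("public", "private", "dedicated_public", "infiniband") are hypothetical, Dedicated public interfaces are assumed to count toward the limit like other public interfaces, and the required interface is assumed to be a non-InfiniBand one.

```python
from typing import List

# Limits as stated in this guide, per instance.
MAX_INTERFACES = 3
MAX_INTERFACES_WITH_FILE_SHARE = 2  # one interface is reserved for NFS


def check_interfaces(interface_types: List[str], file_share_enabled: bool) -> int:
    """Return the number of counted interfaces, or raise ValueError.

    InfiniBand interfaces are ignored because they are configured
    automatically and do not count toward the limit.
    """
    counted = [t for t in interface_types if t != "infiniband"]
    limit = MAX_INTERFACES_WITH_FILE_SHARE if file_share_enabled else MAX_INTERFACES
    if not counted:
        # Assumption: the mandatory interface must be Public or Private.
        raise ValueError("at least one interface is required")
    if len(counted) > limit:
        raise ValueError(f"{len(counted)} interfaces exceed the limit of {limit}")
    return len(counted)
```

With this sketch, one public and two private interfaces pass without a file share, while the same three interfaces raise an error once file share integration is enabled.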

Step 7. Configure firewall settings

The Firewall settings section applies firewall rules to control inbound and outbound traffic for cluster instances.

- In the Firewall settings section, select one or more firewalls from the dropdown.

- To add more firewalls, click Add Firewall.

Step 8. Configure SSH key

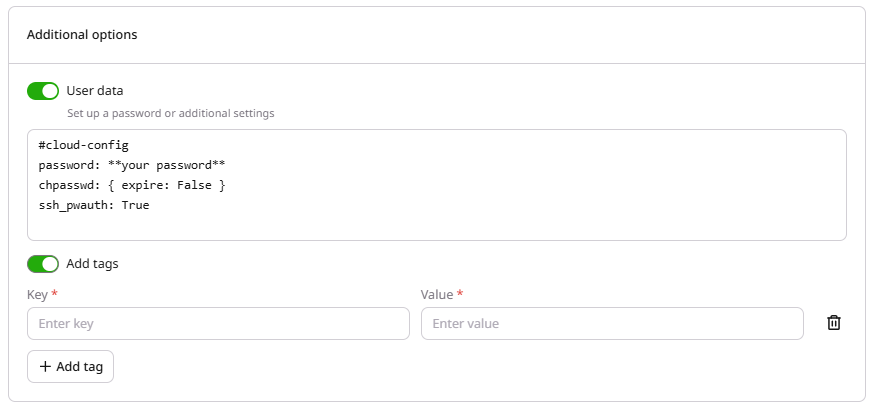

In the SSH key section, select an existing key from the dropdown or create a new one. Keys can be uploaded or generated directly in the portal. If generating a new key pair, save the private key immediately as it cannot be retrieved later.

Step 9. Set additional options

The Additional options section provides optional settings: user data scripts for automated configuration and metadata tags for resource organization.

Step 10. Name and create the cluster

The final step assigns a name to the cluster and initiates provisioning.

- In the GPU Cluster Name section, enter a name or use the auto-generated one.

- Review the Estimated cost panel on the right.

- Click Create Cluster.

Connect to the cluster

After the cluster is created, connect to a node via SSH and verify that GPUs are available.

- Open a terminal and connect using the default username ubuntu, for example with `ssh ubuntu@<node-ip-address>`. Replace <node-ip-address> with the public or floating IP shown in the cluster details.

- Verify that GPUs are detected, for example by running `nvidia-smi`.

A successful output shows the list of available GPUs, driver version, and CUDA version. If the command fails or no GPUs appear, the image may be missing the correct drivers.
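For scripted checks, for example in a user data script or a health check, the same GPU verification can be run from Python. The sketch below assumes that `nvidia-smi` is on the PATH of the node and uses its `--query-gpu` and `--format=csv,noheader` options. The function names and the chosen fields are illustrative and not part of any Gcore tooling.

```python
import csv
import io
import subprocess

# Fields requested from nvidia-smi; adjust as needed.
QUERY_FIELDS = ["name", "driver_version", "memory.total"]


def parse_gpu_csv(text):
    """Turn nvidia-smi CSV output (no header) into a list of dicts."""
    rows = csv.reader(io.StringIO(text), skipinitialspace=True)
    return [
        dict(zip(QUERY_FIELDS, (cell.strip() for cell in row)))
        for row in rows
        if row  # skip blank lines
    ]


def list_gpus():
    """Run nvidia-smi and return one dict per detected GPU.

    Raises FileNotFoundError if nvidia-smi is not installed and
    subprocess.CalledProcessError if the command exits with an error.
    """
    result = subprocess.run(
        [
            "nvidia-smi",
            "--query-gpu=" + ",".join(QUERY_FIELDS),
            "--format=csv,noheader",
        ],
        capture_output=True,
        text=True,
        check=True,
    )
    return parse_gpu_csv(result.stdout)
```

Calling `list_gpus()` on a node returns one entry per GPU. An empty list or an exception points to the same cause as a failed manual check: the image may be missing the correct drivers.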