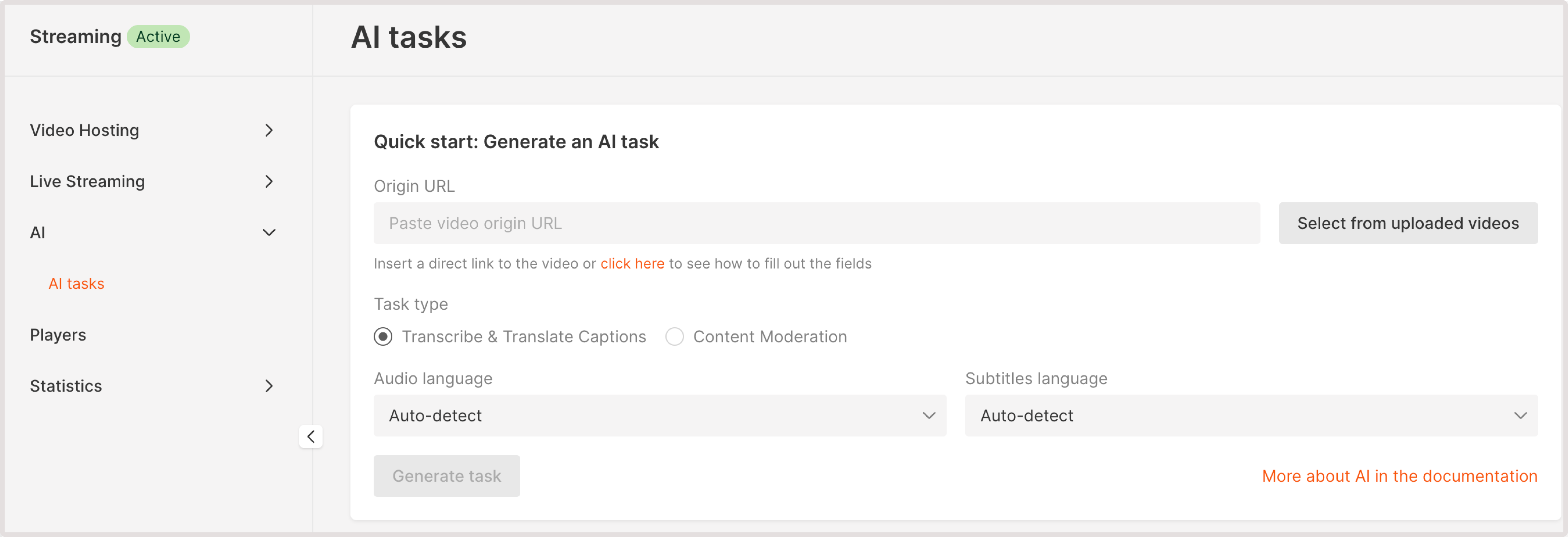

The child pornography content moderation task detects child sexual abuse material (CSAM).

To identify this content, we first run the soft nudity and hard nudity detection tasks. If both tasks indicate obscene content involving a child (a child’s face is detected) in a frame, the video is marked as obscene. Frames are labeled with the age category of the identified children.

The check returns the number of each video frame in which a child’s face was found, along with the child’s age. Objects with a probability of at least 30% are included in the response.

To run the child pornography detection check:

1. In the Gcore Customer Portal, navigate to Streaming > AI. The AI tasks page will open.

- Paste video origin URL: if your video is stored externally, provide a URL to its location. Ensure that the video is accessible via HTTP or HTTPS.

- Select from uploaded videos: choose a video hosted on the Gcore platform.
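
Before pasting an origin URL, it can help to confirm that it uses one of the supported schemes. The following is a minimal sketch using only the Python standard library. The helper name `is_http_url` is hypothetical and not part of any Gcore tooling, and the check is purely syntactic: it does not verify that the video is actually reachable.

```python
from urllib.parse import urlparse


def is_http_url(url: str) -> bool:
    """Return True if the URL has an http or https scheme and a host part.

    Hypothetical helper for illustration only; it does not contact the server.
    """
    parsed = urlparse(url)
    return parsed.scheme in ("http", "https") and bool(parsed.netloc)
```

A URL such as `ftp://example.com/video.mp4` or a bare file name would be rejected by this check, while `https://example.com/video.mp4` would pass.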

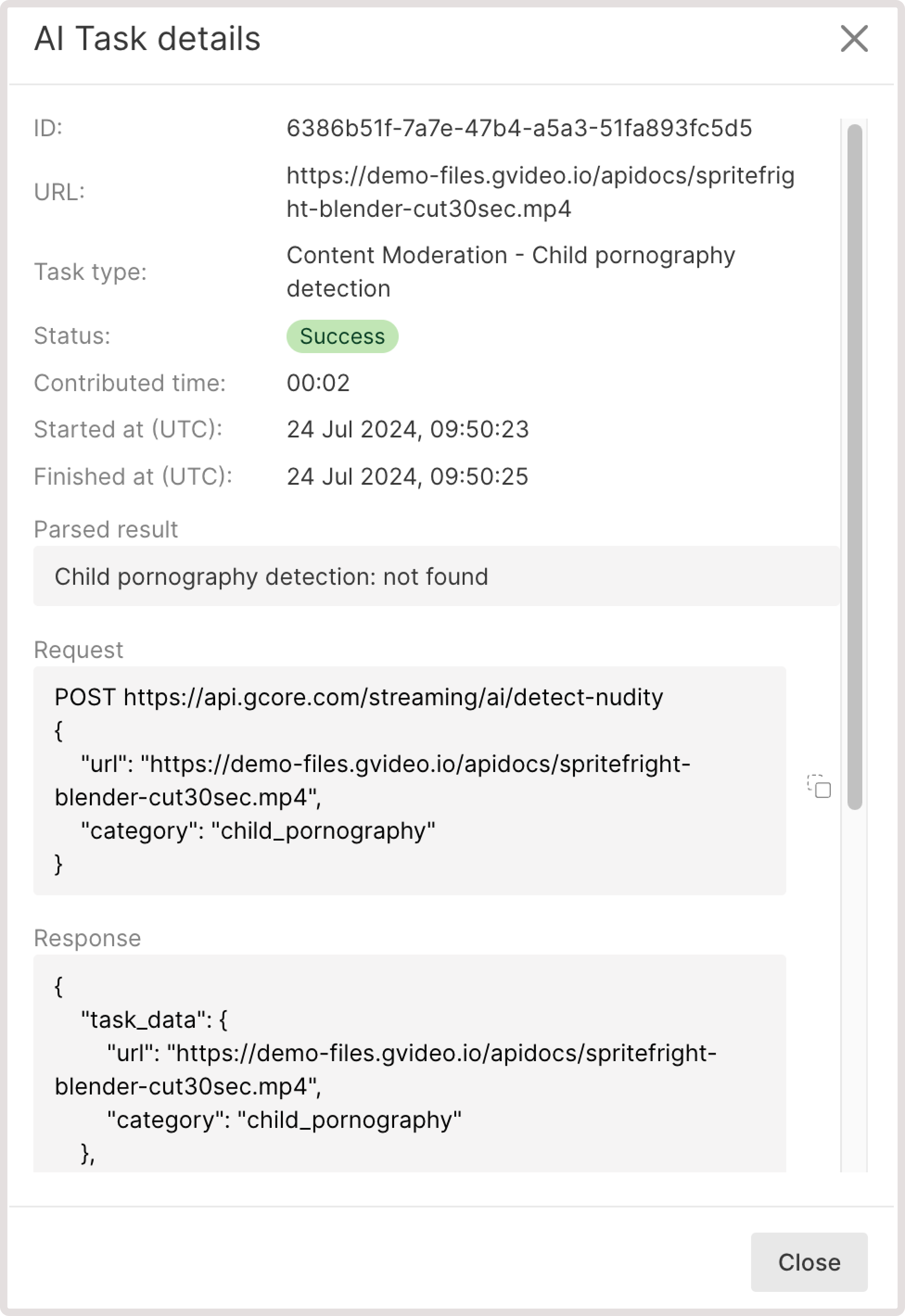

- Child pornography detection: not found. This means that no child sexual abuse material was detected in your video.

- If sensitive content is found, you’ll get information about the detected element, the relevant iFrame, and the confidence level (in %) that the content contains child sexual abuse material.
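
The reporting rule described above (only objects with a probability of at least 30% are included, otherwise the result is "not found") can be sketched as follows. This is an illustration under stated assumptions, not the actual Gcore response format: the `Detection` structure and its field names (`frame_number`, `age`, `probability`) are hypothetical, and probabilities are assumed to be expressed as fractions between 0 and 1.

```python
from typing import TypedDict


class Detection(TypedDict):
    # All field names are hypothetical; the real response format may differ.
    frame_number: int    # number of the video frame where a face was found
    age: str             # age value or category reported for that frame
    probability: float   # model confidence, assumed to be in the range 0.0 to 1.0


MIN_PROBABILITY = 0.30  # threshold stated in the documentation (30%)


def summarize(detections: list[Detection]) -> list[str]:
    """Keep detections at or above the threshold and format them by frame order."""
    kept = sorted(
        (d for d in detections if d["probability"] >= MIN_PROBABILITY),
        key=lambda d: d["frame_number"],
    )
    if not kept:
        return ["Child pornography detection: not found"]
    return [
        f"frame {d['frame_number']}: age {d['age']}, "
        f"confidence {d['probability']:.0%}"
        for d in kept
    ]
```

With this sketch, an empty list or a list containing only low-probability entries yields the single "not found" line, mirroring the first result state described above.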