Every cache miss is a trip your server didn't need to make. And those trips add up fast. Sites with poorly optimized caching can see a cache hit ratio (CHR) as low as 70%, meaning three in 10 requests are hitting your origin server directly, dragging up latency, burning bandwidth, and inflating infrastructure costs. Flip that around, and a well-tuned setup routinely achieves a 95% to 99% CHR. At the low end of that range, roughly 39 out of every 41 requests are served straight from cache, as illustrated by Cloudflare's example figures.

If your pages feel slower than they should, your server bills keep climbing, or Gcore CDN doesn't seem to be pulling its weight, your cache hit ratio is the first number you need to look at. It's one of the most actionable performance metrics you have. And most teams aren't watching it closely enough.

You'll learn exactly how CHR is calculated, what a good ratio actually looks like for your content type, why it matters for both speed and costs, and the practical steps you can take to push your numbers higher, including how CDNs and cache configuration choices make or break your results.

What is cache hit ratio?

Cache hit ratio (CHR) is the percentage of requests your cache serves directly, without fetching data from the origin server. Calculate it with a simple formula: divide cache hits by total requests (hits plus misses), then multiply by 100. If your cache handles 850 hits out of 1,000 requests, your CHR is 85%. A higher ratio means faster responses, lower origin server load, and reduced bandwidth costs.

Think of it as a measure of how hard your cache is actually working. When a user requests content and the cache has it ready, that's a hit. When the cache doesn't have it and has to go back to the origin, that's a miss, and every miss adds latency and cost. For static content like images, scripts, and stylesheets, a well-configured cache should hit 95% or above.

In simple terms: Cache hit ratio tells you what percentage of user requests your cache answered on its own, without bothering the origin server.

How is cache hit ratio calculated?

Cache hit ratio has a simple formula that works across any caching system.

Divide your cache hits by total requests (hits plus misses), then multiply by 100:

CHR = (Cache Hits ÷ (Cache Hits + Cache Misses)) × 100

Here's what that looks like with real numbers. Say your cache logs 39 hits and two misses over a given period: that's a 95.1% CHR. Or 7,000 hits out of 10,000 total requests gives you 70%. The math doesn't change whether you're measuring a CDN edge node, an in-memory database cache, or application-level caching.
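The formula above can be sketched as a small Python helper. The function name `cache_hit_ratio` is illustrative rather than part of any CDN API, and returning 0% when there has been no traffic is an assumption made here for convenience.

```python
def cache_hit_ratio(hits: int, misses: int) -> float:
    """Return the cache hit ratio as a percentage of total requests."""
    total = hits + misses
    if total == 0:
        return 0.0  # assumption: report 0% when no requests were seen
    return 100 * hits / total


print(round(cache_hit_ratio(39, 2), 1))         # 95.1
print(round(cache_hit_ratio(7_000, 3_000), 1))  # 70.0
```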

The inverse is your cache miss ratio. If your CHR is 70%, your miss ratio is 30%. Every point you add to your CHR is a point you subtract from origin server load, and from your latency.

Most CDN dashboards calculate this automatically, so you don't need to run the numbers manually. What matters is knowing what the metric means when you see it, and recognizing when it's trending in the wrong direction.

What is a good cache hit ratio?

A good cache hit ratio is above 80%, though what counts as "good" depends on your content type. For static assets (images, CSS, JavaScript) you should be targeting 95% to 99%. Dynamic content naturally scores lower because it changes too frequently to cache effectively for long.

Here's a practical way to think about it: 850 hits out of 1,000 requests gives you 85% CHR, which is healthy for a mixed-content site. Drop below 80% and you're pushing too many requests back to your origin server, which means higher latency and more infrastructure strain. The 5% to 20% of requests that miss the cache are the expensive ones.

If your site is mostly static, anything below 90% is a signal worth investigating. You're likely misconfiguring TTL values, using too many cache key variations, or caching less than you could be.

In simple terms: A good cache hit ratio means your cache is doing most of the work. For most sites, that's at least 80% of requests served from cache, with static-heavy sites aiming closer to 95% or higher.

Why does cache hit ratio matter for performance and costs?

Every cache miss costs you. Latency goes up, your origin server works harder, and your bill grows. When a request misses the cache, the origin has to fetch and serve that content itself. At scale, that adds up fast.

Here's a concrete example. At 70% CHR on a site handling 10,000 requests, 3,000 of those hit your origin server. That's 3,000 opportunities for slower response times and higher compute costs. Push that to 95% CHR and only 500 requests reach the origin, cutting origin traffic by more than 80%.
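The arithmetic behind that example is easy to check in Python. The helper name `origin_requests` is made up for this sketch, and it assumes every cache miss results in exactly one origin request.

```python
def origin_requests(total_requests: int, chr_percent: float) -> int:
    """Number of requests that miss the cache and reach the origin."""
    return round(total_requests * (100 - chr_percent) / 100)


before = origin_requests(10_000, 70)
after = origin_requests(10_000, 95)
reduction = 100 * (before - after) / before

print(before, after, round(reduction, 1))  # 3000 500 83.3
```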

There's also a compounding effect on latency worth understanding. Cache hits return content from edge nodes close to your users, often in single-digit milliseconds. Cache misses need to travel further (either to a mid-tier cache or all the way back to the origin), adding anywhere from 10 ms to several hundred milliseconds depending on your CDN architecture and how far away the origin is geographically.
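One rough way to reason about this is a weighted average of hit and miss latency. The sketch below is a simplification: the 5 ms hit and 200 ms miss figures are illustrative assumptions within the ranges mentioned above, not measurements, and real latency distributions are not this tidy.

```python
def expected_latency_ms(chr_percent: float, hit_ms: float, miss_ms: float) -> float:
    """Average latency if hits cost hit_ms and misses cost miss_ms."""
    hit_share = chr_percent / 100
    return hit_share * hit_ms + (1 - hit_share) * miss_ms


# Assumed figures for illustration only: 5 ms per hit, 200 ms per miss.
for chr_percent in (70, 95, 99):
    average = expected_latency_ms(chr_percent, hit_ms=5, miss_ms=200)
    print(f"{chr_percent}% CHR -> about {average:.0f} ms on average")
```

Under these assumptions, the average is dominated by the misses, which is why a few percentage points of CHR can shift average response time noticeably.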

The cost side is pretty straightforward: origin servers cost more to run under heavy load, and bandwidth from origin is more expensive than serving from cache.

How does a CDN affect cache hit ratio?

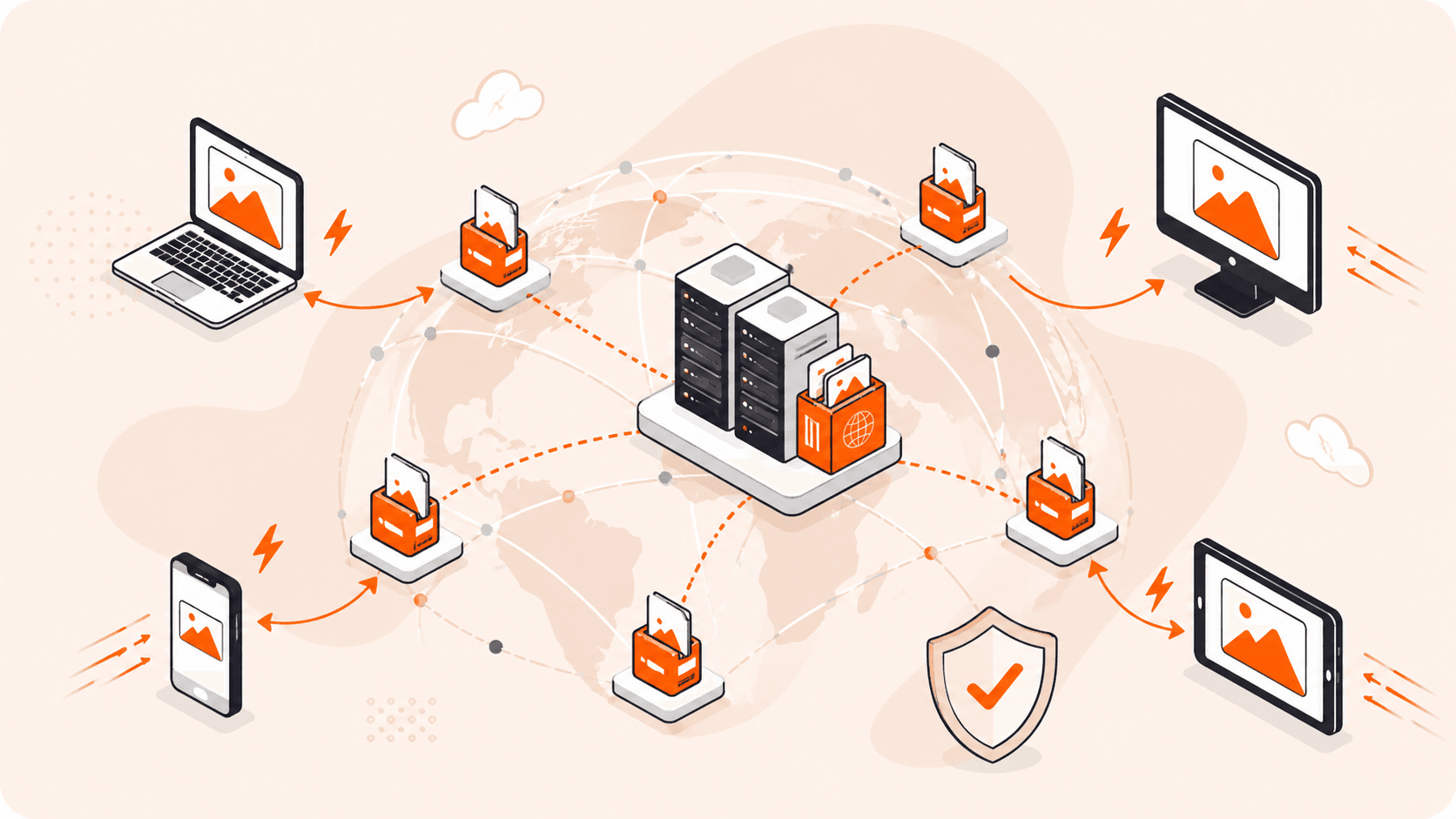

A CDN boosts your cache hit ratio by storing copies of your content across dozens, or even hundreds, of geographically distributed servers. Instead of every request traveling back to a single origin, users get served from the nearest Point of Presence (PoP). That proximity is what drives CHR up.

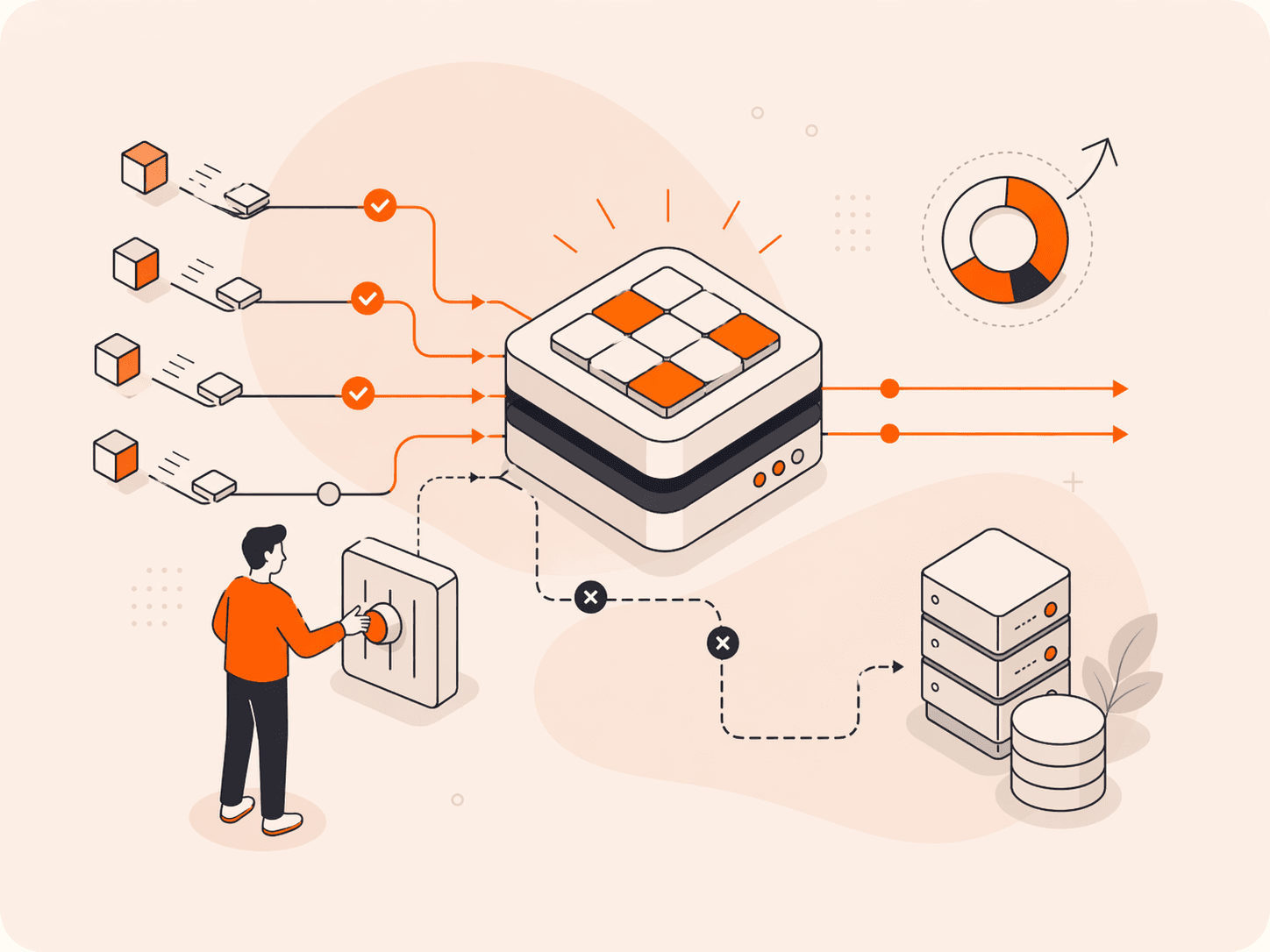

Here's how it works: when a user requests a file, the CDN checks its edge node first. If the content is cached there, it's a hit. If not, the request may pass through an intermediate caching layer (such as a shield or regional cache) before reaching the origin. The edge node then caches the content locally and serves it. Every subsequent request for that same file from nearby users becomes a hit. The cache warms up over time, and your CHR climbs.
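The lookup flow described above can be modeled with a toy Python class. This is a minimal sketch, not how any particular CDN is implemented: `TieredCache`, its attributes, and the single shield layer are all hypothetical, and it ignores TTLs and eviction entirely.

```python
class TieredCache:
    """Toy model of an edge -> shield -> origin lookup (illustrative only)."""

    def __init__(self, origin: dict[str, bytes]):
        self.origin = origin
        self.edge: dict[str, bytes] = {}
        self.shield: dict[str, bytes] = {}
        self.edge_hits = 0
        self.edge_misses = 0
        self.origin_fetches = 0

    def get(self, path: str) -> bytes:
        if path in self.edge:
            self.edge_hits += 1          # served straight from the edge
            return self.edge[path]
        self.edge_misses += 1
        if path not in self.shield:
            self.origin_fetches += 1     # only now does the origin get involved
            self.shield[path] = self.origin[path]
        self.edge[path] = self.shield[path]  # edge caches the content locally
        return self.edge[path]


cdn = TieredCache(origin={"/logo.png": b"image-bytes"})
for _ in range(10):
    cdn.get("/logo.png")

print(cdn.edge_hits, cdn.edge_misses, cdn.origin_fetches)  # 9 1 1
```

The first request is the only miss. Every later request for the same file is a hit, which is the warm-up effect in miniature.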

CDNs don't just cache in one place, though. A static asset requested in Frankfurt gets cached at that edge node, and subsequent requests from nearby users are served from that local cache. Over time, assets get cached across regional clusters as users in each region request them. Your global CHR improves because each regional cluster builds its own cache, reducing misses for local users.

TTL configuration matters here too. A CDN can only hold content as long as its TTL allows. Set TTLs too short and the cache expires before it gets enough reuse. Set them appropriately for static assets (images, scripts, stylesheets) and a well-configured CDN can push your CHR above 95%.
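To see why short TTLs hurt, here is a small simulation of one cached object under a fixed request pattern. It assumes a single cache, a TTL that restarts on every refetch, and evenly spaced requests, all of which are simplifications chosen for this sketch.

```python
def simulate_chr(request_times: list[int], ttl_seconds: int) -> float:
    """CHR (%) for one object requested at the given times, in seconds."""
    if not request_times:
        return 0.0
    hits = 0
    expires_at = None
    for t in request_times:
        if expires_at is not None and t < expires_at:
            hits += 1                      # still fresh: cache hit
        else:
            expires_at = t + ttl_seconds   # miss: refetch and restart the TTL
    return 100 * hits / len(request_times)


# Assumed traffic: one request every 30 seconds for an hour (120 requests).
times = list(range(0, 3600, 30))
print(simulate_chr(times, ttl_seconds=10))              # 0.0
print(simulate_chr(times, ttl_seconds=60))              # 50.0
print(round(simulate_chr(times, ttl_seconds=3600), 1))  # 99.2
```

With a 10-second TTL the object always expires before the next request arrives, so the cache never gets a single hit despite being "enabled".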

In simple terms: A CDN spreads cached copies of your content around the world, so more users get served from a nearby server instead of your origin, and that's exactly what pushes your cache hit ratio up.

How to improve cache hit ratio?

Improving your cache hit ratio comes down to tuning how your cache stores, identifies, and retains content. TTL settings, cache key design, and content type segmentation all directly affect how often requests hit cached data instead of your origin.

- Set appropriate TTLs for each content type. Static assets like images, fonts, and stylesheets rarely change, so cache them aggressively with TTLs of 30 days or longer. Dynamic content needs shorter TTLs, but don't default everything to zero. Even a 60-second TTL on semi-static content can meaningfully reduce origin load during traffic spikes.

- Simplify your cache keys. Every variation in a cache key creates a separate cache entry. If your cache key includes unnecessary query parameters, user-agent strings, or cookies, you'll fragment your cache into thousands of unique entries that rarely get reused. Strip out any parameters that don't actually affect the response content.

- Audit your Vary headers. A `Vary: User-Agent` header tells the cache to store a separate copy for every distinct User-Agent string, and there are thousands of those. That fragments your cache badly. Use `Vary` only when the response genuinely differs, for example, `Vary: Accept-Encoding` for compressed versus uncompressed responses.

- Separate static and dynamic content. Don't apply the same caching rules to everything. Route static and dynamic content through different policies: static assets through aggressive caching, dynamic and personalized responses handled differently. Segmenting by content type lets you push your static CHR above 95% without compromising dynamic accuracy.

- Warm your cache proactively. Don't wait for organic traffic to build your cache. Pre-populate it by crawling your most-requested URLs after a deployment or cache purge. This avoids a wave of cache misses right when traffic peaks.

- Monitor CHR by content type, not just overall. An overall CHR of 75% might look acceptable, but it could be masking a 40% CHR on your most-requested assets. Most CDN dashboards let you segment by URL pattern or file type. Use that data to identify exactly where misses are happening.
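As an illustration of the cache key advice in the list above, the following sketch normalizes URLs before they are used as cache keys. The `IGNORED_PARAMS` set is a hypothetical example; which parameters are safe to drop depends entirely on your application. Most CDNs expose this as a configuration option, so treat the code as a model of the idea rather than something you would deploy as-is.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical list: parameters assumed not to change the response body.
IGNORED_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "fbclid", "gclid", "sessionid"}


def normalize_cache_key(url: str) -> str:
    """Drop ignored query parameters and sort the rest for a stable key."""
    parts = urlsplit(url)
    kept = [
        (name, value)
        for name, value in parse_qsl(parts.query, keep_blank_values=True)
        if name.lower() not in IGNORED_PARAMS
    ]
    kept.sort()  # ?a=1&b=2 and ?b=2&a=1 should map to the same entry
    return urlunsplit((parts.scheme, parts.netloc.lower(), parts.path, urlencode(kept), ""))


print(normalize_cache_key("https://Example.com/img/logo.png?utm_source=mail&w=200"))
# https://example.com/img/logo.png?w=200
```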

The key thing to remember: a single configuration change rarely moves the needle on its own. Combine TTL tuning, clean cache keys, and proactive monitoring, and you'll see your CHR climb steadily toward that 95% to 99% target.
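A per-content-type TTL policy might look something like the sketch below, which picks a `Cache-Control` value from the file extension. The extension list, the `personalized` flag, and the function name are assumptions for illustration. The 30-day and 60-second values mirror the guidance above, and the path is expected without a query string.

```python
from pathlib import PurePosixPath

# Assumed set of extensions treated as static assets.
STATIC_EXTENSIONS = {".png", ".jpg", ".jpeg", ".gif", ".svg", ".webp",
                     ".css", ".js", ".woff", ".woff2"}
THIRTY_DAYS = 30 * 24 * 60 * 60  # 2,592,000 seconds


def cache_control_for(path: str, personalized: bool = False) -> str:
    """Pick a Cache-Control header value for a response."""
    if personalized:
        return "private, no-store"             # never share per-user responses
    if PurePosixPath(path).suffix.lower() in STATIC_EXTENSIONS:
        return f"public, max-age={THIRTY_DAYS}"  # cache static assets aggressively
    return "public, max-age=60"                # short TTL for semi-static content


print(cache_control_for("/assets/app.js"))          # public, max-age=2592000
print(cache_control_for("/news/latest"))            # public, max-age=60
print(cache_control_for("/account", personalized=True))  # private, no-store
```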

What are the common cache hit ratio problems and how to diagnose them?

Cache hit ratio problems tend to fall into a handful of recognizable patterns. Here's what to watch for.

- Aggressive cache purging: Purging too frequently clears valid cached content before it can be reused. Every purge triggers a wave of misses, forcing your origin to rebuild the cache from scratch, often right when traffic is highest.

- Short or missing TTLs: When TTL values are too low, cached content expires before most users even request it. You end up with a cache that technically works but rarely serves content, pushing most requests back to the origin.

- Query string fragmentation: Unique query strings (session IDs, tracking parameters, timestamps) create distinct cache entries that never get reused. A URL requested with 500 unique query strings generates 500 cache misses instead of one miss followed by 499 hits.

- Excessive Vary header values: `Vary: User-Agent` or `Vary: Cookie` tells the cache to store separate copies for every header combination. In practice, this multiplies cache entries dramatically and collapses your CHR.

- Uncacheable content types: Dynamic responses, API endpoints returning personalized data, and authenticated pages often bypass the cache entirely. If these make up a large share of your traffic, your overall CHR will look deceptively low even when static caching is healthy.

- Cold cache after deployment: Every full deployment or cache purge resets your CHR to near zero. Without cache warming, the first wave of real users all hit the origin, which is often when traffic peaks.

- Origin errors causing cache bypass: When your origin returns 5xx errors, most caches don't store the response. High origin error rates mean those requests never populate the cache, creating a feedback loop of misses and increased origin load.

- Inconsistent cache-control headers: Conflicting directives, such as `no-store` on assets that should be cached or `private` on content that's actually public, silently prevent caching. You won't see an obvious error. You'll just see a CHR that won't climb.
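Several of the patterns above can be spotted from response headers alone. The following is a rough sketch of such a check. The function name and the wording of the findings are invented for this example, and it only looks at the `Cache-Control` and `Vary` issues named in the list.

```python
def audit_cache_headers(headers: dict[str, str]) -> list[str]:
    """Return findings for headers that tend to block or fragment caching."""
    normalized = {name.lower(): value.lower() for name, value in headers.items()}
    findings = []

    cache_control = normalized.get("cache-control", "")
    # Keep directive names only, e.g. "max-age=60" becomes "max-age".
    directives = {part.strip().split("=")[0] for part in cache_control.split(",") if part.strip()}

    if not directives:
        findings.append("no Cache-Control header: the cache falls back to its defaults")
    for blocker in ("no-store", "private"):
        if blocker in directives:
            findings.append(f"Cache-Control contains '{blocker}': shared caches will not store this")

    vary = {part.strip() for part in normalized.get("vary", "").split(",") if part.strip()}
    for risky in ("user-agent", "cookie"):
        if risky in vary:
            findings.append(f"Vary includes '{risky}': expect many cache entries per URL")
    return findings


for finding in audit_cache_headers(
    {"Cache-Control": "private, max-age=600", "Vary": "User-Agent, Accept-Encoding"}
):
    print(finding)
```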

To diagnose these, check the Gcore CDN dashboard and segment CHR by content type and URL pattern. A healthy overall CHR can mask a 40% CHR on your most-requested assets. Look for URLs with high miss counts, inspect response headers for cache-control directives, and trace whether misses cluster around specific file types or traffic events like deployments.
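If you have raw request logs rather than a dashboard, the same segmentation can be approximated offline. This sketch assumes each log record has already been parsed into a `(path, cache_status)` pair with a status of `HIT` or `MISS`. Real log formats and status values vary by provider.

```python
from collections import defaultdict
from pathlib import PurePosixPath


def chr_by_extension(records):
    """Map file extension -> CHR (%) from (path, cache_status) pairs."""
    counts = defaultdict(lambda: [0, 0])  # extension -> [hits, total]
    for path, status in records:
        extension = PurePosixPath(path).suffix.lower() or "(none)"
        counts[extension][1] += 1
        if status.upper() == "HIT":
            counts[extension][0] += 1
    return {ext: 100 * hits / total for ext, (hits, total) in counts.items()}


# Made-up sample records for illustration.
sample = [
    ("/img/a.png", "HIT"), ("/img/b.png", "HIT"), ("/img/c.png", "MISS"), ("/img/d.png", "HIT"),
    ("/api/cart", "MISS"), ("/api/user", "MISS"),
]
for extension, ratio in sorted(chr_by_extension(sample).items()):
    print(f"{extension}: {ratio:.0f}%")
```

Here the overall CHR is 50%, but the breakdown shows images at 75% and extensionless API paths at 0%, which points the investigation in a much more specific direction.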

How can Gcore help you improve cache hit ratio?

Gcore's CDN improves your cache hit ratio through edge caching across 210+ points of presence, putting content closer to users and reducing how often requests hit your origin server. Smart cache key configuration, flexible TTL controls, and granular cache-control settings give you the tools to push static content CHR above 95%.

When query string fragmentation or inconsistent headers drag your ratio down, you can normalize cache keys and strip unnecessary parameters directly in the Gcore CDN configuration. No code changes needed. The CDN dashboard segments CHR by content type and URL pattern, so you can spot exactly where misses are concentrated instead of chasing a single aggregate number.

Explore our global CDN to see how it fits your setup.

Frequently asked questions

What is the difference between cache hit ratio and cache hit rate?

They mean the same thing. Both terms describe the percentage of requests served from cache versus total requests received. You'll see them used interchangeably across CDN dashboards and caching documentation.

What is the difference between cache hit ratio and cache miss ratio?

Cache hit ratio and cache miss ratio are two sides of the same coin. If your hit ratio is 85%, your miss ratio is 15%, and they always add up to 100%. The miss ratio tells you how often requests fall through to the origin server, so a 20% miss ratio on a static-heavy site signals a real tuning opportunity.

Can a high cache hit ratio reduce bandwidth costs?

Yes, a high cache hit ratio directly cuts bandwidth costs. Every cache hit is a request your origin server doesn't have to handle, which means less data transferred between origin and edge. At 95% CHR, you're paying origin bandwidth costs on only 5% of your traffic.

What factors affect cache hit ratio?

Content type, TTL settings, and cache key configuration are the biggest factors. Static assets like images and CSS can hit 95% to 99% CHR, while personalized content scores lower because it can't be cached as broadly.

How does cache hit ratio differ between static and dynamic content?

Static assets like images, CSS, and JavaScript regularly hit 95% to 99% CHR because they rarely change and cache well across all users. Dynamic content is a different story. Personalized pages, API responses, and real-time data often drop below 50% since each request may be unique.

What is a typical cache hit ratio for a CDN?

For a CDN serving mixed content, a CHR above 80% is generally healthy. Well-optimized sites with mostly static assets typically land between 95% and 99%. If you're seeing below 70%, audit your TTL settings and cache key configuration.

How does cache hit ratio apply to database and application caching?

In database and application caching, CHR works the same way: it measures how often your app retrieves data from fast in-memory storage (like Redis) instead of hitting the database. A 90% CHR means nine out of 10 queries skip the database entirely, cutting response times and reducing load on your backend.
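The same measurement applies to an application cache. Below is a minimal cache-aside sketch that counts hits and misses. A plain dictionary stands in for an in-memory store such as Redis, and `load_user` is a hypothetical placeholder for a real database query.

```python
class CountingCache:
    """Cache-aside wrapper that tracks its own hit ratio (illustrative)."""

    def __init__(self, load_from_db):
        self._load = load_from_db
        self._store = {}   # stand-in for an in-memory store such as Redis
        self.hits = 0
        self.misses = 0

    def get(self, key):
        if key in self._store:
            self.hits += 1
            return self._store[key]
        self.misses += 1
        value = self._load(key)    # fall through to the database on a miss
        self._store[key] = value
        return value

    @property
    def hit_ratio(self) -> float:
        total = self.hits + self.misses
        return 100 * self.hits / total if total else 0.0


def load_user(user_id):
    """Hypothetical slow database lookup."""
    return {"id": user_id, "name": f"user-{user_id}"}


cache = CountingCache(load_user)
for _ in range(10):
    cache.get(42)

print(cache.hit_ratio)  # 90.0
```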
