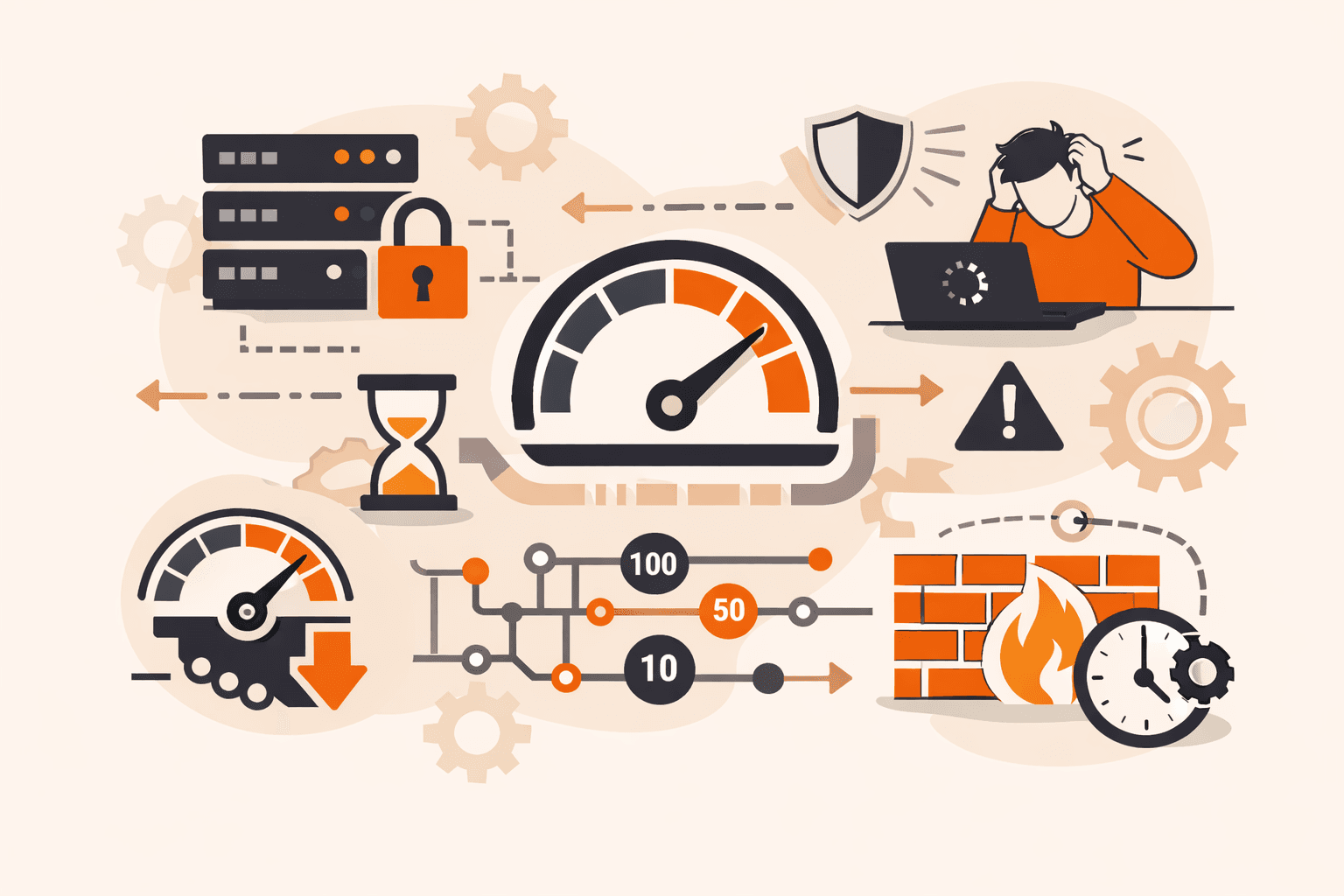

Your login page just got hammered with 10,000 password attempts in under a minute. Your API servers are buckling under a flood of requests. Legitimate users can't access the service while infrastructure costs spike. Without proper controls, a single malicious actor (or even an overzealous legitimate user) can bring your entire system to its knees.

The numbers tell a stark story: a server sized for around 100 requests per second at peak can face thousands of requests per second during an attack. Even well-meaning applications can accidentally overwhelm your endpoints, degrading performance for everyone. The result? Downtime, security breaches, and users abandoning the platform for competitors.

You'll discover how to control incoming request traffic, protect infrastructure from overload and DDoS attacks, and ensure fair resource distribution across all your users. You'll learn the proven algorithms that power this protection, from token buckets to sliding window counters, and how to implement the right approach for your specific needs.

What is rate limiting?

Rate limiting controls how many requests a user, application, or IP address can make to a server, API, or network within a specific time window. It protects systems from overload by capping incoming traffic at sustainable levels. For example, you might allow 100 requests per minute per user before blocking or queuing additional requests. This prevents performance degradation during traffic spikes and stops malicious actors from overwhelming infrastructure with excessive requests. Rate limiting also ensures fair resource distribution across all users, so no single client can monopolize server capacity at the expense of others.

How does rate limiting work?

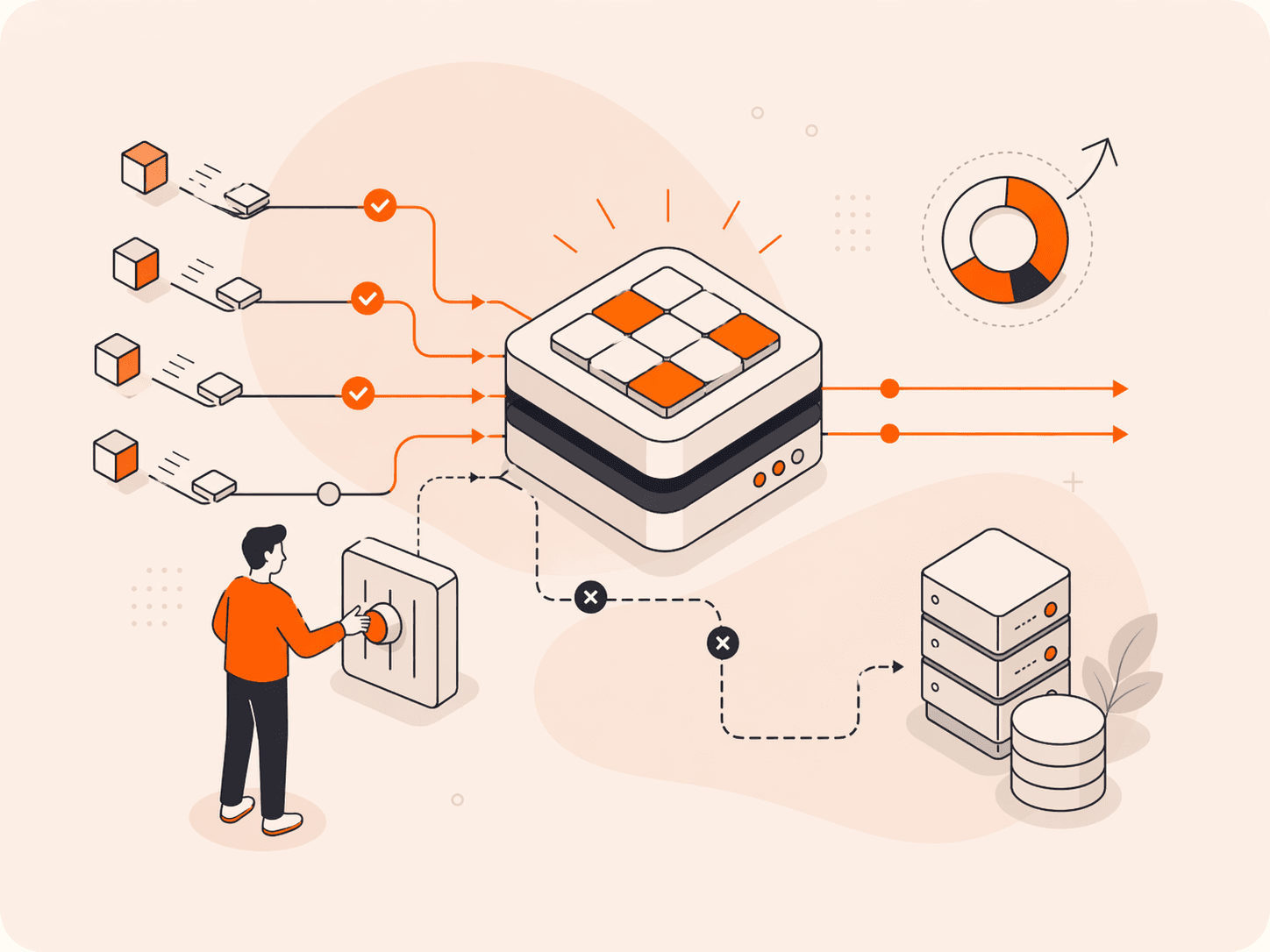

Rate limiting monitors incoming requests and enforces predefined thresholds that control how many operations a client can perform within a specific time window. When a request arrives, the system checks whether the client has exceeded their allowed quota. If they're within limits, the request proceeds. If they've hit the threshold, the system rejects the request and typically returns an HTTP 429 status code.
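The check described above can be sketched in a few lines of Python. This is a minimal illustration, not a production limiter: the class name `FixedWindowLimiter`, the 100-requests-per-minute quota, and the in-memory dictionary are all assumptions made for the example, and the clock is passed in as `now` so the behavior is easy to follow.

```python
import math
from dataclasses import dataclass, field


@dataclass
class FixedWindowLimiter:
    """Counts requests per client in fixed windows (hypothetical example class)."""
    limit: int = 100            # assumed quota: requests allowed per window
    window_seconds: int = 60    # assumed window length in seconds
    # client_id -> (window index, requests counted in that window)
    _counts: dict = field(default_factory=dict)

    def check(self, client_id: str, now: float) -> tuple[int, dict]:
        """Return (HTTP status, response headers) for one incoming request."""
        window = int(now // self.window_seconds)
        seen_window, count = self._counts.get(client_id, (window, 0))
        if seen_window != window:
            count = 0  # a new window has started, so the quota resets
        if count >= self.limit:
            # Seconds until the current window ends and the quota resets.
            retry_after = math.ceil((window + 1) * self.window_seconds - now)
            return 429, {"Retry-After": str(max(retry_after, 1))}
        self._counts[client_id] = (window, count + 1)
        return 200, {}
```

With `limit=2`, a client's third request inside the same minute gets a 429 and a `Retry-After` value telling it how long to wait, while other clients are unaffected.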

The enforcement happens through algorithms that track request counts. The token bucket algorithm adds tokens to a user's bucket at a fixed rate (let's say one token per second). Each request consumes a token. When the bucket's empty, new requests get blocked until tokens refill. This approach allows short bursts while maintaining overall control.
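A minimal token bucket sketch, assuming one token per second as in the example above. The capacity of five tokens, the `TokenBucket` name, and the explicit `now` argument are illustrative choices rather than part of any particular library.

```python
from dataclasses import dataclass


@dataclass
class TokenBucket:
    capacity: float = 5.0      # assumed maximum burst size
    refill_rate: float = 1.0   # tokens added per second
    tokens: float = 5.0        # the bucket starts full
    last_refill: float = 0.0   # timestamp of the previous refill

    def allow(self, now: float) -> bool:
        """Refill for the time elapsed, then try to spend one token."""
        elapsed = max(0.0, now - self.last_refill)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_rate)
        self.last_refill = max(self.last_refill, now)
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False
```

The capacity sets how large a burst can be, and the refill rate sets the long-run average. A client that has been idle can spend its saved tokens at once, then falls back to the refill rate.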

The leaky bucket algorithm takes a different approach. It processes requests at a constant rate from a fixed-length queue, smoothing out traffic spikes. Requests that arrive when the queue's full get dropped immediately. This prevents sudden bursts but can delay legitimate requests during high-traffic periods.
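The queue behavior can be sketched as follows. To keep the example small, it assumes an external scheduler calls `leak()` once per fixed interval; the class and method names are hypothetical.

```python
from collections import deque
from typing import Optional


class LeakyBucket:
    """Fixed-length queue drained one request at a time (illustrative sketch)."""

    def __init__(self, queue_size: int) -> None:
        self.queue_size = queue_size   # assumed maximum number of queued requests
        self.queue: deque = deque()

    def offer(self, request: str) -> bool:
        """Enqueue a request, or drop it when the queue is already full."""
        if len(self.queue) >= self.queue_size:
            return False
        self.queue.append(request)
        return True

    def leak(self) -> Optional[str]:
        """Process the oldest request; called at a constant interval by a scheduler."""
        return self.queue.popleft() if self.queue else None
```

Because output is paced by the scheduler, the longest a queued request waits is roughly the queue size divided by the drain rate. Anything arriving beyond that is dropped rather than delayed.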

Most systems apply these limits at multiple levels. You might see per-user limits (100 requests per minute), per-IP restrictions, or endpoint-specific caps. Login endpoints often block after three or four failed attempts to prevent brute-force attacks. API providers frequently set different thresholds based on service tiers, giving premium users higher request allowances than free-tier customers.

What are the main types of rate limiting?

Rate limiting types define how systems control and measure incoming request traffic. The main types are listed below.

- User-based limiting: This restricts requests per individual user or account, typically tracked through API keys or authentication tokens. A service might allow 1,000 requests per hour per user, blocking additional attempts until the window resets. It's the most common approach for SaaS platforms and public APIs.

- IP-based limiting: Systems track requests from specific IP addresses and enforce caps to prevent abuse from single sources. This method helps mitigate floods by identifying and throttling IPs that send excessive traffic, although attacks spread across many addresses need additional defenses. The downside? Shared IPs like corporate networks or VPNs can trigger false positives.

- Server-based limiting: Each server enforces its own request threshold, commonly set at 100 requests per second during peak loads. When one server hits capacity, load balancers redirect traffic to available servers. Music streaming services implement this to maintain responsiveness across their infrastructure.

- Geography-based limiting: Requests route to the nearest regional servers first, but switch to distant servers after hitting time thresholds. This balances global resource utilization while keeping latency low for most users. It's particularly effective for distributed systems with worldwide presence.

- Endpoint-based limiting: Different API endpoints get unique rate limits based on resource intensity. A data export endpoint might allow 10 requests per minute, while a simple status check permits 1,000. This protects critical resources without restricting lightweight operations.

- Concurrent request limiting: Systems cap the number of simultaneous active requests rather than total requests over time. If you're processing five requests and hit the limit, the sixth waits in queue. This prevents resource exhaustion from long-running operations.

- Network-level limiting: Traffic gets controlled at the infrastructure layer before reaching application servers. Firewalls and edge devices enforce these limits, blocking malicious traffic early. It's your first line of defense against volumetric attacks.
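Most of the types above count requests over time, but concurrent request limiting counts requests in flight. A minimal sketch using a semaphore from Python's standard library, assuming a limit of five active requests; the `ConcurrencyLimiter` class is a hypothetical wrapper written for this example.

```python
import threading
from contextlib import contextmanager
from typing import Optional


class ConcurrencyLimiter:
    def __init__(self, max_in_flight: int = 5) -> None:
        # One semaphore permit per request allowed to run at the same time.
        self._slots = threading.BoundedSemaphore(max_in_flight)

    @contextmanager
    def slot(self, timeout: Optional[float] = None):
        """Hold one slot while a request runs; wait up to `timeout` seconds for it."""
        if not self._slots.acquire(timeout=timeout):
            raise TimeoutError("no free request slot")
        try:
            yield
        finally:
            self._slots.release()   # free the slot even if the handler fails
```

A handler would wrap its work in `with limiter.slot(timeout=2.0):`. With no timeout, the extra request simply waits until an earlier one finishes.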

What are the benefits of implementing rate limiting?

Rate limiting delivers measurable improvements in system performance, security, and cost management. The benefits are listed below.

- DDoS protection: Rate limiting blocks excessive requests from single sources before they overwhelm infrastructure. When attackers attempt to flood servers with traffic, the system automatically rejects requests beyond the threshold, keeping services available for legitimate users.

- Resource optimization: By controlling request rates, you prevent any single user or service from monopolizing server capacity. This ensures fair distribution across all users and maintains consistent response times even during traffic spikes.

- Cost control: Rate limiting reduces infrastructure expenses by preventing unnecessary scaling and bandwidth consumption. You'll avoid over-provisioning servers to handle abnormal traffic patterns, and you can set usage caps that align with your SaaS pricing tiers.

- API stability: Limiting requests per endpoint protects critical services from overload while maintaining availability. For example, login APIs typically block after three or four failed attempts, preventing brute-force attacks while keeping the service responsive for legitimate authentication requests.

- Load balancing: When one server reaches its rate limit, the system can forward excess requests to other available servers. This prevents bottlenecks and distributes workload effectively across infrastructure without manual intervention.

- Performance consistency: Rate limiting maintains predictable response times by preventing request bursts that cause latency spikes. Your application delivers steady performance because the system processes requests at a sustainable pace rather than overwhelming resources during peaks.

- Security layer: Beyond DDoS defense, rate limiting slows down abuse such as credential stuffing or data scraping by capping how quickly any one client can retry. A sudden rise in rejected requests also acts as an early warning that helps you spot malicious behavior before it escalates into a full-scale attack.

What are the most common rate limiting algorithms?

Rate limiting algorithms are the specific methods that track, measure, and enforce request quotas over time. Each algorithm balances different priorities like burst handling, memory efficiency, and fairness. The most common algorithms are listed below.

- Token bucket: Tokens are added to a bucket at a fixed rate, and each request consumes one token. If the bucket is empty, the request gets rejected until new tokens arrive. This algorithm allows controlled bursts when tokens accumulate during idle periods, making it flexible for real-world traffic patterns.

- Leaky bucket: Requests enter a fixed-size queue and are processed at a constant rate, similar to water dripping from a bucket with a hole. This smooths out traffic spikes by enforcing steady output. The downside is that requests arriving while the queue is full get dropped, and queued requests can wait a long time under sustained load, so late arrivals are the ones that lose out.

- Fixed window: The system counts requests within fixed time intervals, like 100 requests per minute starting at the top of each minute. It's simple to implement but vulnerable to burst attacks at window boundaries. Users can send 100 requests at 12:00:59 and another 100 at 12:01:00, doubling the intended rate.

- Sliding window log: Every request timestamp gets stored, and the system counts requests within a rolling time window. This provides accurate rate limiting without boundary vulnerabilities. The tradeoff is higher memory usage since you're storing individual timestamps for each request.

- Sliding window counter: This combines fixed windows with weighted averages to estimate request counts in rolling periods. It's more memory-efficient than logging every timestamp while avoiding the boundary issues of fixed windows. The calculation uses the current window's count plus a weighted portion of the previous window.
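The sliding window counter's weighted calculation is the least obvious item in the list above, so here is a minimal sketch of it. It estimates the rolling count as the current window's count plus the previous window's count multiplied by the fraction of the rolling window that still overlaps it. The class tracks a single client, and its names and defaults are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class SlidingWindowCounter:
    limit: int = 100              # assumed quota per rolling window
    window_seconds: float = 60.0  # assumed window length
    current_window: int = 0       # index of the fixed window being counted
    current_count: int = 0
    previous_count: int = 0

    def allow(self, now: float) -> bool:
        window = int(now // self.window_seconds)
        if window != self.current_window:
            # Roll forward; the old count only carries over from the adjacent window.
            adjacent = window == self.current_window + 1
            self.previous_count = self.current_count if adjacent else 0
            self.current_window = window
            self.current_count = 0
        # How far we are into the current fixed window, from 0.0 to 1.0.
        elapsed_fraction = (now % self.window_seconds) / self.window_seconds
        estimated = self.current_count + self.previous_count * (1.0 - elapsed_fraction)
        if estimated >= self.limit:
            return False
        self.current_count += 1
        return True
```

This closes the fixed-window boundary gap: a client that sends 100 requests at 12:00:59 is still charged for them at 12:01:00, so the second burst of 100 is rejected. Only two counters are stored per client, compared with one timestamp per request in a sliding window log.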

How do you implement rate limiting best practices?

You implement rate limiting best practices by identifying your system's capacity constraints and choosing algorithms that match your traffic patterns and protection goals.

- Measure your baseline capacity first. Run load tests to determine how many requests per second your servers can handle without degrading performance. Track metrics like response time, CPU usage, and memory consumption under different loads. This gives you the data to set realistic limits. If your API starts slowing down at 500 requests per second, set your limit around 400 to maintain headroom.

- Choose the right algorithm for your use case. Token bucket works well when you need to allow occasional bursts while maintaining average rate control. Leaky bucket is better when you need strict, predictable traffic flow. For APIs serving different customer tiers, consider implementing multiple token buckets with different refill rates. Premium users get 1,000 tokens per minute while free tier users get 100.

- Apply different limits to different endpoints. Not all requests consume equal resources. A simple health check endpoint can handle thousands of requests per second, but a complex search query might need stricter limits. Set resource-based limits that reflect actual server load. Maybe 500 requests per second for reads but only 50 for writes.

- Implement geography-based routing when you operate globally. Direct requests to the nearest server first, but set time thresholds that trigger forwarding to distant servers if local capacity fills up. This balances low latency for users with effective resource utilization across infrastructure.

- Build in progressive enforcement rather than hard cutoffs. When users approach their limits, return warning headers showing remaining quota before blocking requests entirely. After three or four failed login attempts, add exponential backoff delays instead of immediate blocks. This stops brute-force attacks while reducing friction for legitimate users who mistyped passwords.

- Monitor and adjust limits based on real traffic patterns. Track which clients hit limits most often and whether those limits actually prevent overload or just frustrate users. If you're blocking 20% of requests during peak hours, your limits might be too aggressive. Set up alerts when rejection rates exceed 5% so you can investigate whether you need more capacity or better rate distribution.

The key thing is treating rate limiting as an ongoing optimization process, not a one-time configuration. Your traffic patterns will change as the service grows.
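Progressive enforcement can be sketched with two small helpers. Both are hypothetical: the three free attempts, one-second base delay, and five-minute cap are assumed values, and the `X-RateLimit-*` header names follow a widely used convention rather than a formal standard.

```python
def login_delay_seconds(failed_attempts: int, free_attempts: int = 3,
                        base_delay: float = 1.0, max_delay: float = 300.0) -> float:
    """Delay to impose before the next login attempt is accepted."""
    if failed_attempts < free_attempts:
        return 0.0
    # The delay doubles with each failure past the free allowance, up to max_delay.
    exponent = min(failed_attempts - free_attempts, 30)
    return min(max_delay, base_delay * (2 ** exponent))


def quota_headers(limit: int, used: int, reset_in_seconds: int) -> dict:
    """Warning headers that tell a client how much quota it has left."""
    return {
        "X-RateLimit-Limit": str(limit),
        "X-RateLimit-Remaining": str(max(0, limit - used)),
        "X-RateLimit-Reset": str(reset_in_seconds),
    }
```

A user who mistypes a password twice sees no delay, while an automated guesser quickly runs into waits of minutes per attempt.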

What are the main challenges with rate limiting?

Rate limiting challenges arise from the technical complexity of enforcing policies across distributed systems while maintaining performance and fairness. Implementation difficulties can lead to service disruptions, user frustration, or security gaps if not properly addressed.

- Distributed state synchronization: When rate limits span multiple servers or data centers, keeping count data consistent becomes complex. A user might exceed their quota by hitting different servers before the system updates globally, or conversely, legitimate requests might get blocked due to synchronization delays between nodes.

- Request classification accuracy: Distinguishing between legitimate traffic spikes and malicious attacks isn't always straightforward. A sudden surge could be a DDoS attack or just users responding to a marketing campaign. Misclassification leads to either blocking real customers or failing to stop threats.

- Queue starvation in fixed-rate systems: Leaky bucket algorithms process requests at a constant rate, so a queued request can wait up to the queue length divided by the drain rate, and requests that arrive while the queue is full are dropped. This creates unpredictable delays and rejections for users who happen to make requests during peak times, even if they're well within their personal quotas.

- Geographic distribution latency: Geography-based rate limiting needs to track usage across regions and decide when to forward requests to distant servers. The time it takes to check limits and route traffic can add 50 to 200 milliseconds of latency, degrading user experience for applications that need sub-100ms response times.

- Granularity versus performance tradeoffs: Fine-grained limits per user, endpoint, and time window provide better control but require more memory and processing power. A system tracking 100,000 users across 50 endpoints with per-second limits needs to maintain and update five million counters constantly.

- Burst handling complexity: Real user behavior includes legitimate bursts, such as someone refreshing a page multiple times or an application making several API calls in quick succession. Token bucket algorithms handle this by allowing temporary bursts, but tuning the bucket size and refill rate requires extensive testing to avoid punishing normal usage patterns.

- Cost optimization conflicts: Auto-scaling based on load works against rate limiting goals. When traffic increases, you want to add servers to handle legitimate growth, but you also need to detect and block attack traffic before it triggers expensive scaling events that could cost thousands per hour.
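The distributed state problem above is easy to underestimate, so here is a toy simulation of its worst case. It assumes one client's requests are spread round-robin across nodes that never synchronize within the window; the function name and parameters are made up for this illustration.

```python
from collections import Counter


def accepted_requests(total_requests: int, limit: int, nodes: int, shared: bool) -> int:
    """Count how many of one client's requests are accepted in a single window."""
    counts = Counter()   # counter key -> requests counted so far
    accepted = 0
    for i in range(total_requests):
        # Shared state: every node consults counter 0. Local state: one counter per node.
        key = 0 if shared else i % nodes
        if counts[key] < limit:
            counts[key] += 1
            accepted += 1
    return accepted
```

With a limit of 100 and three unsynchronized nodes, the client gets up to 300 requests through, which is why distributed limiters usually rely on a shared store or periodic synchronization. Real systems fall somewhere between the two extremes depending on how often nodes exchange counts.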

How can Gcore help with rate limiting?

Gcore helps with rate limiting through edge-based protection that runs across 210+ global PoPs, letting you enforce request quotas before traffic reaches your origin servers. The Web Application Firewall and DDoS protection tools give you granular control over request rates per IP, geographic region, or API endpoint, all configured through a single dashboard.

Here's what makes this approach effective: enforcement happens at the edge, within 30 ms of your users, so legitimate requests aren't caught in bottlenecks while malicious traffic gets blocked closer to its source. You can set different limits for different endpoints, combine rate limiting with bot detection to catch credential stuffing attempts, and adjust thresholds in real time as traffic patterns shift.

The Gcore platform handles the complexity of distributed rate limiting for you, so you don't need to worry about synchronizing counters across multiple servers or managing token buckets manually. The system scales automatically during traffic spikes, whether that's a product launch or a DDoS attack.

Explore Gcore's application security solutions at gcore.com/waap.

Frequently asked questions

What's the difference between rate limiting and load balancing?

Rate limiting controls how many requests a client can send, while load balancing distributes incoming traffic across multiple servers. Rate limiting caps the total demand any one client can place on the system, whereas load balancing spreads the accepted traffic so that no single server handles too much of it.

How much does rate limiting impact server performance?

Rate limiting adds minimal overhead, typically consuming less than 1% of server resources when properly implemented. The performance gain from preventing overload far outweighs the computational cost of checking request counts against thresholds.

Is rate limiting effective against advanced attacks?

No, rate limiting alone isn't effective against advanced attacks like distributed botnets or credential stuffing from multiple IP addresses. It works best as one layer in a defense strategy that includes behavioral analysis, CAPTCHA challenges, and geographic filtering to detect and block coordinated threats.

What happens when legitimate users hit rate limits?

When legitimate users hit rate limits, they typically receive an HTTP 429 "Too Many Requests" error and must wait before making additional requests. Most systems include a `Retry-After` header indicating when the user can resume, and well-designed rate limiting implements algorithms like token bucket to allow brief bursts while protecting against sustained overload.
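On the client side, a well-behaved application reads that header before retrying. The sketch below assumes the header value has already been pulled out of the response; `retry_delay_seconds` is a hypothetical helper that handles both forms the header can take, a number of seconds or an HTTP date.

```python
from datetime import datetime, timezone
from email.utils import parsedate_to_datetime
from typing import Optional


def retry_delay_seconds(retry_after: Optional[str], now: datetime,
                        default: float = 1.0) -> float:
    """Turn a Retry-After header value into a wait time in seconds."""
    if retry_after is None:
        return default
    value = retry_after.strip()
    if value.isdigit():
        return float(value)                  # seconds form, e.g. "120"
    try:
        when = parsedate_to_datetime(value)  # date form, e.g. "Wed, 21 Oct 2015 07:28:00 GMT"
    except (TypeError, ValueError):
        return default                       # unparseable value: use the fallback
    if when.tzinfo is None:
        when = when.replace(tzinfo=timezone.utc)
    return max(0.0, (when - now).total_seconds())
```

Here `now` is expected to be a timezone-aware datetime, for example `datetime.now(timezone.utc)`.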

How do you set optimal rate limiting thresholds?

Set optimal thresholds by analyzing baseline traffic patterns during normal and peak periods, then adding a 20% to 30% buffer to accommodate legitimate spikes. Test thresholds with different user segments and adjust based on real-world metrics like error rates and user complaints.
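As a rough sketch of that calculation, the helper below takes observed per-client request counts, picks a high percentile as the baseline, and adds the buffer. Using the 95th percentile and a 25% buffer are assumptions for the example, and `suggested_limit` is a hypothetical name.

```python
import math


def suggested_limit(per_minute_counts: list[int], buffer: float = 0.25,
                    percentile: int = 95) -> int:
    """Baseline at the given percentile of observed traffic, plus a buffer."""
    if not per_minute_counts:
        raise ValueError("need at least one observation")
    ordered = sorted(per_minute_counts)
    # Nearest-rank percentile: the value at or below which `percentile`% of samples fall.
    rank = max(1, math.ceil(percentile * len(ordered) / 100))
    baseline = ordered[rank - 1]
    return math.ceil(baseline * (1.0 + buffer))
```

Using a percentile rather than the maximum keeps a single outlier, which may itself be abusive traffic, from inflating the limit.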

Can rate limiting be bypassed by attackers?

Yes, attackers can bypass basic rate limiting through techniques like distributed attacks from multiple IP addresses, IP rotation, or exploiting poorly configured thresholds. Effective defense requires layered protection combining rate limiting with CAPTCHA challenges, device fingerprinting, behavioral analysis, and geographic filtering to detect and block advanced bypass attempts.

What are the legal considerations for rate limiting?

Rate limiting must comply with terms of service and acceptable use policies, as overly restrictive limits could breach contracts guaranteeing service availability or API access levels. Organizations should also consider accessibility laws, ensuring rate limits don't disproportionately block legitimate users with assistive technologies that generate higher request volumes.
